Team from MIT’s Computer Science and Artificial Intelligence Lab detects dementia using AI and a digital pen.

For all of the advances in medical technology, many of the world’s most widely used diagnostic tools essentially involve just two things: pen and paper.

Tests such as the Montreal Cognitive Assessment (MoCA) and the Clock Drawing Test (CDT) are used to detect cognitive change arising from a wide range of causes, from strokes and concussions to dementias such as Alzheimer’s disease.

What’s disconcerting, though, is that, with dementia and other disorders growing in prevalence, most current diagnostic methods detect cognitive impairment only after it starts affecting people’s lives. In Alzheimer’s, for example, changes in the brain may occur 10 or more years before the cognitive change becomes noticeable, and no easily administered test can detect these changes at the very earliest stage.

At least, not yet.

This month researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) were part of a team that published a paper demonstrating a predictive model that, coupled with existing hardware, opens up the possibility of detecting disorders such as dementia earlier than ever before.

Clock-drawing test

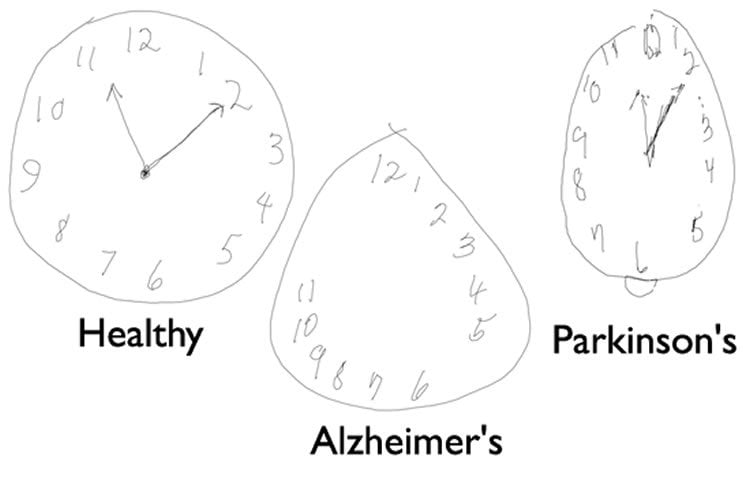

For several decades, doctors have screened for conditions including Parkinson’s and Alzheimer’s with the CDT, which asks subjects to draw an analog clock-face showing a specified time, and to copy a pre-drawn clock.

But the test has limitations, because its benchmarks rely on doctors’ subjective judgments, such as determining whether a clock circle has “only minor distortion.”

CSAIL researchers were particularly struck by the fact that CDT analysis was typically based on the person’s final drawing rather than on the process as a whole.

Enter the Anoto Live Pen, a digitizing ballpoint pen that measures its position on the paper upwards of 80 times a second, using a camera built into the pen. The pen provides data that are far more precise than can be measured on an ordinary drawing, and captures timing information that allows the system to analyze each and every one of a subject’s movements and hesitations.
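To make the idea of analyzing "movements and hesitations" concrete, here is a minimal sketch of how a timestamped stream of pen positions could be grouped into strokes and pauses. It is not the team's software. The `(time, x, y)` sample layout, the function names, and both thresholds are assumptions chosen for illustration, and the sketch assumes the pen reports samples only while its tip is on the paper.

```python
from typing import List, Tuple

# Hypothetical sample layout: (time in seconds, x, y).
Sample = Tuple[float, float, float]


def split_strokes(samples: List[Sample], max_gap: float = 0.05) -> List[List[Sample]]:
    """Group samples into strokes.

    At roughly 80 samples per second, consecutive samples within one stroke
    are about 0.0125 s apart. A gap larger than max_gap (an assumed
    threshold) is treated as the pen having left the paper.
    """
    strokes: List[List[Sample]] = []
    for sample in samples:
        if strokes and sample[0] - strokes[-1][-1][0] <= max_gap:
            strokes[-1].append(sample)   # continue the current stroke
        else:
            strokes.append([sample])     # start a new stroke
    return strokes


def pauses(strokes: List[List[Sample]], min_pause: float = 0.5) -> List[Tuple[float, float]]:
    """Return (start_time, duration) for each gap between strokes that lasts
    at least min_pause seconds (an assumed threshold)."""
    found = []
    for prev, nxt in zip(strokes, strokes[1:]):
        duration = nxt[0][0] - prev[-1][0]
        if duration >= min_pause:
            found.append((prev[-1][0], duration))
    return found
```

For example, three samples spaced 0.0125 seconds apart followed by two more samples a second later would yield two strokes and one pause of just under a second.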

Research at Lahey Hospital and Medical Center and CSAIL produced novel software for analyzing this version of the test, producing what the team calls the digital Clock Drawing Test (dCDT).

Predictive power of drawings

Working with a collection of 2,600 tests administered over the past nine years, the team developed computational models that show early promise in detecting whether someone has a cognitive impairment, and even in identifying which condition they may have.

They tested their models against standard methods used by physicians and found that the machine learning models were significantly more accurate.

“We’ve improved the analysis so that it is automated and objective,” says CSAIL principal investigator Cynthia Rudin, a professor at the Sloan School of Management and co-author of the paper. “With the right equipment, you can get results wherever you want, quickly, and with higher accuracy.”

Some of the machine learning techniques they used were designed to produce “transparent” classifiers, which provide insights into what factors are important for screening and diagnosis.

“These examples help calibrate the predictive power of each part of the drawing,” says first author William Souillard-Mandar, a graduate student at CSAIL. “They allow us to extract thousands of features from the drawing process that give hints about the subject’s cognitive state, and our algorithms help determine which ones can make the most accurate prediction.”
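One kind of transparent classifier named in the paper's abstract is a rule list: an ordered series of if-then checks in which the first matching rule decides the outcome, so a clinician can see exactly why a drawing was flagged. The sketch below shows only the general shape of such a model. The feature names, thresholds, and labels are invented for illustration and are not taken from the paper, which learned its models from data.

```python
from typing import Callable, Dict, List, Tuple

Features = Dict[str, float]
Rule = Tuple[str, Callable[[Features], bool], str]  # (description, condition, label)

# Hypothetical rules; the features and cutoffs are placeholders, not findings.
EXAMPLE_RULES: List[Rule] = [
    ("long pauses relative to drawing time",
     lambda f: f["think_ink_ratio"] > 3.0,
     "flag for follow-up"),
    ("small clock drawn slowly",
     lambda f: f["clock_width_mm"] < 40 and f["total_time_s"] > 60,
     "flag for follow-up"),
]


def classify(features: Features, rules: List[Rule], default: str) -> Tuple[str, str]:
    """Apply rules in order and return (label, description of the rule that fired).

    If no rule matches, return the default label.
    """
    for description, condition, label in rules:
        if condition(features):
            return label, description
    return default, "no rule matched"
```

Because the function returns the rule that fired along with the label, every prediction comes with a human-readable reason, which is the property that makes this style of model easy to audit.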

Souillard-Mandar and Rudin co-wrote the paper with MIT Professor Randall Davis and researchers Dana Penney of Lahey Hospital, Rhoda Au of Boston University, David Libon of Drexel University, Catherine Price of the University of Florida, Melissa Lamar of the University of Illinois Chicago, and Rod Swenson of the University of North Dakota Medical School.

Different disorders reveal themselves in different ways on the CDT, which asks people to draw a clock showing 10 minutes after 11, and then asks them to copy a pre-drawn clock showing that time.

For example, while healthy adults spend more time on the dCDT thinking (with the pen off the paper) than “inking,” memory-impaired subjects spend even more of their time thinking rather than inking. Parkinson’s subjects, meanwhile, take longer to draw clocks that tend to be smaller, suggesting that they are working harder but producing less, an insight not detectable with previous analysis systems.
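The quantities in this comparison (time spent inking, time spent thinking, and the size of the finished clock) are simple to compute once a drawing is stored as timestamped strokes. The following is a rough sketch under stated assumptions rather than the researchers' feature code: each stroke is assumed to be a list of `(time, x, y)` tuples, the function name is hypothetical, and thinking time here counts only the gaps between strokes, not any delay before the first one.

```python
from typing import Dict, List, Tuple

Sample = Tuple[float, float, float]  # assumed layout: (time in seconds, x, y)


def timing_summary(strokes: List[List[Sample]]) -> Dict[str, float]:
    """Summarize ink time, think time, and drawing size for one drawing.

    Strokes must be non-empty and in chronological order.
    """
    # Ink time: how long the pen was on the paper, summed over strokes.
    ink = sum(stroke[-1][0] - stroke[0][0] for stroke in strokes)
    # Total time: from the first sample of the first stroke to the last sample.
    total = strokes[-1][-1][0] - strokes[0][0][0]
    # Think time: whatever part of the total was spent with the pen lifted.
    think = total - ink
    xs = [x for stroke in strokes for _, x, _ in stroke]
    ys = [y for stroke in strokes for _, _, y in stroke]
    return {
        "ink_s": ink,
        "think_s": think,
        "total_s": total,
        "width": max(xs) - min(xs),    # bounding-box width of the drawing
        "height": max(ys) - min(ys),   # bounding-box height of the drawing
    }
```

A drawing with a high `think_s` relative to `ink_s`, or a long `total_s` paired with a small bounding box, would correspond to the patterns described above.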

Potential time-saver

Beyond the significant potential to improve people’s health are the work’s implications for automating a tedious scoring process.

“Neurologists see dozens of patients every day, and so the amount of time they spend sifting through databases and hand-coding their observations adds up very quickly,” says Phil Cohen, a vice president at VoiceBox Technologies who has done extensive research involving digital-pen technologies. “The work is still in a relatively early state, but this has the potential to not just better detect disease, but save clinicians a lot of time.”

Now that the team has shown the dCDT’s effectiveness in these tests, they are working to develop an interface that would allow neurologists and non-specialists alike to more easily use the technology in hospitals.

“We’re eager to see how well our model will work with other screening tools we’re developing,” Davis says. “As researchers, we’re just beginning to investigate all of the ways that your subtle behaviors reveal things about your brain.”

Funding: Preparation of this work was supported by the Netherlands Science Foundation (432-08-002 to C. K. W. De Dreu and VENI 016-155-082 to M. E. Kret).

Source: Adam Conner-Simons – MIT/CSAIL

Image Credit: The image is credited to the researchers/MIT.

Original Research: The research paper “Learning Classification Models of Cognitive Conditions from Subtle Behaviors in the Digital Clock Drawing Test” by William Souillard-Mandar, Randall Davis, Cynthia Rudin, Rhoda Au, David J. Libon, Rodney Swenson, Catherine C. Price, Melissa Lamar, and Dana L. Penney will be published in the MLJ special issue for Healthcare and Medicine. Full open access preview (pdf) is available from MIT.

Abstract

Learning Classification Models of Cognitive Conditions from Subtle Behaviors in the Digital Clock Drawing Test

The Clock Drawing Test – a simple pencil and paper test – has been used for more than 50 years as a screening tool to differentiate normal individuals from those with cognitive impairment, and has proven useful in helping to diagnose cognitive dysfunction associated with neurological disorders such as Alzheimer’s disease, Parkinson’s disease, and other dementias and conditions.

We have been administering the test using a digitizing ballpoint pen that reports its position with considerable spatial and temporal precision, making available far more detailed data about the subject’s performance.

Using pen stroke data from these drawings categorized by our software, we designed and computed a large collection of features, then explored the tradeoffs in performance and interpretability in classifiers built using a number of different subsets of these features and a variety of different machine learning techniques. We used traditional machine learning methods to build prediction models that achieve high accuracy. We operationalized widely used manual scoring systems so that we could use them as benchmarks for our models. We worked with clinicians to define guidelines for model interpretability, and constructed sparse linear models and rule lists designed to be as easy to use as scoring systems currently used by clinicians, but more accurate.

While our models will require additional testing for validation, they offer the possibility of substantial improvement in detecting cognitive impairment earlier than currently possible, a development with considerable potential impact in practice.
