Summary: Researchers at UCSD have developed a new gesture recognition glove that can wirelessly translate the ASL alphabet into text. The glove can also communicate back by controlling a virtual hand and mimicking gestures.

Source: UCSD.

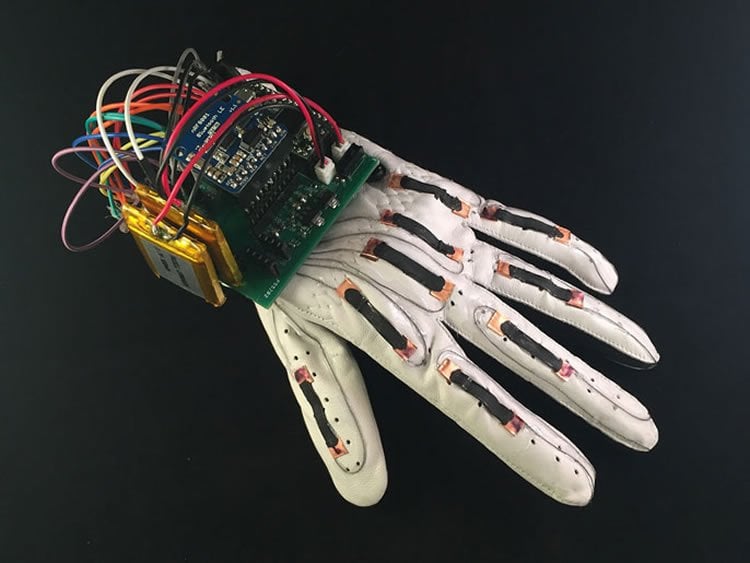

Engineers at the University of California San Diego have developed a smart glove that wirelessly translates the American Sign Language alphabet into text and controls a virtual hand to mimic sign language gestures. The device, which engineers call “The Language of Glove,” was built for less than $100 using stretchable and printable electronics that are inexpensive, commercially available and easy to assemble. The work was published on July 12 in the journal PLOS ONE.

In addition to decoding American Sign Language gestures, researchers are developing the glove to be used in a variety of other applications ranging from virtual and augmented reality to telesurgery, technical training and defense.

“Gesture recognition is just one demonstration of this glove’s capabilities,” said Timothy O’Connor, a nanoengineering Ph.D. student at UC San Diego and the first author of the study. “Our ultimate goal is to make this a smart glove that in the future will allow people to use their hands in virtual reality, which is much more intuitive than using a joystick and other existing controllers. This could be better for games and entertainment, but more importantly for virtual training procedures in medicine, for example, where it would be advantageous to actually simulate the use of one’s hands.”

The glove is unique in that its sensors are made from stretchable materials, and it is inexpensive and simple to manufacture. “We’ve innovated a low-cost and straightforward design for smart wearable devices using off-the-shelf components. Our work could enable other researchers to develop similar technologies without requiring costly materials or complex fabrication methods,” said Darren Lipomi, a nanoengineering professor who is a member of the Center for Wearable Sensors at UC San Diego and the study’s senior author.

The ‘language of glove’

The team built the device using a leather athletic glove and adhered nine stretchable sensors to the back at the knuckles: two on each finger and one on the thumb. The sensors are thin strips of a silicon-based polymer coated with a conductive carbon paint, and they are secured onto the glove with copper tape. Stainless steel thread connects each sensor to a low-power, custom-made printed circuit board attached to the back of the wrist.

The sensors change their electrical resistance when stretched or bent. This allows the glove to encode different letters of the American Sign Language alphabet based on the positions of all nine knuckles. A straight or relaxed knuckle is encoded as “0” and a bent knuckle is encoded as “1.” When the wearer signs a particular letter, the glove creates a nine-digit binary key that translates into that letter. For example, the code for the letter “A” (thumb straight, all other fingers curled) is “011111111,” while the code for “B” (thumb bent, all other fingers straight) is “100000000.” Engineers equipped the glove with an accelerometer and pressure sensor to distinguish between letters like “I” and “J,” whose gestures are different but generate the same nine-digit code.
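The encoding described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the firmware from the study: the function names, the 0.5 threshold on relative resistance change, and the ordering of the eight finger bits are assumptions made here for the example. Only the two codes quoted in the article are included in the lookup table, and the thumb is placed first because that is what the “A” and “B” examples imply.

```python
from typing import Optional, Sequence

# Hypothetical threshold: a relative resistance change above this value
# is treated as a bent knuckle. The study's actual value is not given here.
BEND_THRESHOLD = 0.5

# Only the two nine-digit keys quoted in the article. The first digit is the
# thumb; the order of the remaining eight digits is an assumption.
KEY_TO_LETTER = {
    "011111111": "A",  # thumb straight, all other knuckles bent
    "100000000": "B",  # thumb bent, all other knuckles straight
}


def knuckle_key(relative_changes: Sequence[float],
                threshold: float = BEND_THRESHOLD) -> str:
    """Turn nine sensor readings into a nine-digit binary key."""
    if len(relative_changes) != 9:
        raise ValueError("expected nine sensor readings")
    return "".join("1" if r > threshold else "0" for r in relative_changes)


def decode(key: str) -> Optional[str]:
    """Look up the letter for a key, or None if the key is not in the table."""
    return KEY_TO_LETTER.get(key)


def pick_by_motion(static_letter: str, moving_letter: str,
                   hand_is_moving: bool) -> str:
    """Choose between two letters that share a key, such as "I" and "J".

    hand_is_moving is a hypothetical flag standing in for whatever the
    accelerometer and pressure sensor report.
    """
    return moving_letter if hand_is_moving else static_letter


# Thumb relaxed, the other eight knuckles strongly bent.
readings = [0.1] + [0.9] * 8
print(knuckle_key(readings), decode(knuckle_key(readings)))
```

A lookup table keeps the decoding step cheap enough for a small low-power board, and letters that share a key need only one extra check against the motion data.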

The low-power printed circuit board on the glove converts the nine-digit key into a letter and then transmits it via Bluetooth to a smartphone or computer, where it appears on screen. The glove can wirelessly translate all 26 letters of the American Sign Language alphabet into text. Researchers also used the glove to control a virtual hand to sign letters in the American Sign Language alphabet.

Moving forward, the team is developing the next version of this glove — one that’s endowed with the sense of touch. The goal is to make a glove that could control either a virtual or robotic hand and then send tactile sensations back to the user’s hand, Lipomi said. “This work is a step toward that direction.”

Funding: This work was supported by the National Institutes of Health Director’s New Innovator Award (1DP2EB022358-01). An earlier prototype of the device was supported by the Air Force Office of Scientific Research Young Investigator Program (grant no. FA9550-13-1-0156). Additional support was provided by the Center for Wearable Sensors at the UC San Diego Jacobs School of Engineering and member companies Qualcomm, Sabic, Cubic, Dexcom, Honda, Samsung and Sony.

Source: Phil Roth – UCSD

Image Source: NeuroscienceNews.com image is credited to Timothy O’Connor/UC San Diego Jacobs School of Engineering.

Video Source: Video credited to JacobsSchoolNews.

Original Research: Full open access research for “The Language of Glove: Wireless gesture decoder with low-power and stretchable hybrid electronics” by Timothy F. O’Connor, Matthew E. Fach, Rachel Miller, Samuel E. Root, Patrick P. Mercier, and Darren J. Lipomi in PLOS ONE. Published online July 12, 2017. doi:10.1371/journal.pone.0179766

MLA: UCSD “Low-Cost Smart Glove Translates American Sign Language Alphabet.” NeuroscienceNews. NeuroscienceNews, 24 July 2017. <https://neurosciencenews.com/robotic-glove-sign-language-7166/>.

APA: UCSD (2017, July 24). Low-Cost Smart Glove Translates American Sign Language Alphabet. NeuroscienceNews. Retrieved July 24, 2017 from https://neurosciencenews.com/robotic-glove-sign-language-7166/

Chicago: UCSD “Low-Cost Smart Glove Translates American Sign Language Alphabet.” https://neurosciencenews.com/robotic-glove-sign-language-7166/ (accessed July 24, 2017).
