Physicians and biomedical engineers from Johns Hopkins report what they believe is the first successful effort to move the fingers of a mind-controlled artificial “arm” individually and independently of each other.

The proof-of-concept feat, described online this week in the Journal of Neural Engineering, represents a potential advance in technologies to restore refined hand function to those who have lost arms to injury or disease, the researchers say. The young man on whom the experiment was performed was not missing an arm or hand, but he was outfitted with a device that essentially took advantage of a brain-mapping procedure to bypass control of his own arm and hand.

“We believe this is the first time a person using a mind-controlled prosthesis has immediately performed individual digit movements without extensive training,” says senior author Nathan Crone, M.D., professor of neurology at the Johns Hopkins University School of Medicine. “This technology goes beyond available prostheses, in which the artificial digits, or fingers, moved as a single unit to make a grabbing motion, like one used to grip a tennis ball.”

For the experiment, the research team recruited a young man with epilepsy already scheduled to undergo brain mapping at The Johns Hopkins Hospital’s Epilepsy Monitoring Unit to pinpoint the origin of his seizures.

While brain recordings were made using electrodes surgically implanted for clinical reasons, the signals were also used to control a modular prosthetic limb developed by the Johns Hopkins University Applied Physics Laboratory.

Prior to connecting the prosthesis, the researchers mapped and tracked the specific parts of the subject’s brain responsible for moving each finger, then programmed the prosthesis to move the corresponding finger.

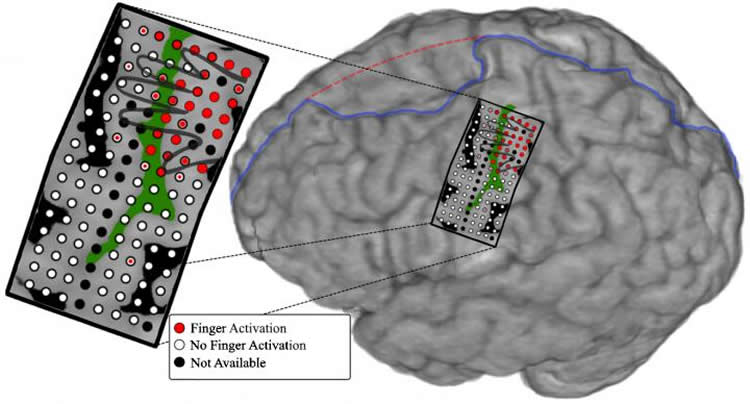

First, the patient’s neurosurgeon placed an array of 128 electrode sensors, all on a single rectangular sheet of film the size of a credit card, on the part of the man’s brain that normally controls hand and arm movements. Each sensor measured a circle of brain tissue 1 millimeter in diameter.

A computer program developed by the Johns Hopkins team prompted the man to move individual fingers on command and recorded which parts of the brain “lit up” when each sensor detected an electric signal.

In addition to collecting data on the parts of the brain involved in movement, the researchers measured electrical brain activity involved in tactile sensation. To do this, the subject was outfitted with a glove with small, vibrating buzzers in the fingertips, which went off individually in each finger. The researchers measured the resulting electrical activity in the brain for each finger.

After the motor and sensory data were collected, the researchers programmed the prosthetic arm to move the corresponding fingers based on which part of the brain was active. The researchers turned on the prosthetic arm, which was wired to the patient through the brain electrodes, and asked the subject to “think” about individually moving his thumb, index, middle, ring and pinkie fingers. The electrical activity generated in the brain moved the fingers.

“The electrodes used to measure brain activity in this study gave us better resolution of a large region of cortex than anything we’ve used before and allowed for more precise spatial mapping in the brain,” says Guy Hotson, graduate student and lead author of the study. “This precision is what allowed us to separate the control of individual fingers.”

Initially, the mind-controlled limb had an accuracy of 76 percent. Once the researchers coupled the ring and pinkie fingers together, the accuracy increased to 88 percent.

“The part of the brain that controls the pinkie and ring fingers overlaps, and most people move the two fingers together,” says Crone. “It makes sense that coupling these two fingers improved the accuracy.”

The subject uses his mind to move individual fingers on a prosthetic arm. Credit: Courtesy of the Journal of Neural Engineering.

The researchers note there was no pre-training required for the subject to gain this level of control, and the entire experiment took less than two hours.

Crone cautions that application of this technology to those actually missing limbs is still some years off and will be costly, requiring extensive mapping and computer programming. According to the Amputee Coalition, over 100,000 people living in the U.S. have amputated hands or arms, and most could potentially benefit from such technology.

Additional authors on the study include David McMullen, Matthew Fifer, William Anderson and Nitish Thakor of Johns Hopkins Medicine and Matthew Johannes, Kapil Katyal, Matthew Para, Robert Armiger and Brock Wester of the Johns Hopkins Applied Physics Laboratory.

Funding: This study was funded by the National Institute of Neurological Disorders and Stroke (grant number 1R01NS088606-01).

Source: Johns Hopkins Medicine

Image Credit: The image is credited to Guy Hotson.

Video Source: The video is available at the Johns Hopkins Medicine YouTube page. Courtesy of the Journal of Neural Engineering.

Original Research: Abstract for “Individual finger control of a modular prosthetic limb using high-density electrocorticography in a human subject” by Guy Hotson, David P McMullen, Matthew S Fifer, Matthew S Johannes, Kapil D Katyal, Matthew P Para, Robert Armiger, William S Anderson, Nitish V Thakor, Brock A Wester and Nathan E Crone in Journal of Neural Engineering. Published online February 10 2016 doi:10.1088/1741-2560/13/2/026017

Abstract

Individual finger control of a modular prosthetic limb using high-density electrocorticography in a human subject

Objective. We used native sensorimotor representations of fingers in a brain–machine interface (BMI) to achieve immediate online control of individual prosthetic fingers.

Approach. Using high gamma responses recorded with a high-density electrocorticography (ECoG) array, we rapidly mapped the functional anatomy of cued finger movements. We used these cortical maps to select ECoG electrodes for a hierarchical linear discriminant analysis classification scheme to predict: (1) if any finger was moving, and, if so, (2) which digit was moving. To account for sensory feedback, we also mapped the spatiotemporal activation elicited by vibrotactile stimulation. Finally, we used this prediction framework to provide immediate online control over individual fingers of the Johns Hopkins University Applied Physics Laboratory modular prosthetic limb.
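The two-stage prediction described above can be illustrated with a minimal sketch. It is not the authors' implementation: a nearest-centroid rule stands in for linear discriminant analysis (the two coincide only under equal class priors and an identity shared covariance), the feature vectors are made-up numbers rather than ECoG high gamma responses, and every name here (`NearestCentroid`, `HierarchicalDecoder`, `synthetic_trial`) is hypothetical.

```python
import math
import random

FINGERS = ["thumb", "index", "middle", "ring", "pinky"]


def centroid(rows):
    """Mean feature vector of a list of equal-length vectors."""
    return [sum(column) / len(rows) for column in zip(*rows)]


class NearestCentroid:
    """Simplified stand-in for LDA: pick the label with the closest class mean."""

    def fit(self, X, y):
        groups = {}
        for features, label in zip(X, y):
            groups.setdefault(label, []).append(features)
        self.centroids = {label: centroid(rows) for label, rows in groups.items()}
        return self

    def predict(self, features):
        return min(self.centroids,
                   key=lambda lab: math.dist(features, self.centroids[lab]))


class HierarchicalDecoder:
    """Stage 1: is any finger moving? Stage 2 (only if so): which finger?"""

    def fit(self, X, y):
        # y holds "rest" or one of the finger names.
        moving = ["rest" if label == "rest" else "move" for label in y]
        self.detector = NearestCentroid().fit(X, moving)
        finger_trials = [(f, lab) for f, lab in zip(X, y) if lab != "rest"]
        self.finger_clf = NearestCentroid().fit(
            [f for f, _ in finger_trials], [lab for _, lab in finger_trials])
        return self

    def predict(self, features):
        if self.detector.predict(features) == "rest":
            return "rest"
        return self.finger_clf.predict(features)


def synthetic_trial(label, rng, amplitude=5.0, noise=0.2):
    """Invented data: one pretend 'electrode' per finger, active only for it."""
    return [(amplitude if label == finger else 0.0) + rng.gauss(0.0, noise)
            for finger in FINGERS]


rng = random.Random(0)  # seeded so the toy example is repeatable
train_y = [label for label in ["rest"] + FINGERS for _ in range(20)]
train_X = [synthetic_trial(label, rng) for label in train_y]
decoder = HierarchicalDecoder().fit(train_X, train_y)

print(decoder.predict([0.1, 0.0, 4.8, 0.2, 0.0]))   # middle-finger-like pattern
print(decoder.predict([0.1, -0.1, 0.0, 0.2, 0.0]))  # rest-like pattern
```

Splitting detection from finger identification means the second classifier is only ever trained and queried on movement trials, which mirrors the "if any finger was moving, and, if so, which digit" structure of the scheme.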

Main results. The balanced classification accuracy for detection of movements during the online control session was 92% (chance: 50%). At the onset of movement, finger classification was 76% (chance: 20%), and 88% (chance: 25%) if the pinky and ring finger movements were coupled. Balanced accuracy of fully flexing the cued finger was 64%, and 77% had we combined pinky and ring commands. Offline decoding yielded a peak finger decoding accuracy of 96.5% (chance: 20%) when using an optimized selection of electrodes. Offline analysis demonstrated significant finger-specific activations throughout sensorimotor cortex. Activations either prior to movement onset or during sensory feedback led to discriminable finger control.
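The chance levels quoted above follow from the number of classes: 1/2 for moving versus not moving, 1/5 for five fingers, and 1/4 once pinky and ring are treated as one class. The sketch below shows that arithmetic and one common definition of balanced accuracy (the mean of per-class recall). The label lists are invented toy values, not data from the study, and the helper names are hypothetical.

```python
from collections import defaultdict


def balanced_accuracy(true_labels, predicted_labels):
    """Mean of per-class recall, so each class counts equally."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for truth, guess in zip(true_labels, predicted_labels):
        totals[truth] += 1
        hits[truth] += truth == guess
    return sum(hits[c] / totals[c] for c in totals) / len(totals)


def chance_level(labels):
    """Expected accuracy of uniform random guessing among the classes present."""
    return 1 / len(set(labels))


def couple(labels, group=("ring", "pinky"), name="ring+pinky"):
    """Relabel the grouped fingers as a single class."""
    return [name if label in group else label for label in labels]


# Toy labels (invented): the only errors are ring/pinky confusions.
truth = ["thumb", "thumb", "index", "index", "middle", "middle",
         "ring", "ring", "pinky", "pinky"]
guess = ["thumb", "thumb", "index", "index", "middle", "middle",
         "ring", "pinky", "ring", "pinky"]

separate = balanced_accuracy(truth, guess)
coupled = balanced_accuracy(couple(truth), couple(guess))
print(f"five classes: {separate:.2f} (chance {chance_level(truth):.2f})")
print(f"four classes: {coupled:.2f} (chance {chance_level(couple(truth)):.2f})")
```

In this toy case, merging the two classes removes every ring/pinky confusion while raising the chance level from 0.20 to 0.25, which is why accuracies for the coupled and uncoupled cases are reported against different baselines.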

Significance. Our results demonstrate the ability of ECoG-based BMIs to leverage the native functional anatomy of sensorimotor cortical populations to immediately control individual finger movements in real time.
