Summary: Artificial intelligence technology is able to objectively differentiate between those with PTSD and those without by analyzing speech samples, with 89.1% accuracy.

Source: NYU Langone Health

A specially designed computer program can help diagnose post-traumatic stress disorder (PTSD) in veterans by analyzing their voices, a new study finds.

Published online April 22 in the journal Depression and Anxiety, the study found that an artificial intelligence tool can distinguish – with 89 percent accuracy – between the voices of those with and without PTSD.

“Our findings suggest that speech-based characteristics can be used to diagnose this disease, and with further refinement and validation, may be employed in the clinic in the near future,” says senior study author Charles R. Marmar, MD, the Lucius N. Littauer Professor and chair of the Department of Psychiatry at NYU School of Medicine.

More than 70 percent of adults worldwide experience a traumatic event at some point in their lives, with up to 12 percent of people in some struggling countries suffering from PTSD. Those with the condition experience strong, persistent distress when reminded of a triggering event.

The study authors say that a PTSD diagnosis is most often determined by clinical interview or a self-report assessment, both inherently prone to biases. This has led to efforts to develop objective, measurable, physical markers of PTSD progression, much like laboratory values for medical conditions, but progress has been slow.

Learning How to Learn

In the current study, the research team used a statistical machine-learning technique called random forests, which “learns” to classify individuals from labeled examples. Such AI programs build decision rules and mathematical models whose accuracy tends to improve as the amount of training data grows.
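To make the idea concrete, here is a minimal sketch of the random forest principle in plain Python: many simple classifiers are each trained on a random resample of the examples and a random subset of the features, and their votes are combined. It is a simplified illustration, not the study's implementation. Each "tree" here is only a one-split decision stump, and the three feature columns and all values are hypothetical toy data.

```python
import random
from collections import Counter

def fit_stump(rows, labels, feature_ids):
    """Find the single (feature, threshold) split with the fewest training errors."""
    best = None
    for f in feature_ids:
        for threshold in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= threshold]
            right = [y for r, y in zip(rows, labels) if r[f] > threshold]
            if not left or not right:
                continue  # a split must put examples on both sides
            left_label = Counter(left).most_common(1)[0][0]
            right_label = Counter(right).most_common(1)[0][0]
            errors = (sum(y != left_label for y in left)
                      + sum(y != right_label for y in right))
            if best is None or errors < best[0]:
                best = (errors, f, threshold, left_label, right_label)
    if best is None:
        # No usable split in this resample: always predict the majority label.
        majority = Counter(labels).most_common(1)[0][0]
        return (0, float("inf"), majority, majority)
    return best[1:]

def fit_forest(rows, labels, n_trees=25, n_features=2, seed=0):
    """Train stumps on bootstrap resamples, each seeing a random feature subset."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(rows)) for _ in rows]  # sample with replacement
        feats = rng.sample(range(len(rows[0])), n_features)
        forest.append(fit_stump([rows[i] for i in idx],
                                [labels[i] for i in idx], feats))
    return forest

def predict(forest, row):
    """Majority vote over all stumps in the forest."""
    votes = Counter(l if row[f] <= t else r for f, t, l, r in forest)
    return votes.most_common(1)[0][0]

# Hypothetical toy data: [pitch_variability, speech_rate, unrelated_noise]
rows = [
    [0.10, 0.20, 0.2], [0.20, 0.15, 0.4], [0.25, 0.30, 0.6], [0.30, 0.10, 0.8],
    [0.70, 0.80, 0.2], [0.80, 0.75, 0.4], [0.85, 0.90, 0.6], [0.90, 0.70, 0.8],
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]  # 1 = case, 0 = control (toy labels)

forest = fit_forest(rows, labels)
print(predict(forest, [0.05, 0.05, 0.5]), predict(forest, [0.95, 0.95, 0.5]))
```

A production random forest grows full decision trees rather than single splits, but the two sources of randomness (resampled examples and resampled features) and the final vote are the same ingredients.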

The researchers first recorded standard, hours-long diagnostic interviews, known as the Clinician-Administered PTSD Scale (CAPS), of 53 Iraq and Afghanistan veterans with military-service-related PTSD, as well as those of 78 veterans without the disease. The recordings were then fed into voice software from SRI International – the institute that also invented Siri – to yield a total of 40,526 speech-based features captured in short spurts of talk, which the team’s AI program sifted through for patterns.

The random forest program linked patterns of specific voice features with PTSD, including less clear speech and a lifeless, metallic tone, both of which had long been reported anecdotally as helpful in diagnosis. While the current study did not explore the disease mechanisms behind PTSD, the theory is that traumatic events change brain circuits that process emotion and muscle tone, which affects a person’s voice.

Moving forward, the research team plans to train the AI voice tool with more data, further validate it on an independent sample, and apply for government approval to use the tool clinically.

“Speech is an attractive candidate for use in an automated diagnostic system, perhaps as part of a future PTSD smartphone app, because it can be measured cheaply, remotely, and non-intrusively,” says lead author Adam Brown, PhD, adjunct assistant professor in the Department of Psychiatry at NYU School of Medicine.

“The speech analysis technology used in the current study on PTSD detection falls into the range of capabilities included in our speech analytics platform called SenSay Analytics™,” says Dimitra Vergyri, director of SRI International’s Speech Technology and Research (STAR) Laboratory. “The software analyzes words – in combination with frequency, rhythm, tone, and articulatory characteristics of speech – to infer the state of the speaker, including emotion, sentiment, cognition, health, mental health and communication quality. The technology has been involved in a series of industry applications visible in startups like Oto, Ambit and Decoded Health.”

Along with Marmar and Brown, authors of the study from the Department of Psychiatry were Meng Qian, Eugene Laska, Carole Siegel, Meng Li, and Duna Abu-Amara. Study authors from SRI International were Andreas Tsiartas, Dimitra Vergyri, Colleen Richey, Jennifer Smith, and Bruce Knoth. Brown is also an associate professor of psychology at the New School for Social Research.

Funding: The study was supported by the U.S. Army Medical Research & Acquisition Activity (USAMRAA) and Telemedicine & Advanced Technology Research Center (TATRC) grant W81XWH-11-C-0004, as well as by the Steven and Alexandra Cohen Foundation.

Media Contacts:

Jim Mandler – NYU Langone Health

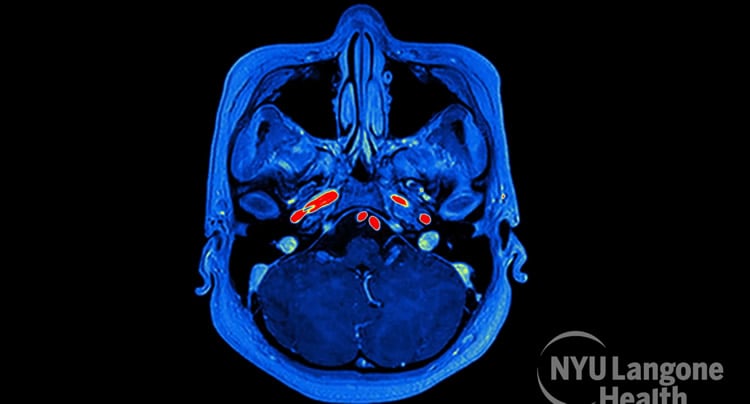

Image Source:

The image is adapted from the NYU Langone Health news release.

Original Research: Closed access

“Speech‐based markers for posttraumatic stress disorder in US veterans”

Charles R. Marmar, Adam D. Brown, Meng Qian, Eugene Laska, Carole Siegel, Meng Li, Duna Abu‐Amara, Andreas Tsiartas, Colleen Richey, Jennifer Smith, Bruce Knoth, Dimitra Vergyri. Depression and Anxiety 22 APR 2019 doi:10.1002/da.22890

Abstract

Background

The diagnosis of posttraumatic stress disorder (PTSD) is usually based on clinical interviews or self‐report measures. Both approaches are subject to under‐ and over‐reporting of symptoms. An objective test is lacking. We have developed a classifier of PTSD based on objective speech‐marker features that discriminate PTSD cases from controls.

Methods

Speech samples were obtained from warzone‐exposed veterans, 52 cases with PTSD and 77 controls, assessed with the Clinician‐Administered PTSD Scale. Individuals with major depressive disorder (MDD) were excluded. Audio recordings of clinical interviews were used to obtain 40,526 speech features which were input to a random forest (RF) algorithm.

Results

The selected RF used 18 speech features and the receiver operating characteristic curve had an area under the curve (AUC) of 0.954. At a probability of PTSD cut point of 0.423, Youden’s index was 0.787, and overall correct classification rate was 89.1%. The probability of PTSD was higher for markers that indicated slower, more monotonous speech, less change in tonality, and less activation. Depression symptoms, alcohol use disorder, and TBI did not meet statistical tests to be considered confounders.
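The reported metrics are linked by their definitions: Youden's index is J = sensitivity + specificity − 1, and the overall correct classification rate is (true positives + true negatives) / total. As a hedged illustration, the sketch below searches for confusion-matrix counts that reproduce the rounded figures above, assuming the group sizes given in Methods (52 cases, 77 controls). The function names are hypothetical, and the resulting counts are an inference from rounded values, not numbers reported in the abstract.

```python
def classification_summary(tp, tn, n_cases, n_controls):
    """Sensitivity, specificity, Youden's J, and accuracy from correct counts."""
    sensitivity = tp / n_cases
    specificity = tn / n_controls
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "youden_j": sensitivity + specificity - 1,
        "accuracy": (tp + tn) / (n_cases + n_controls),
    }

def consistent_counts(n_cases, n_controls, accuracy, youden_j, tol=0.0005):
    """List every (tp, tn) pair that matches both figures to three decimals."""
    matches = []
    for tp in range(n_cases + 1):
        for tn in range(n_controls + 1):
            s = classification_summary(tp, tn, n_cases, n_controls)
            if (abs(s["accuracy"] - accuracy) < tol
                    and abs(s["youden_j"] - youden_j) < tol):
                matches.append((tp, tn))
    return matches

# Group sizes from Methods; accuracy and J from Results.
matches = consistent_counts(52, 77, accuracy=0.891, youden_j=0.787)
for tp, tn in matches:
    s = classification_summary(tp, tn, 52, 77)
    print(tp, tn, round(s["sensitivity"], 3), round(s["specificity"], 3))
```

Under these assumptions the search finds a single pair, 47 of 52 cases and 68 of 77 controls correctly classified, which would correspond to a sensitivity of about 0.904 and a specificity of about 0.883.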

Conclusions

This study demonstrates that a speech‐based algorithm can objectively differentiate PTSD cases from controls. The RF classifier had a high AUC. Further validation in an independent sample and appraisal of the classifier to identify those with MDD only compared with those with PTSD comorbid with MDD is required.