Summary: Using augmented reality, researchers discover how rats recalibrate learned relationships among landmarks, speed, distance and time to create a locational ‘map’ in the brain.

Source: Johns Hopkins University.

Before the age of GPS, humans had to orient themselves not with on-screen arrows pointing down an exact street but by memorizing landmarks and using learned relationships among time, speed and distance. They had to know, for instance, that 10 minutes of brisk walking might equate to half a mile traveled.

A new Johns Hopkins study found that rats recalibrate these learned relationships continually, from moment to moment.

The findings, to be published Feb. 11 in Nature, provide insight into how the brain creates a map inside one’s head.

“The hippocampus and neighboring regions in the brain help us figure out where we are in the world,” says Manu Madhav, a postdoctoral associate in the Johns Hopkins Zanvyl Krieger Mind/Brain Institute and one of the study’s primary authors. “By studying the firing patterns of neurons in these areas, we can better understand how we map our location.”

The brain receives two types of cues that aid in this mapping. The first is external landmarks, like the pink house at the end of the street or a discolored floor tile, that a person remembers as marking a certain location or distance.

“The second type of cue is from one’s self-motion through the world, like having an internal speedometer or a step-counter,” says Ravi Jayakumar, a primary author on the paper and Ph.D. candidate in the Mechanical Engineering Department at Johns Hopkins. “By calculating distance over time, based on your speed or by adding up your steps, your brain can estimate how far you’ve gone even when you don’t have landmarks to rely on.” This process is called path integration.
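As a rough illustration of the idea, path integration can be sketched as adding up speed multiplied by elapsed time. The example below is a minimal sketch, not the study's model: the function name, the one-second sampling interval and the walking speed of 1.34 meters per second are all assumptions chosen for illustration.

```python
def integrate_path(speeds_m_per_s, dt_s):
    """Estimate distance travelled by summing speed x time for each sample."""
    distance_m = 0.0
    for speed in speeds_m_per_s:
        distance_m += speed * dt_s
    return distance_m


# Ten minutes of brisk walking at an assumed 1.34 m/s, sampled once per second.
samples = [1.34] * 600
print(f"{integrate_path(samples, 1.0):.0f} m")  # about 804 m, close to half a mile
```

No landmark appears anywhere in the calculation, which is why small errors in the assumed speed would accumulate over a long walk.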

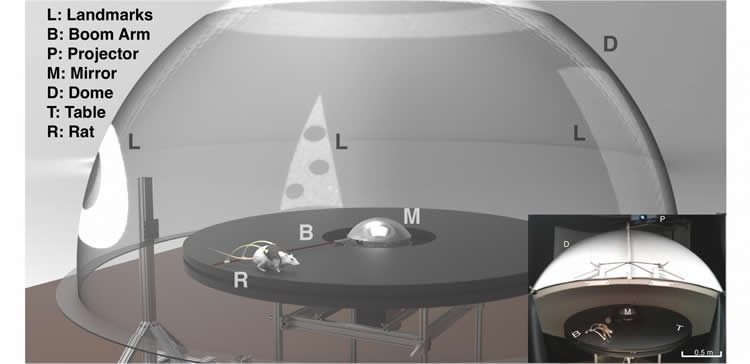

But if you walk for 10 minutes, is your estimate of how far you’ve traveled always the same, or is it molded by your recent experience of the world? To investigate this, the research team studied rats running laps around a circular track. They projected various shapes onto a planetarium-like dome over the track to act as landmarks and moved the shapes either in the same direction as the rats or the opposite way. As in a computer game, the landmark speed depended on how fast the animal was running at each moment, creating an augmented reality environment where rats perceived themselves as running slower or faster than they actually were.
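One way to picture this setup is to write the landmark motion as a fixed fraction of the rat's own velocity. The sketch below is a toy under stated assumptions, not the team's software: the `gain` parameter and both function names are hypothetical, chosen so that a gain of 1 leaves the landmarks still, a gain below 1 moves them with the rat, and a gain above 1 moves them against it.

```python
def landmark_velocity(rat_velocity, gain):
    """Velocity of the projected landmarks along the track (assumed rule).

    Positive values mean the landmarks move in the rat's running direction.
    """
    return (1.0 - gain) * rat_velocity


def apparent_velocity(rat_velocity, gain):
    """Velocity of the rat measured relative to the moving landmarks."""
    return rat_velocity - landmark_velocity(rat_velocity, gain)


rat_speed = 20.0  # hypothetical running speed, arbitrary units
for gain in (0.5, 1.0, 1.5):
    print(gain, landmark_velocity(rat_speed, gain), apparent_velocity(rat_speed, gain))
```

Under this assumed rule the apparent velocity works out to `gain * rat_velocity`, so landmarks that travel with the rat make it seem to run slower and landmarks that travel against it make it seem to run faster.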

During these experiments, the research team studied the rats’ ‘place cells,’ hippocampal neurons that fire when an animal visits a specific area in a familiar environment. When the rat thinks that it has run one lap and returned to the same location, a given place cell fires again. By looking at these neurons’ firing patterns, the researchers determined how fast the rat thought it was running through the world.
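The reasoning can be sketched in a few lines: if a place cell fires once per lap as the rat perceives it, then the real distance between successive firings shows how the rat's perceived speed compares with its actual speed. This is a simplified illustration, not the analysis used in the paper; the function name and the sample positions are hypothetical.

```python
def estimate_speed_ratio(firing_positions_laps):
    """Estimate perceived speed / actual speed from one place cell.

    firing_positions_laps: cumulative real track position, in laps, at each
    successive firing of the cell's place field (hypothetical input format).
    """
    if len(firing_positions_laps) < 2:
        raise ValueError("need at least two firings to measure a spacing")
    intervals = len(firing_positions_laps) - 1
    real_laps_per_perceived_lap = (
        firing_positions_laps[-1] - firing_positions_laps[0]
    ) / intervals
    return 1.0 / real_laps_per_perceived_lap


# The cell fires again after every 0.8 real laps, so the rat behaves as if
# it were covering ground about 1.25 times faster than it really is.
print(round(estimate_speed_ratio([0.0, 0.8, 1.6, 2.4]), 2))
```

A ratio of 1 would mean the cell fires at the same real location on every lap, as expected when the landmarks are stationary.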

When the researchers stopped projecting the shapes, leaving the rats with only their self-motion cues (e.g., their internal speedometer) to guide them, the place cell firing revealed that the rats continued to think that they were running faster (or slower) than they actually were. The experience of the rotating landmarks in the augmented reality environment, the researchers say, caused a long-lasting change in the animal’s perception of how fast and how far it was moving with each step.

“It’s always been known that animals have to recalibrate their self-motion cues during development; for example, an animal’s legs get longer as it grows, and that affects their measurement of how far their steps can take them,” says Madhav. “However, our lab showed that recalibration happens on a minute-by-minute basis even in adulthood. We’re constantly updating the model of how our physical movements through the world update our location in the internal map in our head.”

The study’s findings add to the evidence on how memories, inherently grounded in time and space, are formed. “We know that the hippocampus in humans is involved not only in spatial mapping, but it also is crucial for forming conscious memories of our daily life experiences,” says James Knierim, a neuroscientist at Johns Hopkins who led the study along with mechanical engineer Noah Cowan, also of the university. Because spatial disorientation and memory loss are among the first symptoms of Alzheimer’s disease, which destroys hippocampal neurons in its earliest stages, these findings can further research efforts to understand the causes of, and potential cures for, Alzheimer’s and other neurodegenerative diseases.

“As an engineer, I find it particularly exciting that our interdisciplinary approach can be used to understand some of the most complex cognitive processing systems in the brain,” adds Cowan.

Looking forward, the research team hopes to use the same augmented reality experimental setup to study how other regions of the brain coordinate their activity with the hippocampus to form a coherent internal map of the world.

Funding: This work was supported by the Johns Hopkins University Discovery Award, the Johns Hopkins Science of Learning Institute Award, the Johns Hopkins Kavli Neuroscience Discovery Institute Postdoctoral Distinguished Fellowship and the Johns Hopkins Mechanical Engineering Departmental Fellowship.

Source: Chanapa Tantibanchachai – Johns Hopkins University

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to Ravikrishnan Jayakumar.

Original Research: Abstract for “Recalibration of path integration in hippocampal place cells” by Ravikrishnan P. Jayakumar, Manu S. Madhav, Francesco Savelli, Hugh T. Blair, Noah J. Cowan & James J. Knierim in Nature. Published February 11, 2019.

doi:10.3038/s41586-019-0939-3

Johns Hopkins University. “Rats in Augmented Reality Help Show How the Brain Determines Location.” NeuroscienceNews. NeuroscienceNews, 11 February 2019. <https://neurosciencenews.com/location-augmented-reality-10719/>.

Abstract

Recalibration of path integration in hippocampal place cells

Hippocampal place cells are spatially tuned neurons that serve as elements of a ‘cognitive map’ in the mammalian brain. To detect the animal’s location, place cells are thought to rely upon two interacting mechanisms: sensing the position of the animal relative to familiar landmarks and measuring the distance and direction that the animal has travelled from previously occupied locations. The latter mechanism—known as path integration—requires a finely tuned gain factor that relates the animal’s self-movement to the updating of position on the internal cognitive map, as well as external landmarks to correct the positional error that accumulates. Models of hippocampal place cells and entorhinal grid cells based on path integration treat the path-integration gain as a constant but behavioural evidence in humans suggests that the gain is modifiable. Here we show, using physiological evidence from rat hippocampal place cells, that the path-integration gain is a highly plastic variable that can be altered by persistent conflict between self-motion cues and feedback from external landmarks. In an augmented-reality system, visual landmarks were moved in proportion to the movement of a rat on a circular track, creating continuous conflict with path integration. Sustained exposure to this cue conflict resulted in predictable and prolonged recalibration of the path-integration gain, as estimated from the place cells after the landmarks were turned off. We propose that this rapid plasticity keeps the positional update in register with the movement of the rat in the external world over behavioural timescales. These results also demonstrate that visual landmarks not only provide a signal to correct cumulative error in the path-integration system but also rapidly fine-tune the integration computation itself.