Summary: Researchers have conducted a study of an iPhone app that screens young children for signs of autism. The study found the app was easy for parents to use and yielded reliable scientific data.

Source: Duke University.

A Duke study of an iPhone app to screen young children for signs of autism has found that the app is easy to use, welcomed by caregivers and good at producing reliable scientific data.

The study, described June 1 in the open-access journal npj Digital Medicine, points the way to broader, easier access to screening for autism and other neurodevelopmental disorders.

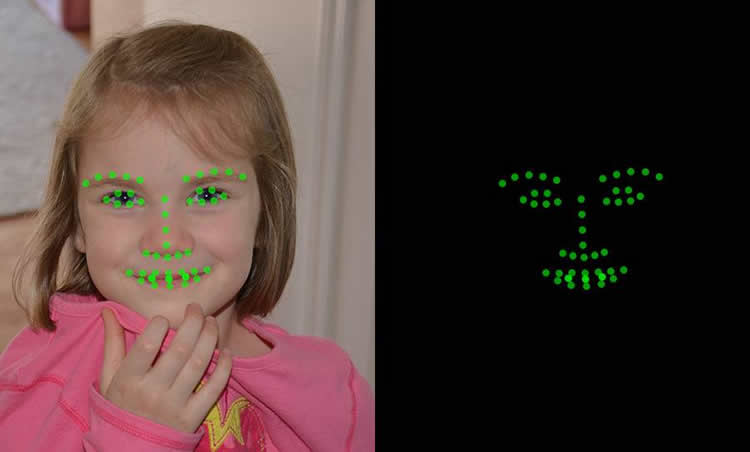

The app first administers caregiver consent forms and survey questions. It then uses the phone’s front-facing (‘selfie’) camera to record videos of young children’s reactions while they watch movies on the device’s screen that are designed to elicit autism risk behaviors, such as characteristic patterns of emotion and attention.

The videos of the child’s reactions are uploaded to the study’s servers, where automatic behavioral coding software tracks the movement of landmarks on the child’s face and quantifies the child’s emotions and attention. For example, in response to a short movie of bubbles floating across the screen, the coding algorithm looks for facial movements that indicate joy.
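The article does not describe the internals of the coding software. As a rough illustration only, the sketch below assumes that an upstream face tracker and expression classifier (not shown, and hypothetical) have already produced, for each video frame, whether a face was found, a "joy" probability, and a head-rotation angle. It then summarizes a clip as the fraction of usable frames showing joy and the fraction in which the child appears to face the screen. The `Frame` type, `summarize_clip` function, field names and both thresholds are illustrative assumptions, not the study's method.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Frame:
    # Hypothetical per-frame output of an upstream face tracker / expression classifier.
    face_found: bool
    joy_prob: float = 0.0      # assumed classifier probability that the expression is "joy"
    head_yaw_deg: float = 0.0  # assumed left/right head rotation; 0 means facing the screen


def summarize_clip(frames: Sequence[Frame],
                   joy_threshold: float = 0.5,
                   max_yaw_deg: float = 20.0) -> Optional[dict]:
    """Summarize joy and attention over the frames in which a face was detected.

    Returns None when no frame contains a face, i.e. the clip yields no usable data.
    Both thresholds are illustrative assumptions, not values from the study.
    """
    valid = [f for f in frames if f.face_found]
    if not valid:
        return None
    joy = sum(1 for f in valid if f.joy_prob >= joy_threshold)
    attending = sum(1 for f in valid if abs(f.head_yaw_deg) <= max_yaw_deg)
    return {
        "usable_frames": len(valid),
        "joy_fraction": joy / len(valid),
        "attention_fraction": attending / len(valid),
    }


if __name__ == "__main__":
    # Made-up frames: one frame has no detectable face and is ignored.
    clip = [Frame(True, 0.8, 5.0), Frame(True, 0.2, 35.0), Frame(False), Frame(True, 0.6, -10.0)]
    # Here 3 frames are usable; joy_fraction and attention_fraction are both 2/3.
    print(summarize_clip(clip))
```

Summarizing per clip, rather than per frame, is one simple way to turn frame-level signals into a single number (such as "how often the child smiled at the bubbles") that can be compared across children.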

In this study, children whose parents rated their child as having a high number of autism symptoms showed less frequent expressions of joy in response to the bubbles.

Autism screening in young children is presently done in clinical settings, rather than the child’s natural environment, and highly trained people are needed to both administer the test and analyze the results. “That’s not scalable,” said New York University’s Helen Egger, M.D., one of the co-leaders of the study.

This study, from informed consent to data collection and preliminary analysis, was conducted with a free app available from Apple’s App Store and built on Apple’s open-source ResearchKit platform.

In one year, there were more than 10,000 downloads of the app, and 1,756 families with children aged one to six years participated in the study. Parents completed 5,618 surveys and uploaded 4,441 videos. Usable data were collected on 88 percent of the uploaded videos, demonstrating for the first time the feasibility of this type of tool for observing and coding behavior in natural environments.
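The reported counts can be related with simple arithmetic. The sketch below uses only figures given in the article and in the study abstract (which reports 87.6 percent usable videos, rounded to 88 percent above); the derived quantities, averages per participating family and an approximate number of usable videos, are back-of-the-envelope estimates and were not reported by the study. The function name `derived_stats` is illustrative.

```python
# Figures as reported in the article and the study abstract.
FAMILIES = 1756
SURVEYS = 5618
VIDEOS = 4441
USABLE_RATE = 0.876  # "87.6% of the uploaded videos" (abstract); the article rounds to 88 percent


def derived_stats() -> dict:
    """Back-of-the-envelope figures derived from the reported counts (not reported by the study)."""
    return {
        # Averages over all participating families.
        "surveys_per_family": SURVEYS / FAMILIES,
        "videos_per_family": VIDEOS / FAMILIES,
        # 87.6% is itself rounded, so this count is only approximate (within a few videos).
        "approx_usable_videos": round(VIDEOS * USABLE_RATE),
    }


if __name__ == "__main__":
    for name, value in derived_stats().items():
        print(f"{name}: {value:.2f}" if isinstance(value, float) else f"{name}: {value}")
```

Run as a script, this prints about 3.20 surveys and 2.53 videos per family and roughly 3,890 usable videos.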

“This demonstrates the feasibility of this approach,” said Geraldine Dawson, Ph.D., Director of the Duke Center for Autism and Brain Development and co-leader of the study. “Many caregivers were willing to participate, the data were high quality and the video analysis algorithms produced results consistent with the scoring we produce in our autism program here at Duke.”

An app-based approach can better reach underserved areas and makes it much easier to track an individual child’s changes over time, said Guillermo Sapiro, Edmund T. Pratt, Jr. School Professor of Electrical and Computer Engineering at Duke and a co-leader of the study.

“This technology has the potential to transform how we screen and monitor children’s development,” Sapiro said.

The study ran for 12 months. The entire assessment took about 20 minutes to complete, only a few minutes of which involved the child.

The app also included a widely used autism screening questionnaire. Based on the questionnaire responses, participating families received feedback from the app about the child’s apparent risk for autism. If parents reported a high level of autism symptoms on the questionnaire, they were encouraged to seek further consultation with their health care providers.
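The article does not name the questionnaire or describe how its feedback was computed. A minimal sketch of threshold-based feedback, assuming each answer is scored as one point when it indicates a concern and that a single cutoff separates "higher" from "lower" reported symptoms, might look like this. The function `feedback`, the scoring scheme and `HYPOTHETICAL_CUTOFF` are illustrative only and are not the app's logic or any validated instrument's scoring rules.

```python
from typing import Sequence

# HYPOTHETICAL cutoff for illustration only; real screening questionnaires define
# their own item scoring and validated cutoffs.
HYPOTHETICAL_CUTOFF = 8


def feedback(item_flags: Sequence[bool], cutoff: int = HYPOTHETICAL_CUTOFF) -> str:
    """Map questionnaire answers (True = answer indicates a concern) to a feedback message."""
    score = sum(item_flags)  # each concerning answer counts as one point (assumed scoring)
    if score >= cutoff:
        return ("Higher number of reported symptoms: consider discussing your child's "
                "development with your health care provider.")
    return "Lower number of reported symptoms."


if __name__ == "__main__":
    print(feedback([True] * 9 + [False] * 11))  # 9 concerning answers meets the assumed cutoff
    print(feedback([True] * 2 + [False] * 18))  # 2 concerning answers falls below it
```

Keeping the cutoff as a parameter makes clear that the threshold is a property of the chosen instrument rather than of the feedback code itself.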

Co-Principal Investigators of the study included Helen Egger, now at New York University and adjunct member of the Duke faculty; Geraldine Dawson and Guillermo Sapiro of Duke; and Ricky Bloomfield, now at Apple, Inc. The team included Kimberly Carpenter, Jordan Hashemi, Steven Espinosa, high-school students, undergraduate students, graduate students, post-docs and software developers.

Creation of the app and the research project were supported by the Duke Institute for Health Information, the Information Initiative at Duke, the Duke Endowment, the Coulter Foundation, the Psychiatry Research Incentive and Development Grant Program, the Duke Education and Human Development Incubator, the Duke University School of Medicine Primary Care Leadership Track, Bass Connections, the Duke Office of the Vice Provost for Research, the National Science Foundation, the Department of Defense, the Office of the Assistant Secretary of Defense for Research and Engineering, and the NIH.

Funding: Grant funding includes NSF-CCF-13-18168, NGA HM0177-13-1-0007 and HM04761610001, NICHD 1P50HD093074-01, ONR N000141210839, and ARO W911NF-16-1-0088.


Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to Duke University.

Original Research: Open access research for “Automatic emotion and attention analysis of young children at home: a ResearchKit autism feasibility study” by Helen L. Egger, Geraldine Dawson, Jordan Hashemi, Kimberly L. H. Carpenter, Steven Espinosa, Kathleen Campbell, Samuel Brotkin, Jana Schaich-Borg, Qiang Qiu, Mariano Tepper, Jeffrey P. Baker, Richard A. Bloomfield Jr. & Guillermo Sapiro in npj Digital Medicine. Published June 1, 2018.

doi:10.1038/s41746-018-0024-6

MLA: Duke University. “Mobile App For Autism Screening Yields Useful Data.” NeuroscienceNews. NeuroscienceNews, 2 June 2018. <https://neurosciencenews.com/autism-app-data-9219/>.

APA: Duke University (2018, June 2). Mobile App For Autism Screening Yields Useful Data. NeuroscienceNews. Retrieved June 2, 2018 from https://neurosciencenews.com/autism-app-data-9219/

Chicago: Duke University. “Mobile App For Autism Screening Yields Useful Data.” https://neurosciencenews.com/autism-app-data-9219/ (accessed June 2, 2018).

Abstract

Automatic emotion and attention analysis of young children at home: a ResearchKit autism feasibility study

Current tools for objectively measuring young children’s observed behaviors are expensive, time-consuming, and require extensive training and professional administration. The lack of scalable, reliable, and validated tools impacts access to evidence-based knowledge and limits our capacity to collect population-level data in non-clinical settings. To address this gap, we developed mobile technology to collect videos of young children while they watched movies designed to elicit autism-related behaviors and then used automatic behavioral coding of these videos to quantify children’s emotions and behaviors. We present results from our iPhone study Autism & Beyond, built on ResearchKit’s open-source platform. The entire study—from an e-Consent process to stimuli presentation and data collection—was conducted within an iPhone-based app available in the Apple Store. Over 1 year, 1756 families with children aged 12–72 months old participated in the study, completing 5618 caregiver-reported surveys and uploading 4441 videos recorded in the child’s natural settings. Usable data were collected on 87.6% of the uploaded videos. Automatic coding identified significant differences in emotion and attention by age, sex, and autism risk status. This study demonstrates the acceptability of an app-based tool to caregivers, their willingness to upload videos of their children, the feasibility of caregiver-collected data in the home, and the application of automatic behavioral encoding to quantify emotions and attention variables that are clinically meaningful and may be refined to screen children for autism and developmental disorders outside of clinical settings. This technology has the potential to transform how we screen and monitor children’s development.