Summary: Combining brain activity data with artificial intelligence, researchers generated faces based upon what individuals considered to be attractive features.

Source: University of Helsinki

Researchers at the University of Helsinki and University of Copenhagen investigated whether a computer would be able to identify the facial features we consider attractive and, based on this, create new images matching our criteria. The researchers used artificial intelligence to interpret brain signals and combined the resulting brain-computer interface with a generative model of artificial faces. This enabled the computer to create facial images that appealed to individual preferences.

“In our previous studies, we designed models that could identify and control simple portrait features, such as hair colour and emotion. However, people largely agree on who is blond and who smiles. Attractiveness is a more challenging subject of study, as it is associated with cultural and psychological factors that likely play unconscious roles in our individual preferences. Indeed, we often find it very hard to explain what it is exactly that makes something, or someone, beautiful: Beauty is in the eye of the beholder,” says Senior Researcher and Docent Michiel Spapé from the Department of Psychology and Logopedics, University of Helsinki.

The study, which combines computer science and psychology, was published in February in the journal IEEE Transactions on Affective Computing.

Preferences exposed by the brain

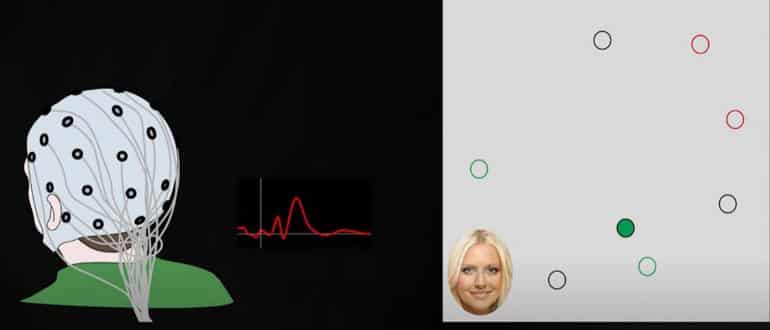

Initially, the researchers gave a generative adversarial neural network (GAN) the task of creating hundreds of artificial portraits. The images were shown, one at a time, to 30 volunteers who were asked to pay attention to faces they found attractive while their brain responses were recorded via electroencephalography (EEG).

“It worked a bit like the dating app Tinder: the participants ‘swiped right’ when coming across an attractive face. Here, however, they did not have to do anything but look at the images. We measured their immediate brain response to the images,” Spapé explains.

The researchers analysed the EEG data with machine learning techniques, connecting individual EEG data through a brain-computer interface to a generative neural network.

“A brain-computer interface such as this is able to interpret users’ opinions on the attractiveness of a range of images. By interpreting their views, the AI model interpreting brain responses and the generative neural network modelling the face images can together produce an entirely new face image by combining what a particular person finds attractive,” says Academy Research Fellow and Associate Professor Tuukka Ruotsalo, who heads the project.

To test the validity of their modelling, the researchers generated new portraits for each participant, predicting they would find them personally attractive. Testing them in a double-blind procedure against matched controls, they found that the new images matched the preferences of the subjects with an accuracy of over 80%.

“The study demonstrates that we are capable of generating images that match personal preference by connecting an artificial neural network to brain responses. Succeeding in assessing attractiveness is especially significant, as this is such a poignant, psychological property of the stimuli. Computer vision has thus far been very successful at categorising images based on objective patterns. By bringing in brain responses to the mix, we show it is possible to detect and generate images based on psychological properties, like personal taste,” Spapé explains.

Potential for exposing unconscious attitudes

Ultimately, the study may benefit society by advancing the capacity for computers to learn and increasingly understand subjective preferences, through interaction between AI solutions and brain-computer interfaces.

“If this is possible in something that is as personal and subjective as attractiveness, we may also be able to look into other cognitive functions such as perception and decision-making. Potentially, we might gear the device towards identifying stereotypes or implicit bias and better understand individual differences,” says Spapé.

About this AI research news

Contact: Michiel Spapé – University of Helsinki

Image: The image is credited to the Cognitive Computing research group

Original Research: Closed access.

“Brain-computer interface for generating personally attractive images” by M. Spape, K. Davis, L. Kangassalo, N. Ravaja, Z. Sovijarvi-Spape and T. Ruotsalo. IEEE Transactions on Affective Computing

Abstract

While we instantaneously recognize a face as attractive, it is much harder to explain what exactly defines personal attraction. This suggests that attraction depends on implicit processing of complex, culturally and individually defined features. Generative adversarial neural networks (GANs), which learn to mimic complex data distributions, can potentially model subjective preferences unconstrained by pre-defined model parameterization.

Here, we present generative brain-computer interfaces (GBCI), coupling GANs with brain-computer interfaces. GBCI first presents a selection of images and captures personalized attractiveness reactions toward the images via electroencephalography. These reactions are then used to control a GAN model, finding a representation that matches the features constituting an attractive image for an individual.
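The abstract does not spell out how the EEG reactions steer the GAN. As a rough illustration only, the sketch below assumes one simple strategy: each displayed face corresponds to a latent vector, a classifier marks which faces drew an "attractive" brain response, and the marked vectors are averaged into a single point that a generator could then decode into a new face. The function names, the latent dimension, and the simulated classifier output are all hypothetical and are not taken from the study.

```python
import random


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def estimate_preferred_latent(latents, predicted_attractive):
    """Average the latent vectors of images flagged as attractive.

    latents: one latent vector per displayed image.
    predicted_attractive: one boolean per image; in a real system this
    would come from a classifier applied to EEG responses (assumed here).
    """
    chosen = [z for z, flag in zip(latents, predicted_attractive) if flag]
    if not chosen:
        raise ValueError("no images were classified as attractive")
    return mean_vector(chosen)


# Simulated session: 200 displayed faces with 8-dimensional latent vectors.
rng = random.Random(0)
LATENT_DIM = 8  # hypothetical; real face GANs use far larger latent spaces
latents = [[rng.gauss(0, 1) for _ in range(LATENT_DIM)] for _ in range(200)]

# Stand-in for the EEG classifier: this imaginary participant responds to
# faces whose first latent coordinate is positive.
predicted_attractive = [z[0] > 0 for z in latents]

# The averaged point would be passed to the GAN generator (not shown here).
preferred = estimate_preferred_latent(latents, predicted_attractive)
```

In this toy setup the averaged vector shifts towards the feature the simulated participant responded to, while unrelated coordinates stay near zero.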

We conducted an experiment (N=30) to validate GBCI using a face-generating GAN and producing images that are hypothesized to be individually attractive. In double-blind evaluation of the GBCI-produced images against matched controls, we found GBCI yielded highly accurate results. Thus, the use of EEG responses to control a GAN presents a valid tool for interactive information-generation.

Furthermore, the GBCI-derived images visually replicated known effects from social neuroscience, suggesting that the individually responsive, generative nature of GBCI provides a powerful, new tool in mapping individual differences and visualizing cognitive-affective processing.