Summary: While Western people tend to look at lip movement when communicating, a new study reports Japanese people are less influenced by looking at the mouth when communicating.

Source: Kumamoto University.

Japanese people influenced less by lip movements when listening to another speaker: Evidence from neuroimaging study.

Which parts of a person’s face do you look at when you listen to them speak? Lip movements affect the perception of voice information from the ears when listening to someone speak, but native Japanese speakers are mostly unaffected by that part of the face. Recent research from Japan has revealed a clear difference in brain network activation between two groups of people, native English speakers and native Japanese speakers, during face-to-face vocal communication.

It is known that visual speech information, such as lip movement, affects the perception of voice information from the ears when speaking to someone face-to-face. For example, lip movement can help a person to hear better under noisy conditions. Conversely, dubbed movie content, where the lip movement conflicts with a speaker’s voice, can give a listener the illusion of hearing another sound. This illusion is called the “McGurk effect.”

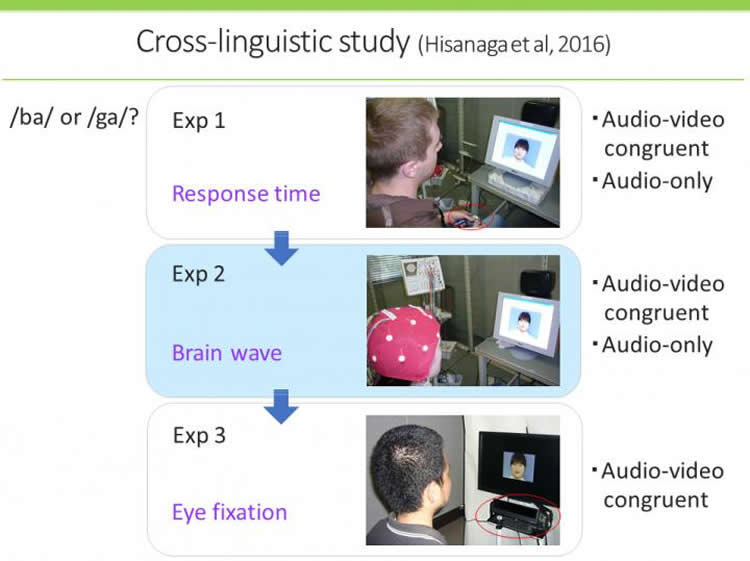

According to an analysis of previous behavioral studies, native Japanese speakers are not influenced by visual lip movements as much as native English speakers. To examine this phenomenon further, researchers from Kumamoto University measured and analyzed gaze patterns, brain waves, and reaction times for speech identification in two groups: 20 native Japanese speakers and 20 native English speakers.

The difference was clear. When natural speech is paired with lip movement, native English speakers focus their gaze on a speaker’s lips before the emergence of any sound. The gaze of native Japanese speakers, however, is not as fixed. Furthermore, native English speakers were able to understand speech faster by combining the audio and visual cues, whereas native Japanese speakers showed delayed speech understanding when lip motion was in view.

“Native English speakers attempt to narrow down candidates for incoming sounds by using information from the lips, which start moving a few hundred milliseconds before vocalizations begin. Native Japanese speakers, on the other hand, place their emphasis only on hearing, and visual information seems to require extra processing,” explained Kumamoto University’s Professor Kaoru Sekiyama, who led the research.

Kumamoto University researchers then teamed up with researchers from Sapporo Medical University and Japan’s Advanced Telecommunications Research Institute International (ATR) to measure and analyze brain activation patterns using functional magnetic resonance imaging (fMRI). Their goal was to elucidate differences in brain activity between speakers of the two languages.

The functional connectivity in the brain between the area that deals with hearing and the area that deals with visual motion information, the primary auditory and middle temporal areas respectively, was stronger in native English speakers than in native Japanese speakers. This result strongly suggests that auditory and visual information are associated with each other at an early stage of information processing in an English speaker’s brain, whereas the association is made at a later stage in a Japanese speaker’s brain. The functional connectivity between auditory and visual information, and the manner in which the two types of information are processed together, were shown to be clearly different between speakers of the two languages.

“It has been said that video materials produce better results when studying a foreign language. However, it has also been reported that video materials do not have a very positive effect for native Japanese speakers,” said Professor Sekiyama. “It may be that there are unique ways in which Japanese people process audio information, which are related to what we have shown in our recent research, that are behind this phenomenon.”

Funding: The study was funded by Japanese Grants-in-Aid for Scientific Research, National Institute of Information and Communications Technology.

Source: J. Sanderson, N. Fukuda – Kumamoto University

Image Source: This NeuroscienceNews.com image is credited to Dr. Kaoru Sekiyama.

Original Research: Full open access research for “Language/Culture Modulates Brain and Gaze Processes in Audiovisual Speech Perception” by Satoko Hisanaga, Kaoru Sekiyama, Tomohiko Igasaki & Nobuki Murayama in Scientific Reports. Published online October 13 2016 doi:10.1038/srep35265

Kumamoto University. “Hearing With Your Eyes: A Western Style of Speech Perception.” NeuroscienceNews. NeuroscienceNews, 15 November 2016. <https://neurosciencenews.com/speech-perception-eyes-5519/>.

Abstract

Language/Culture Modulates Brain and Gaze Processes in Audiovisual Speech Perception

Several behavioural studies have shown that the interplay between voice and face information in audiovisual speech perception is not universal. Native English speakers (ESs) are influenced by visual mouth movement to a greater degree than native Japanese speakers (JSs) when listening to speech. However, the biological basis of these group differences is unknown. Here, we demonstrate the time-varying processes of group differences in terms of event-related brain potentials (ERP) and eye gaze for audiovisual and audio-only speech perception. On a behavioural level, while congruent mouth movement shortened the ESs’ response time for speech perception, the opposite effect was observed in JSs. Eye-tracking data revealed a gaze bias to the mouth for the ESs but not the JSs, especially before the audio onset. Additionally, the ERP P2 amplitude indicated that ESs processed multisensory speech more efficiently than auditory-only speech; however, the JSs exhibited the opposite pattern. Taken together, the ESs’ early visual attention to the mouth was likely to promote phonetic anticipation, which was not the case for the JSs. These results clearly indicate the impact of language and/or culture on multisensory speech processing, suggesting that linguistic/cultural experiences lead to the development of unique neural systems for audiovisual speech perception.
