Summary: Less is more when it comes to a successful smile, researchers report. In a new study, researchers presented over 800 participants with computer-generated images of smiling faces. They found that people rated smaller smiles as more genuine, while bigger smiles that showed more teeth were perceived less well.

Source: PLOS.

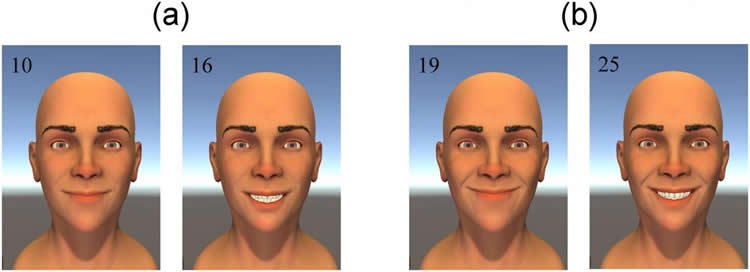

Participants rated 3-D computer-animated models for successful smile qualities.

Research using computer-animated 3D faces suggests that less is more for a successful smile, according to a study published June 28, 2017 in the open-access journal PLOS ONE by Nathaniel Helwig from the University of Minnesota, US, and colleagues.

Facial cues are an important form of nonverbal communication in social interactions, and previous studies indicate that computer-generated facial models can be useful for systematically studying how changes in expression over space and time affect how people read faces. The authors of the present study presented a series of 3D computer-animated facial models to 802 participants. Each model’s expression was altered by varying the mouth angle, extent of smile and the degree to which teeth were on show, as well as how symmetrically the smile developed, and participants were asked to rate smiles based on effectiveness, genuineness, pleasantness and perceived emotional intent.

The researchers found that a successful smile – one that is rated effective, genuine and pleasant – may contradict the “more is always better” principle, as a bigger smile which shows more teeth may in fact be perceived less well. Successful smiles therefore have an optimal balance of teeth, mouth angle and smile extent to hit a smile ‘sweet spot’. Smiles were also rated as more successful if they developed quite symmetrically, with the left and right sides of the face synced to within 125 milliseconds.

According to the authors, using 3D computer animation may help to develop a more complete spatiotemporal understanding of our emotional perceptions of facial expression. Since some people have medical conditions, such as stroke, that hinder facial expressions, with possible psychological and social consequences, these results could also inform current medical practices for facial reanimation surgery and rehabilitation.

Funding: The study was supported by the Swiss National Science Foundation (grant PP00P3-130191), the Centre d’Imagerie BioMédicale of the University of Lausanne, as well as the Foundation Rossi Di Montalera, the LifeWatch Foundation, the AnnMarie and Per Ahlqvist Foundation, the Torsten Soderberg Foundation and the Swedish Science Council.

Source: Beth Jones – PLOS

Image Source: NeuroscienceNews.com. The image is credited to Helwig et al. (2017).

Original Research: Full open access research for “Dynamic properties of successful smiles” by Nathaniel E. Helwig, Nick E. Sohre, Mark R. Ruprecht, Stephen J. Guy, and Sofía Lyford-Pike in PLOS ONE. Published online June 28 2017 doi:10.1371/journal.pone.0179708

MLA: PLOS “Facial Models Suggest Less May Be More For a Successful Smile.” NeuroscienceNews. NeuroscienceNews, 28 June 2017. <https://neurosciencenews.com/smile-facial-model-6998/>.

APA: PLOS (2017, June 28). Facial Models Suggest Less May Be More For a Successful Smile. NeuroscienceNews. Retrieved June 28, 2017 from https://neurosciencenews.com/smile-facial-model-6998/

Chicago: PLOS “Facial Models Suggest Less May Be More For a Successful Smile.” https://neurosciencenews.com/smile-facial-model-6998/ (accessed June 28, 2017).

Abstract

Dynamic properties of successful smiles

Facial expression of emotion is a foundational aspect of social interaction and nonverbal communication. In this study, we use a computer-animated 3D facial tool to investigate how dynamic properties of a smile are perceived. We created smile animations where we systematically manipulated the smile’s angle, extent, dental show, and dynamic symmetry. Then we asked a diverse sample of 802 participants to rate the smiles in terms of their effectiveness, genuineness, pleasantness, and perceived emotional intent. We define a “successful smile” as one that is rated effective, genuine, and pleasant in the colloquial sense of these words. We found that a successful smile can be expressed via a variety of different spatiotemporal trajectories, involving an intricate balance of mouth angle, smile extent, and dental show combined with dynamic symmetry. These findings have broad applications in a variety of areas, such as facial reanimation surgery, rehabilitation, computer graphics, and psychology.
