Summary: Using a ‘smell virtual landscape’, researchers discover the mammalian brain can map our surroundings based on smells alone.

Source: Northwestern University.

Northwestern University researchers have developed a new “smell virtual landscape” that enables the study of how smells engage the brain’s navigation system. The work demonstrates, for the first time, that the mammalian brain can form a map of its surroundings based solely on smells.

The olfactory-based virtual reality system could lead to a fuller understanding of odor-guided navigation and explain why mammals have an aversion to unpleasant odors, an attraction to pheromones and an innate preference for one odor over another. The system could also help tech developers incorporate smell into current virtual reality systems to give users a more multisensory experience.

“We have invented what we jokingly call a ‘smellovision,’” said Daniel A. Dombeck, associate professor of neurobiology in Northwestern’s Weinberg College of Arts and Sciences, who led the study. “It is the world’s first method to control odorant concentrations rapidly in space for mammals as they move around.”

The study was published online today, Feb. 26, by the journal Nature Communications.

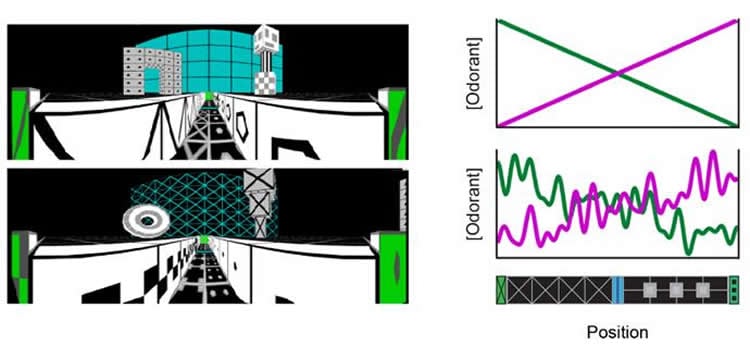

Researchers have long known that odors can guide animals’ behaviors. But studying this phenomenon has been difficult because odors are nearly impossible to control as they naturally travel and diffuse in the air. By using a virtual reality system made of smells instead of audio and visuals, Dombeck and graduate student Brad Radvansky created a landscape in which smells can be controlled and maintained.

“Imagine a room in which each position is defined by a unique smell profile,” Radvansky said. “And imagine that this profile is maintained no matter how much time elapses or how fast you move through the room.”

That is exactly what Dombeck’s team developed and tested in mice. Aided by a predictive algorithm that determined precise timing and distributions, the airflow system pumped scents – such as bubblegum, pine and a sour smell – past the mouse’s nose to create a virtual room. Mice first explored the virtual environment through both visual and olfactory cues. Researchers then shut off the visual virtual reality system, forcing the mice to navigate the room in total darkness based on olfactory cues alone. The mice showed no decrease in performance, and the study indicated that moving through a smell landscape engages the brain’s spatial mapping mechanisms.
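The idea of a position-defined smell profile, kept accurate by prediction, can be illustrated with a minimal sketch. This is not the algorithm used in the study: the two-odor linear gradient, the track length, the delivery latency and every function name below are assumptions chosen for illustration, and simple linear extrapolation stands in for whatever predictive method the researchers actually used.

```python
from dataclasses import dataclass

# All constants are hypothetical values chosen for illustration only.
TRACK_LENGTH_M = 2.0       # assumed length of a one-dimensional virtual track
DELIVERY_LATENCY_S = 0.1   # assumed delay between a command and odor arrival


@dataclass
class OdorCommand:
    """Requested concentrations as fractions of maximum (0.0 to 1.0)."""
    odor_a: float
    odor_b: float


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def odor_profile(position_m: float) -> OdorCommand:
    """Map a track position to a unique mixture of two odors.

    Odor A fades and odor B grows along the track, so every position
    has its own smell profile regardless of elapsed time.
    """
    frac = clamp(position_m / TRACK_LENGTH_M, 0.0, 1.0)
    return OdorCommand(odor_a=1.0 - frac, odor_b=frac)


def predictive_command(position_m: float, velocity_m_s: float) -> OdorCommand:
    """Command the mixture for where the animal is expected to be
    when the odor arrives, not where it is now (linear extrapolation)."""
    predicted_m = position_m + velocity_m_s * DELIVERY_LATENCY_S
    return odor_profile(predicted_m)


if __name__ == "__main__":
    # A stationary animal gets the profile for its current position;
    # a moving animal gets the profile for a point slightly ahead.
    print(predictive_command(position_m=1.0, velocity_m_s=0.0))
    print(predictive_command(position_m=1.0, velocity_m_s=1.0))
```

In this sketch the prediction step is what keeps the profile tied to position "no matter how fast you move": without it, a fast-moving animal would smell the mixture belonging to a spot it has already left.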

Not only can the platform help researchers learn more about how the brain processes and uses smells, it could also lay the groundwork for human applications.

“Development of virtual reality technology has mainly focused on vision and sound,” Dombeck said. “It is likely that our technology will eventually be incorporated into commercial virtual reality systems to create a more immersive multisensory experience for humans.”

Funding: The research was supported by The McKnight Foundation, The Klingenstein Foundation, The Whitehall Foundation, the Chicago Biomedical Consortium with support from the Searle Funds of The Chicago Community Trust, the National Institutes of Health (award number 1R01MH101297) and the National Science Foundation (award number CRCNS 1516235).

Source: Amanda Morris – Northwestern University

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image credited to Daniel Dombeck and Brad Radvansky/Northwestern University.

Original Research: Open access research in Nature Communications.

doi:10.1038/s41467-018-03262-4

MLA: Northwestern University “Brain Can Navigate Based Solely on Smells.” NeuroscienceNews. NeuroscienceNews, 26 February 2018. <https://neurosciencenews.com/smell-navigation-8567/>.

APA: Northwestern University (2018, February 26). Brain Can Navigate Based Solely on Smells. NeuroscienceNews. Retrieved February 26, 2018 from https://neurosciencenews.com/smell-navigation-8567/

Chicago: Northwestern University “Brain Can Navigate Based Solely on Smells.” https://neurosciencenews.com/smell-navigation-8567/ (accessed February 26, 2018).

Abstract

An olfactory virtual reality system for mice

All motile organisms use spatially distributed chemical features of their surroundings to guide their behaviors, but the neural mechanisms underlying such behaviors in mammals have been difficult to study, largely due to the technical challenges of controlling chemical concentrations in space and time during behavioral experiments. To overcome these challenges, we introduce a system to control and maintain an olfactory virtual landscape. This system uses rapid flow controllers and an online predictive algorithm to deliver precise odorant distributions to head-fixed mice as they explore a virtual environment. We establish an odor-guided virtual navigation behavior that engages hippocampal CA1 “place cells” that exhibit similar properties to those previously reported for real and visual virtual environments, demonstrating that navigation based on different sensory modalities recruits a similar cognitive map. This method opens new possibilities for studying the neural mechanisms of olfactory-driven behaviors, multisensory integration, innate valence, and low-dimensional sensory-spatial processing.