Summary: While previous studies point to an overlap between perception and memory, a new study finds the two are systematically different.

Source: NYU

The brain works in fundamentally different ways when remembering what we have seen compared to seeing something for the first time, a team of scientists has found.

While previous work had concluded there is significant overlap between these two processes, the new study, which appears in the journal Nature Communications, reveals they are systematically different.

“There are undoubtedly some similarities between the brain’s activity when people are seeing and remembering things, but there are also significant differences,” says Jonathan Winawer, a professor of psychology and neuroscience at New York University and the senior author of the paper.

“These distinctions are crucial to better understanding memory behavior and related afflictions.”

“We think these differences have to do with the architecture of the visual system itself and that the vision and memory processes produce different patterns of activity within this architecture,” adds Serra Favila, the paper’s lead author and an NYU doctoral student at the time of the study.

For decades, it was thought that recalling what we have seen—a sunset, a painting, another’s face—meant reactivating the same neuronal process used when seeing these images for the first time. However, the relationship between these activities—feedforward (vision) and feedback (memory)—is unclear.

To explore this, the research team, which also included Brice Kuhl, previously an assistant professor at NYU, conducted a series of experiments with human subjects.

Using functional MRI (fMRI), the scientists measured responses in the subjects’ visual cortex as they viewed images (simple geometric shapes in different locations on a computer screen) and, later, as they recalled those images from memory.

Varying the location of these visual shapes in the experiments allowed the researchers to monitor and understand memory activity in the visual system in a highly precise way.

The results showed some similarities between neuronal activity when subjects first viewed these shapes and when they later recalled them: the parts of the visual cortex deployed when seeing something for the first time (perception) were also active during memory processing.

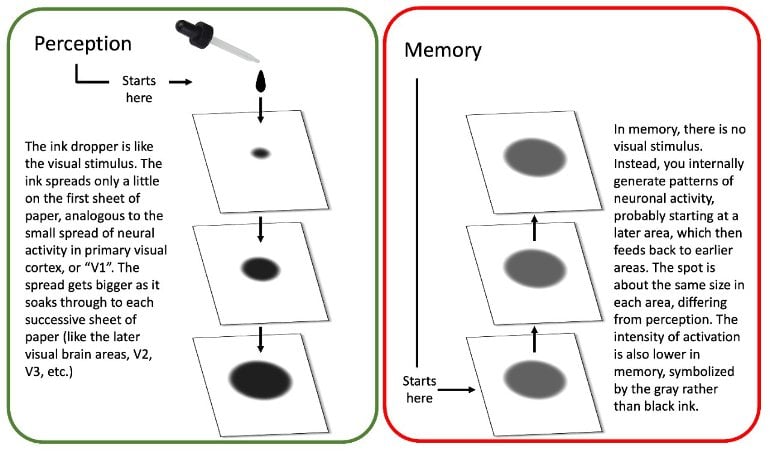

However, activity during memory also differed from activity during perception in highly systematic ways. Many of these differences stem from how visual scenes are mapped onto the brain. The brain has dozens of visual areas to process and store incoming images. These areas are arranged in a hierarchy—a long-understood characteristic.

More specifically, the primary visual cortex (V1) is at the bottom of the hierarchy because it is the first area to receive visual inputs, and it maps the visual scene in fine spatial detail. The signals are then passed along to subsequent brain maps for further processing—to the secondary visual cortex, or V2, and then V3, etc.

The initial processing by the primary visual cortex accurately captures the spatial arrangement of images while the higher brain areas, such as the secondary visual cortex, extract more complex information—What shape does an object have? What color is it? Is it a cup or bowl? But what is gained in complexity is lost in spatial precision.

“The tradeoff is that as these higher areas extract more complex information, they become less concerned about the exact spatial arrangement of the image,” explains Winawer.

In the Nature Communications study, the researchers found that, during perception, viewing a small object activated a small part of the primary visual cortex, a larger part of the secondary visual cortex, and even larger parts of higher cortices.

This was expected due to the known properties of the visual hierarchy, they note. However, they found that this progression appears to be lost when recalling a visual stimulus (i.e., memory).

The scientists say this is akin to the way ink spreads on stacked pieces of paper. In perception, brain activity becomes more dispersed as it moves up the visual hierarchy.

By contrast, in memory, the ink starts out at the top of the hierarchy, already dispersed, and cannot get narrower as it goes back down, so the activity remains relatively constant across areas.
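The ink analogy can be made concrete with a toy simulation. The sketch below is not the network model from the paper. It is a minimal one-dimensional illustration that assumes each visual area pools its input with the same Gaussian weights and that feedback reuses those weights in reverse. All names and parameter values (`simulate`, `n_areas`, `sigma`, and so on) are hypothetical choices made for illustration.

```python
import math


def gaussian_kernel(sigma, dx):
    """Normalized Gaussian pooling weights, truncated at 4 sigma (assumed shape)."""
    m = int(round(4 * sigma / dx))
    k = [math.exp(-0.5 * (i * dx / sigma) ** 2) for i in range(-m, m + 1)]
    total = sum(k)
    return [v / total for v in k]


def convolve_same(signal, kernel):
    """Convolve and keep the output the same length as the input."""
    m = len(kernel) // 2
    n = len(signal)
    out = [0.0] * n
    for i, s in enumerate(signal):
        if s == 0.0:
            continue
        for j, w in enumerate(kernel):
            t = i + j - m
            if 0 <= t < n:
                out[t] += s * w
    return out


def spread(xs, activity):
    """Standard deviation of the activity profile: how far the 'ink' has spread."""
    total = sum(activity)
    mean = sum(x * a for x, a in zip(xs, activity)) / total
    var = sum((x - mean) ** 2 * a for x, a in zip(xs, activity)) / total
    return math.sqrt(var)


def simulate(n_areas=4, sigma=2.0, dx=0.25, extent=40.0):
    """Return activity widths per area (index 0 = lowest area) for
    feedforward (perception) and feedback (memory) passes."""
    n = int(round(extent / dx))
    xs = [i * dx for i in range(-n, n + 1)]
    kernel = gaussian_kernel(sigma, dx)

    # Perception: a point stimulus is pooled once per area on the way up.
    act = [0.0] * len(xs)
    act[n] = 1.0
    feedforward = []
    for _ in range(n_areas):
        act = convolve_same(act, kernel)
        feedforward.append(spread(xs, act))

    # Memory (assumption): reactivation begins with the already-broad pattern
    # in the top area and is pooled again at each step on the way down.
    feedback = [feedforward[-1]]
    for _ in range(n_areas - 1):
        act = convolve_same(act, kernel)
        feedback.append(spread(xs, act))
    feedback.reverse()  # reorder so index 0 is the lowest area
    return feedforward, feedback


ff_widths, fb_widths = simulate()
for area, (ff, fb) in enumerate(zip(ff_widths, fb_widths), start=1):
    print(f"area {area}: perception width {ff:.2f}, memory width {fb:.2f}")
```

In this toy version, the perception widths grow steadily from the lowest to the highest area, while the memory widths never drop below the width already present at the top and vary proportionally less across areas. The pooling step can only blur a pattern, never sharpen it, which is the point of the ink analogy.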

This loss of progression during memory may explain why remembering a scene is so different from seeing one, and why there tends to be so much less detail available in memory.

About this perception and memory research news

Author: Press Office

Contact: Press Office – NYU

Image: The image is credited to Jonathan Winawer, NYU’s Department of Psychology/New York University

Original Research: Open access.

“Perception and memory have distinct spatial tuning properties in human visual cortex” by Serra E. Favila et al. Nature Communications

Abstract

Reactivation of earlier perceptual activity is thought to underlie long-term memory recall. Despite evidence for this view, it is unclear whether mnemonic activity exhibits the same tuning properties as feedforward perceptual activity.

Here, we leverage population receptive field models to parameterize fMRI activity in human visual cortex during spatial memory retrieval.

Though retinotopic organization is present during both perception and memory, large systematic differences in tuning are also evident. Whereas there is a three-fold decline in spatial precision from early to late visual areas during perception, this pattern is not observed during memory retrieval.

This difference cannot be explained by reduced signal-to-noise or poor performance on memory trials. Instead, by simulating top-down activity in a network model of cortex, we demonstrate that this property is well explained by the hierarchical structure of the visual system.

Together, modeling and empirical results suggest that computational constraints imposed by visual system architecture limit the fidelity of memory reactivation in sensory cortex.