Using brain recordings and a computer model, an interdisciplinary team confounds the conventional wisdom about how the brain sorts out relevant versus irrelevant sensory inputs in making choices.

While eating lunch, you notice an insect buzzing around your plate. Its color and motion could both influence how you respond. If the insect were yellow and black you might decide it was a bee and move away. Conversely, you might simply be annoyed at the buzzing motion and shoo the insect away. You perceive both color and motion, and decide based on the circumstances. Our brains make such contextual decisions in a heartbeat. The mystery is how.

In an article published Nov. 7 in the journal Nature, a team of Stanford neuroscientists and engineers delves into this decision-making process and reports some findings that confound the conventional wisdom.

Until now, neuroscientists have believed that decisions of this sort involved two steps: one group of neurons that performed a gating function to ascertain whether motion or color was more relevant to the situation and a second group of neurons that considered only the sensory input relevant to making a decision under the circumstances.

But in a study that combined brain recordings from trained monkeys and a sophisticated computer model based on that biological data, Stanford neuroscientist William Newsome and three co-authors discovered that the entire decision-making process may occur in a localized region of the prefrontal cortex.

In this region of the brain, located in the frontal lobes just behind the forehead, they found that color and motion signals converged in a specific circuit of neurons. Based on their experimental evidence and computer simulations, the scientists hypothesized that these neurons act together to make two snap judgments: whether color or motion is the more relevant sensory input in the current context and what action to take.

“We were quite surprised,” said Newsome, the Harman Family Provostial Professor at the Stanford School of Medicine and lead author.

He and first author Valerio Mante, a former Stanford neurobiologist now at the University of Zurich and the Swiss Federal Institute of Technology, had begun the experiment expecting to find that the irrelevant signal, whether color or motion, would be gated out of the circuit long before the decision-making neurons went into action.

“What we saw instead was this complicated mix of signals that we could measure but whose meaning and underlying mechanism we couldn’t understand,” Newsome said. “These signals held information about the color and motion of the stimulus, which stimulus dimension was most relevant and the decision that the monkeys made. But the signals were profoundly mixed up at the single neuron level. We decided there was a lot more we needed to learn about these neurons and that the key to unlocking the secret might lie in a population level analysis of the circuit activity.”

To solve this brain puzzle the neurobiologists began a cross-disciplinary collaboration with Krishna Shenoy, a professor of electrical engineering at Stanford, and David Sussillo, co-first author on the paper and a postdoctoral scholar in Shenoy’s lab.

Sussillo created a software model to simulate how these neurons worked. The idea was to build a model sophisticated enough to mimic the decision-making process but easier to study than taking repeated electrical readings from a brain.

The general model architecture they used is called a recurrent neural network: a set of software modules designed to accept inputs and perform tasks similar to how biological neurons operate. The scientists designed this artificial neural network using computational techniques that enabled the software model to make itself more proficient at decision-making over time.
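As a rough illustration of what "recurrent" means here, the sketch below shows one update step of a very small recurrent network, in which each unit's new activity depends on the previous activity of all units plus the current input. The two-unit size, the hand-picked weights in `W_REC` and `W_IN`, and the `tanh` nonlinearity are assumptions made for this example only; they are not taken from the published model, which was trained rather than hand-wired.

```python
import math


def rnn_step(state, inputs, w_rec, w_in):
    """Return the next network state: tanh(w_rec @ state + w_in @ inputs)."""
    new_state = []
    for i in range(len(state)):
        # Contribution fed back from every unit's previous activity.
        recurrent = sum(w_rec[i][j] * state[j] for j in range(len(state)))
        # Contribution from the external inputs at this time step.
        external = sum(w_in[i][k] * inputs[k] for k in range(len(inputs)))
        new_state.append(math.tanh(recurrent + external))
    return new_state


# Hypothetical weights for a 2-unit network (illustrative values only).
W_REC = [[0.5, -0.3], [0.2, 0.4]]
W_IN = [[1.0, 0.0], [0.0, 1.0]]

state = [0.0, 0.0]
for _ in range(3):
    # The same input is presented for three consecutive time steps.
    state = rnn_step(state, [0.1, -0.1], W_REC, W_IN)
```

Because the state is fed back into the next step, the network's activity at any moment reflects its input history, not just the current input.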

“We challenged the artificial system to solve a problem analogous to the one given to the monkeys,” Sussillo explained. “But we didn’t tell the neural network how to solve the problem.”

As a result, once the artificial network learned to solve the task, the scientists could study the model to develop inferences about how the biological neurons might be working.

The entire process was grounded in the biological experiments.

The neuroscientists trained two macaque monkeys to view a random-dot visual display that had two different features – motion and color. For any given presentation, the dots could move to the right or left, and the color could be red or green. The monkeys were taught to use sideways glances to answer two different questions depending on the currently instructed “rule” or context. Were there more red or green dots (ignore the motion)? Or were the dots moving to the left or right (ignore the color)?
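The rule the monkeys had to follow can be written down in a few lines. The sketch below is only a restatement of the task logic described above; the function name `correct_answer`, the signed-number encoding of the stimulus (positive for rightward motion or mostly red dots, negative for leftward motion or mostly green dots) and the context labels are assumptions chosen for illustration.

```python
def correct_answer(motion, color, context):
    """Return the correct answer for one trial of the two-question task.

    motion:  signed strength, > 0 means rightward, < 0 means leftward
    color:   signed strength, > 0 means mostly red, < 0 means mostly green
    context: "motion" or "color", the question currently being asked
    Both strengths are assumed to be nonzero.
    """
    if context == "motion":
        # The color of the dots is ignored entirely.
        return "right" if motion > 0 else "left"
    if context == "color":
        # The direction of motion is ignored entirely.
        return "red" if color > 0 else "green"
    raise ValueError("context must be 'motion' or 'color'")


# The same display calls for different answers under the two rules.
print(correct_answer(0.5, -0.2, "motion"))
print(correct_answer(0.5, -0.2, "color"))
```

The point of the design is that an identical display can demand different answers, so the animal cannot respond correctly without taking the context into account.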

Eye-tracking instruments recorded the glances, or saccades, that the monkeys used to register their responses. Their answers were correlated with recordings of neuronal activity taken directly from an area in the prefrontal cortex known to control saccadic eye movements.

The neuroscientists collected 1,402 such experimental measurements. Each time the monkeys were asked one or the other question. The idea was to obtain brain recordings at the moment when the monkeys saw a visual cue that established the context (either the red/green or left/right question) and at the moment when the animal made its decision regarding color or direction of motion.

It was the puzzling mish-mash of signals in the brain recordings from these experiments that prompted the scientists to build the recurrent neural network as a way to rerun the experiment, in a simulated way, time and time again.

As the four researchers became confident that their software simulations accurately mirrored the actual biological behavior, they studied the model to learn exactly how it solved the task. This allowed them to form a hypothesis about what was occurring in that patch of neurons in the prefrontal cortex where perception and decision occurred.

“The idea is really very simple,” Sussillo explained.

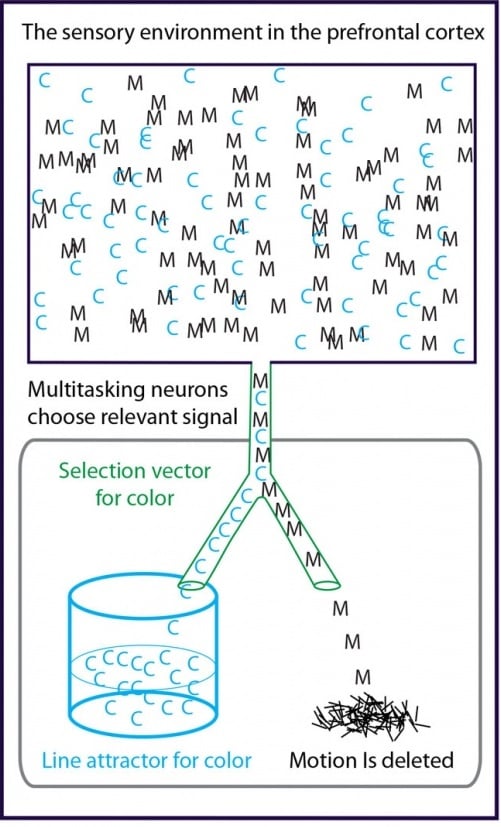

Their hypothesis revolves around two mathematical concepts: a line attractor and a selection vector.

The entire group of neurons being studied received sensory data about both the color and the motion of the dots.

The line attractor is a mathematical representation of the amount of information that this group of neurons was getting about either of the relevant inputs, color or motion.

The selection vector represented how the model responded when the experimenters flashed one of the two questions: red or green, left or right?

What the model showed was that when the question pertained to color, the selection vector directed the artificial neurons to accept color information while ignoring the irrelevant motion information. Color data became the line attractor. After a split second these neurons registered a decision, choosing the red or green answer based on the data they were supplied.

If the question was about motion, the selection vector directed motion information to the line attractor, and the artificial neurons chose left or right.
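A heavily simplified way to picture this mechanism is a single running total that only the selected input can push. In the sketch below, the running total `x` stands in for position along the line attractor, and the context-dependent pair of weights in `SELECTION` stands in for the selection vector. This one-dimensional caricature, along with every name and number in it, is an assumption made for illustration; the model described in the paper is a trained network with many units, not this hand-built integrator.

```python
# Hypothetical "selection vectors": weights on (motion, color) per context.
SELECTION = {
    "motion": (1.0, 0.0),  # motion evidence is accumulated, color is not
    "color": (0.0, 1.0),   # color evidence is accumulated, motion is not
}


def integrate(motion, color, context, steps=10):
    """Accumulate the selected evidence into a single running total."""
    sel_motion, sel_color = SELECTION[context]
    x = 0.0  # position along the line; it only moves when pushed by input
    for _ in range(steps):
        # Both inputs arrive on every step; only the selected one moves x.
        x += sel_motion * motion + sel_color * color
    return x


def decide(motion, color, context, steps=10):
    """Read out a choice from the sign of the accumulated evidence."""
    x = integrate(motion, color, context, steps)
    if context == "motion":
        return "right" if x > 0 else "left"
    return "red" if x > 0 else "green"


print(decide(0.5, -0.2, "motion"))
print(decide(0.5, -0.2, "color"))
```

Both inputs reach the integrator on every step, which mirrors the finding that the irrelevant signal is not filtered out beforehand; what changes with context is only which input is allowed to move the running total.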

“The amazing part is that a single neuronal circuit is doing all of this,” Sussillo said. “If our model is correct, then almost all neurons in this biological circuit appear to be contributing to almost all parts of the information selection and decision-making mechanism.”

Newsome put it like this: “We think that all of these neurons are interested in everything that’s going on, but they’re interested to different degrees. They’re multitasking like crazy.”

Researchers who know the work but were not directly involved in it have commented on the paper.

“This is a spectacular example of excellent experimentation combined with clever data analysis and creative theoretical modeling,” said Larry Abbott, Co-Director of the Center for Theoretical Neuroscience and the William Bloor Professor, Neuroscience, Physiology & Cellular Biophysics, Biological Sciences at Columbia University.

Christopher Harvey, a professor of neurobiology at Harvard Medical School, said the paper “provides major new hypotheses about the inner workings of the prefrontal cortex, which is a brain area that has frequently been identified as significant for higher cognitive processes but whose mechanistic functioning has remained mysterious.”

The Stanford scientists are now designing a new biological experiment to ascertain whether the interplay between selection vector and line attractor, which they deduced from their software model, can be measured in actual brain signals.

“The model predicts a very specific type of neural activity under very specific circumstances,” Sussillo said. “If we can stimulate the prefrontal cortex in the right way, and then measure this activity, we will have gone a long way to proving that the model mechanism is indeed what is happening in the biological circuit.”

Notes about this neuroscience research

The work was supported by the Howard Hughes Medical Institute, the Air Force Research Laboratory, a Pioneer Award from the National Institutes of Health and the Defense Advanced Research Projects Agency.

Contact: Tom Abate – Stanford School of Engineering

Source: Stanford School of Engineering press release

Image Source: The image is credited to David Sussillo/Stanford School of Engineering and is adapted from the press release.

Original Research: Abstract for “Context-dependent computation by recurrent dynamics in prefrontal cortex” by Valerio Mante, David Sussillo, Krishna V. Shenoy and William T. Newsome in Nature. Published online November 7, 2013. doi:10.1038/nature12742