Summary: Researchers have identified a flexible mechanism of visual information representation that changes with visual contrast.

Source: NIPS

The appearance of objects can often change. For example, at dusk or in fog, the contrast of objects decreases, making them difficult to distinguish. However, after repeatedly encountering specific objects, the brain can identify them even when they become indistinct.

The exact mechanism underlying the perception of familiar objects at low contrast has remained unknown.

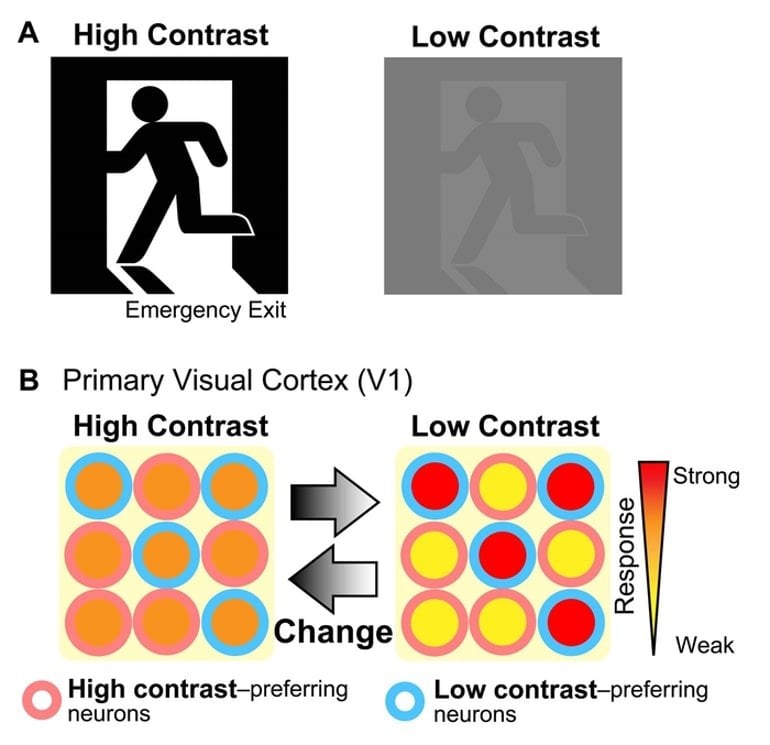

In the primary visual cortex (V1), the area of the cerebral cortex dedicated to processing basic visual information, visual responses have been thought to directly reflect the strength of external input. Thus, high-contrast visual stimuli elicit strong responses, and low-contrast stimuli elicit weak ones.

In this study, Rie Kimura and Yumiko Yoshimura found that in rats, the number of V1 neurons that preferentially respond to low-contrast stimuli increases after repeated visual experience.

In these neurons, low-contrast visual stimuli elicit stronger responses, and high-contrast stimuli elicit weaker responses. These low contrast–preferring neurons are more active when rats correctly perceive a low-contrast familiar object.

The study, reported in Science Advances, is the first to show that low-contrast preference in V1 is strengthened in an experience-dependent manner, allowing V1 to represent low-contrast visual information well.

This mechanism may contribute to the perception of familiar objects, even when they are indistinct.

“This flexible information representation may enable a consistent perception of familiar objects with any contrast,” Kimura says.

“The flexibility of our brain makes our sensation effective, although you may not be aware of it. An artificial neural network model may reproduce the human sensation by incorporating not only high contrast–preferring neurons, generally considered until now, but also low contrast–preferring neurons, the main focus of this research.”

About this visual neuroscience research news

Author: Hayao KIMURA

Source: NIPS

Contact: Hayao KIMURA – NIPS

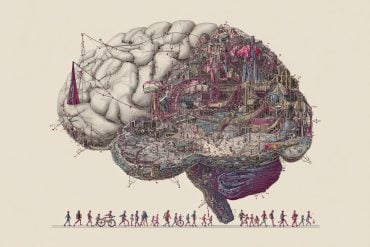

Image: The image is credited to Rie Kimura

Original Research: The findings will appear in Science Advances