Summary: Constraining hand movements affects the processing of object-meaning, a finding which supports the theory of embodied cognition.

Source: Osaka Metropolitan University

How do we understand words? Scientists don’t fully understand what happens when a word pops into your brain. A research group led by Professor Shogo Makioka at the Graduate School of Sustainable System Sciences, Osaka Metropolitan University, wanted to test the idea of embodied cognition.

Embodied cognition proposes that people understand the words for objects through how they interact with them, so the researchers devised a test to observe semantic processing of words when the ways that the participants could interact with objects were limited.

Words are expressed in relation to other words; a “cup,” for example, can be a “container, made of glass, used for drinking.” However, you can only use a cup if you understand that to drink from a cup of water, you hold it in your hand and bring it to your mouth, or that if you drop the cup, it will smash on the floor.

Without understanding this, it would be difficult to create a robot that can handle a real cup. In artificial intelligence research, this is known as the symbol grounding problem: the question of how symbols are mapped onto the real world.

How do humans achieve symbol grounding? Cognitive psychology and cognitive science propose the concept of embodied cognition, where objects are given meaning through interactions with the body and the environment.

To test embodied cognition, the researchers conducted experiments to see how the participants’ brains responded to words that describe objects that can be manipulated by hand, when the participants’ hands could move freely compared to when they were restrained.

“It was very difficult to establish a method for measuring and analyzing brain activity. The first author, Ms. Sae Onishi, worked persistently to come up with a task, in a way that we were able to measure brain activity with sufficient accuracy,” Professor Makioka explained.

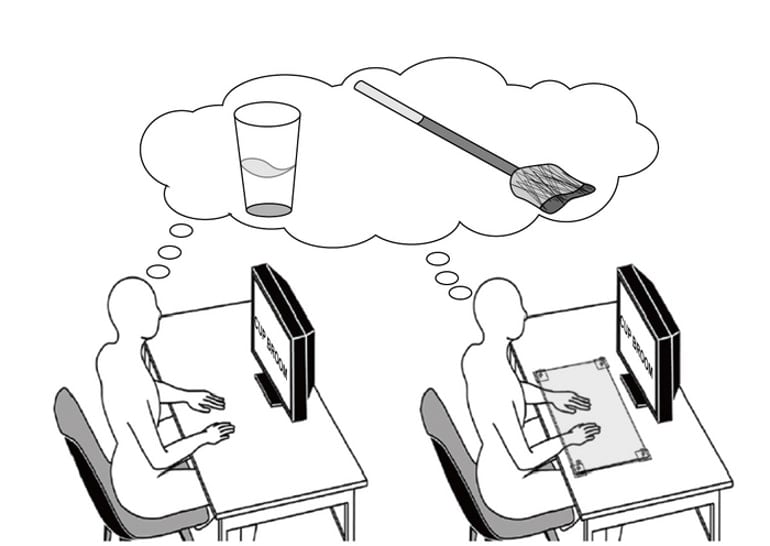

In the experiment, two words such as “cup” and “broom” were presented to participants on a screen. They were asked to compare the relative sizes of the objects those words represented and to verbally answer which object was larger—in this case, “broom.”

The word pairs described two types of objects: hand-manipulable objects, such as “cup” or “broom,” and nonmanipulable objects, such as “building” or “lamppost.” Comparing the two types allowed the researchers to observe how each was processed.

During the tests, the participants placed their hands on a desk, where they were either free or restrained by a transparent acrylic plate. When the two words were presented on the screen, to answer which one represented a larger object, the participants needed to think of both objects and compare their sizes, forcing them to process each word’s meaning.

Brain activity was measured with functional near-infrared spectroscopy (fNIRS), which has the advantage of taking measurements without imposing further physical constraints.

The measurements focused on the intraparietal sulcus and the inferior parietal lobule (supramarginal gyrus and angular gyrus) of the left hemisphere, which are responsible for semantic processing related to tools.

Verbal response time was also recorded, measured from the moment the words appeared on the screen to the participant’s answer.

The results showed that activity in the left intraparietal sulcus in response to hand-manipulable objects was significantly reduced when the hands were restrained. The speed of verbal responses was also affected by the hand constraint.

These results indicate that constraining hand movement affects the processing of object-meaning, which supports the idea of embodied cognition. They also suggest that embodied cognition could help artificial intelligence learn the meaning of objects.

About this cognition research news

Author: Yoshiko Tani

Contact: Yoshiko Tani – Osaka Metropolitan University

Image: The image is credited to Makioka, Osaka Metropolitan University

Original Research: Open access.

“Hand constraint reduces brain activity and affects the speed of verbal responses on semantic tasks” by Sae Onishi et al. Scientific Reports

Abstract

According to the theory of embodied cognition, semantic processing is closely coupled with body movements. For example, constraining hand movements inhibits memory for objects that can be manipulated with the hands. However, it has not been confirmed whether body constraint reduces brain activity related to semantics.

We measured the effect of hand constraint on semantic processing in the parietal lobe using functional near-infrared spectroscopy.

A pair of words representing the names of hand-manipulable (e.g., cup or pencil) or nonmanipulable (e.g., windmill or fountain) objects were presented, and participants were asked to identify which object was larger.

The reaction time (RT) in the judgment task and the activation of the left intraparietal sulcus (LIPS) and left inferior parietal lobule (LIPL), including the supramarginal gyrus and angular gyrus, were analyzed. We found that constraint of hand movement suppressed brain activity in the LIPS toward hand-manipulable objects and affected RT in the size judgment task.

These results indicate that body constraint reduces the activity of brain regions involved in semantics. Hand constraint might inhibit motor simulation, which, in turn, would inhibit body-related semantic processing.