Summary: Using a cappella recordings, researchers discover humans have developed complementary neural systems in each hemisphere for auditory stimuli.

Source: McGill University

Speech and music are two fundamentally human activities that are decoded in different brain hemispheres. A new study used a unique approach to reveal why this specialization exists.

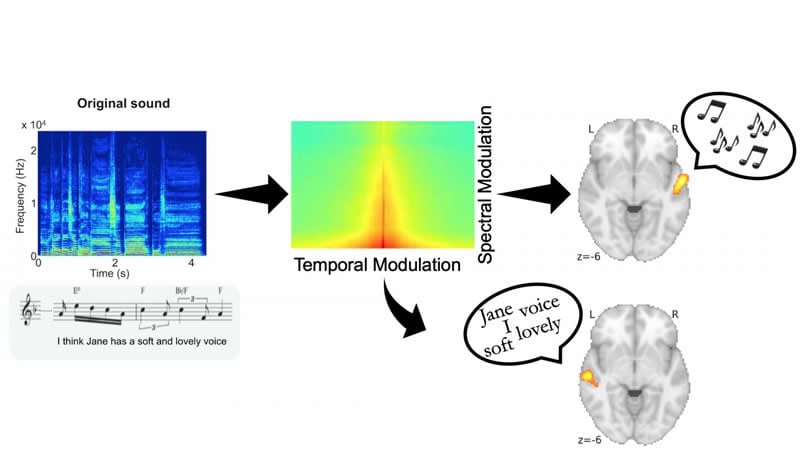

Researchers at The Neuro (Montreal Neurological Institute-Hospital) of McGill University created 100 a cappella recordings, each of a soprano singing a sentence. They then distorted the recordings along two fundamental auditory dimensions, spectral and temporal dynamics, and had 49 participants distinguish the words or the melodies of each song. The experiment was conducted in two groups, one of English speakers and one of French speakers, to enhance reproducibility and generalizability. The experiment is demonstrated here: https://www.zlab.mcgill.ca/spectro_temporal_modulations/

They found that for both languages, when the temporal information was distorted, participants had trouble distinguishing the speech content, but not the melody. Conversely, when spectral information was distorted, they had trouble distinguishing the melody, but not the speech. This shows that speech and melody depend on different acoustical features.
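To make the two dimensions concrete, the following is a minimal sketch of modulation filtering on a toy spectrogram. It is not the researchers' actual processing pipeline: the function name `filter_modulations`, its parameters, and the synthetic ripples are all assumptions made for illustration. The idea is that a spectrogram can be decomposed into patterns that vary over time (temporal modulations) and patterns that vary across frequency (spectral modulations), and either kind can be selectively removed.

```python
import numpy as np

def filter_modulations(spec, keep_temporal=1.0, keep_spectral=1.0):
    """Low-pass a spectrogram in the 2D modulation domain (hypothetical helper).

    spec: 2D array of shape (n_freq_channels, n_time_frames).
    keep_temporal / keep_spectral: fraction (0 to 1) of the temporal or
    spectral modulation range to keep; smaller values mean more degradation.
    """
    # The 2D Fourier transform of a spectrogram gives its modulation spectrum.
    mod = np.fft.fft2(spec)
    # Normalized modulation frequencies in cycles per sample (0 to 0.5).
    spectral_mod = np.abs(np.fft.fftfreq(spec.shape[0]))[:, None]
    temporal_mod = np.abs(np.fft.fftfreq(spec.shape[1]))[None, :]
    # Keep only modulations below the chosen cutoffs on each axis.
    mask = (spectral_mod <= 0.5 * keep_spectral) & (temporal_mod <= 0.5 * keep_temporal)
    return np.real(np.fft.ifft2(mod * mask))

# Toy spectrogram: one pattern varying across frequency, one varying over time.
freq = np.arange(64)[:, None]
time = np.arange(64)[None, :]
spectral_ripple = np.sin(2 * np.pi * 8 * freq / 64) * np.ones((1, 64))
temporal_ripple = np.ones((64, 1)) * np.sin(2 * np.pi * 8 * time / 64)
spec = spectral_ripple + temporal_ripple

# Degrade temporal information only: the spectral pattern survives.
temporal_degraded = filter_modulations(spec, keep_temporal=0.1)
# Degrade spectral information only: the temporal pattern survives.
spectral_degraded = filter_modulations(spec, keep_spectral=0.1)
```

In this toy case, removing temporal modulations leaves only the pattern across frequency, and removing spectral modulations leaves only the pattern over time, which mirrors the dissociation the participants showed between melody and speech.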

To test how the brain responds to these different sound features, the participants were then scanned with functional magnetic resonance imaging (fMRI) while they distinguished the sounds. The researchers found that speech processing occurred in the left auditory cortex, while melodic processing occurred in the right auditory cortex.

Music and speech exploit different ends of the spectro-temporal continuum

Next, they set out to test how degradation in each acoustic dimension would affect brain activity. They found that degradation of the spectral dimension only affected activity in the right auditory cortex, and only during melody perception, while degradation of the temporal dimension affected only the left auditory cortex, and only during speech perception. This shows that the differential response in each hemisphere depends on the type of acoustical information in the stimulus.

Previous studies in animals have found that neurons in the auditory cortex respond to particular combinations of spectral and temporal energy, and are highly tuned to sounds that are relevant to the animal in its natural environment, such as communication sounds. For humans, both speech and music are important means of communication. This study shows that music and speech exploit different ends of the spectro-temporal continuum, and that hemispheric specialization may be the nervous system’s way of optimizing the processing of these two communication methods.

Solving the mystery of hemispheric specialization

“It has been known for decades that the two hemispheres respond to speech and music differently, but the physiological basis for this difference remained a mystery,” says Philippe Albouy, the study’s first author. “Here we show that this hemispheric specialization is linked to basic acoustical features that are relevant for speech and music, thus tying the finding to basic knowledge of neural organization.”

The results were published in the journal Science on Feb. 28, 2020.

Funding: The study was funded by a Banting fellowship to Albouy and by grants to senior author Robert Zatorre from the Canadian Institutes of Health Research and from the Canadian Institute for Advanced Research. A cappella recordings were made with the help of McGill University’s Schulich School of Music.

Media Contacts:

Shawn Hayward – McGill University

Image Source:

The image is credited to Robert Zatorre.

Original Research: Closed access

“Distinct sensitivity to spectrotemporal modulation supports brain asymmetry for speech and melody”. Philippe Albouy, Lucas Benjamin, Benjamin Morillon, Robert J. Zatorre.

Science doi:10.1126/science.aaz3468.

Abstract

Distinct sensitivity to spectrotemporal modulation supports brain asymmetry for speech and melody

Does brain asymmetry for speech and music emerge from acoustical cues or from domain-specific neural networks? We selectively filtered temporal or spectral modulations in sung speech stimuli for which verbal and melodic content was crossed and balanced. Perception of speech decreased only with degradation of temporal information, whereas perception of melodies decreased only with spectral degradation. Functional magnetic resonance imaging data showed that the neural decoding of speech and melodies depends on activity patterns in left and right auditory regions, respectively. This asymmetry is supported by specific sensitivity to spectrotemporal modulation rates within each region. Finally, the effects of degradation on perception were paralleled by their effects on neural classification. Our results suggest a match between acoustical properties of communicative signals and neural specializations adapted to that purpose.