Summary: Combined fMRI and MEG neuroimaging implicates the occipital place area (OPA) in our ability to rapidly sense our surroundings. The findings may help improve machine learning and robotics technology aimed at mimicking visual processes in the human brain.

Source: Zuckerman Institute

To move through the world, you need a sense of your surroundings, especially of the constraints that restrict your movement: the walls, ceiling and other barriers that define the geometry of the navigable space around you. And now, a team of neuroscientists has identified an area of the human brain dedicated to perceiving this geometry. This brain region encodes the spatial constraints of a scene at lightning-fast speeds and likely contributes to our instant sense of our surroundings, orienting us in space so we can avoid bumping into things, figure out where we are and navigate safely through our environment.

This research, published today in Neuron, sets the stage for understanding the complex computations our brains do to help us get around. Led by scientists at Columbia University’s Mortimer B. Zuckerman Mind Brain Behavior Institute and Aalto University in Finland, the work is also relevant to the development of artificial intelligence technology aimed at mimicking the visual powers of the human brain.

“Vision gives us an almost instant sense where we are in space, and in particular of the geometry of the surfaces — the ground, the walls — which constrain our movement. It feels effortless, but it requires the coordinated activity of multiple brain regions,” said Nikolaus Kriegeskorte, PhD, a principal investigator at Columbia’s Zuckerman Institute and the paper’s senior author. “How neurons work together to give us this sense of our surroundings has remained mysterious. With this study, we are a step closer to solving that puzzle.”

To figure out how the brain perceives the geometry of its surroundings, the research team asked volunteers to look at images of different three-dimensional scenes. An image might depict a typical room, with three walls, a ceiling and a floor. The researchers then systematically changed the scene by removing a wall, for instance, or the ceiling. Simultaneously, they monitored participants’ brain activity through a combination of two cutting-edge brain-imaging technologies at Aalto’s neuroimaging facilities in Finland.

“By doing this repeatedly for each participant as we methodically altered the images, we could piece together how their brains encoded each scene,” said Linda Henriksson, PhD, the paper’s first author and a lecturer in neuroscience and biomedical engineering at Aalto University.

Our visual system is organized into a hierarchy of stages. The first stage actually lies outside the brain, in the retina, which can detect simple visual features. Subsequent stages in the brain have the power to detect more complex shapes. By processing visual signals through multiple stages — and by repeated communications between the stages — the brain forms a complete picture of the world, with all its colors, shapes and textures.

In the cortex, visual signals are first analyzed in an area called the primary visual cortex. They are then passed to several higher-level cortical areas for further analyses. The occipital place area (OPA), an intermediate-level stage of cortical processing, proved particularly interesting in the brain scans of the participants.

“Previous studies had shown that OPA neurons encode scenes, rather than isolated objects,” said Dr. Kriegeskorte, who is also a professor of psychology and neuroscience and director of cognitive imaging at Columbia. “But we did not yet understand what aspect of the scenes this region’s millions of neurons encoded.”

After analyzing the participants’ brain scans, Drs. Kriegeskorte and Henriksson found that the OPA activity reflected the geometry of the scenes. The OPA activity patterns reflected the presence or absence of each scene component (the walls, the floor and the ceiling), conveying a detailed picture of the overall geometry of the scene. However, the OPA activity patterns did not depend on the components’ appearance, that is, the textures of the walls, floor and ceiling. This suggests that the region ignores surface appearance so as to focus solely on surface geometry. The brain region appeared to perform all the computations needed to get a sense of a room’s layout extremely fast: in roughly 100 milliseconds.

“The speed with which our brains sense the basic geometry of our surroundings is an indication of the importance of having this information quickly,” said Dr. Henriksson.

“It is key to knowing whether you’re inside or outside, or what might be your options for navigation.”

The insights gained in this study were possible through the joint use of two complementary imaging technologies: functional magnetic resonance imaging (fMRI) and magnetoencephalography (MEG). fMRI measures local changes in blood oxygen levels, which reflect local neuronal activity. It can reveal detailed spatial activity patterns at a resolution of a couple of millimeters, but it is not very precise in time, as each fMRI measurement reflects the average activity over five to eight seconds. By contrast, MEG measures magnetic fields generated by the brain. It can track activity with millisecond temporal precision but does not give as spatially detailed a picture.

“When we combine these two technologies, we can address both where the activity occurs and how quickly it emerges,” said Dr. Henriksson, who collected the imaging data at Aalto University.

Moving forward, the research team plans to incorporate virtual reality technology to create more realistic 3D environments for participants to experience. They also plan to build neural network models that mimic the brain’s ability to perceive the environment.

“We would like to put these things together and build computer vision systems that are more like our own brains, systems that have specialized machinery like what we observe here in the human brain for rapidly sensing the geometry of the environment,” said Dr. Kriegeskorte.

This paper is titled “Rapid invariant encoding of scene layout in human OPA.” Marieke Mur, PhD, who has since joined Western University in Ontario, Canada, also contributed to this research.

Funding: This research was supported by the Academy of Finland (Postdoctoral Research Grant; 278957), the British Academy (Postdoctoral Fellowship; PS140117) and the European Research Council (ERC-2010-StG 261352).

The authors report no financial or other conflicts of interest.

Media Contacts:

Anne Holden – Zuckerman Institute

Image Source:

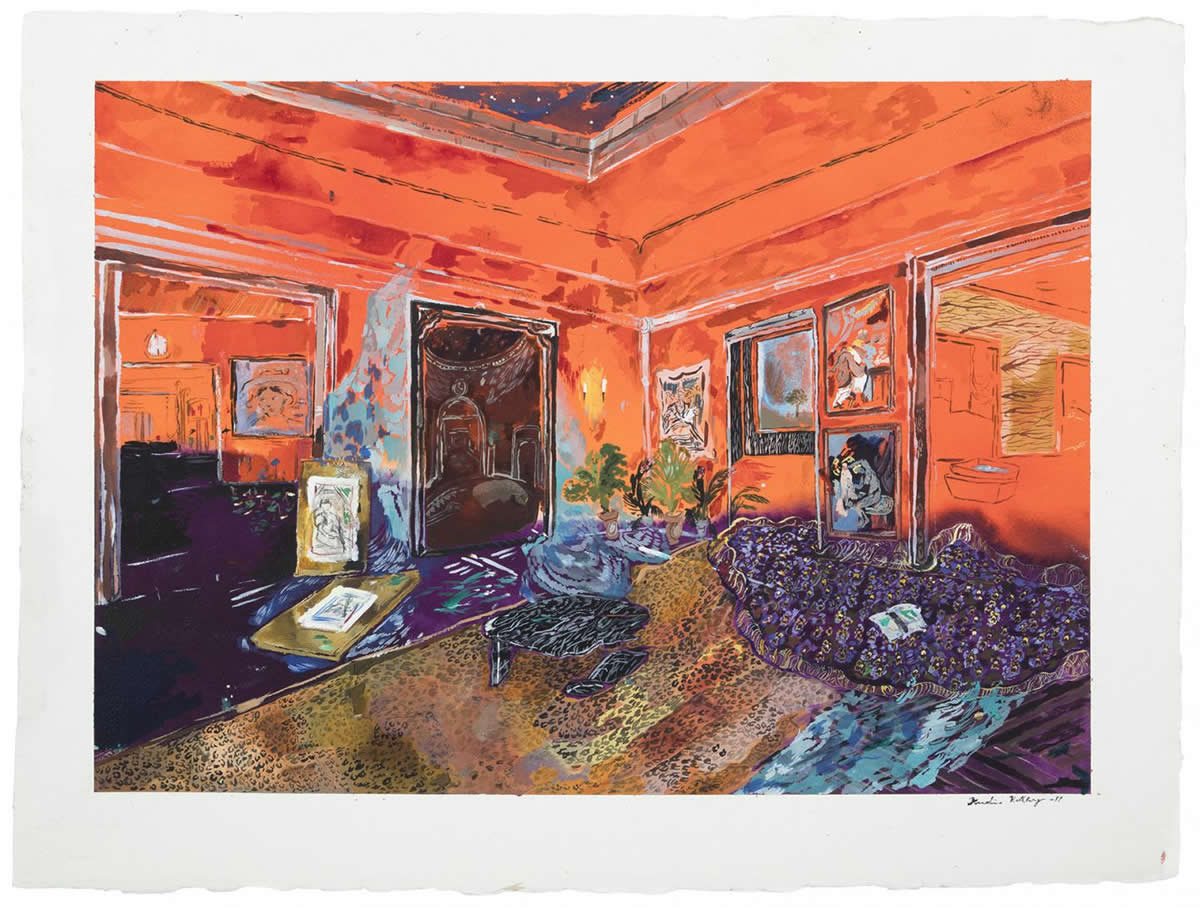

The image is credited to Karoliina Hellberg; photographer: J. Tiainen.

Original Research: Closed access

“Rapid Invariant Encoding of Scene Layout in Human OPA”. Linda Henriksson, Marieke Mur, and Nikolaus Kriegeskorte.

Neuron. doi:10.1016/j.neuron.2019.04.014

Abstract

Rapid Invariant Encoding of Scene Layout in Human OPA

Highlights

• A complete set of scene layouts is constructed in three surface textures (96 stimuli)

• Layout discrimination in OPA generalizes across surface textures

• PPA shows better texture than layout decoding

• MEG reveals rapid emergence of the layout encoding

Summary

Successful visual navigation requires a sense of the geometry of the local environment. How do our brains extract this information from retinal images? Here we visually presented scenes with all possible combinations of five scene-bounding elements (left, right, and back walls; ceiling; floor) to human subjects during functional magnetic resonance imaging (fMRI) and magnetoencephalography (MEG). The fMRI response patterns in the scene-responsive occipital place area (OPA) reflected scene layout with invariance to changes in surface texture. This result contrasted sharply with the primary visual cortex (V1), which reflected low-level image features of the stimuli, and the parahippocampal place area (PPA), which showed better texture than layout decoding. MEG indicated that the texture-invariant scene layout representation is computed from visual input within ∼100 ms, suggesting a rapid computational mechanism. Taken together, these results suggest that the cortical representation underlying our instant sense of the environmental geometry is located in the OPA.
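The stimulus count in the highlights follows directly from the design described in the summary: five scene-bounding elements, each either present or absent, give 2^5 = 32 layouts, and rendering each layout in three surface textures gives 96 stimuli. The short Python sketch below only illustrates that arithmetic; it is not the authors' stimulus-generation code, and the texture labels are placeholders, since the article does not name the textures.

```python
from itertools import product

# The five scene-bounding elements named in the summary.
ELEMENTS = ("left wall", "right wall", "back wall", "ceiling", "floor")

# Placeholder labels: the article says there were three textures
# but does not name them.
TEXTURES = ("texture A", "texture B", "texture C")

# Every layout is one present/absent choice per element: 2**5 combinations.
layouts = list(product((False, True), repeat=len(ELEMENTS)))

# Each layout is rendered once per texture.
stimuli = [(layout, texture) for texture in TEXTURES for layout in layouts]


def describe(layout):
    """Return a readable list of the elements present in a layout."""
    present = [name for name, on in zip(ELEMENTS, layout) if on]
    return ", ".join(present) if present else "no elements"


print(len(layouts))  # 32 layouts
print(len(stimuli))  # 96 stimuli
print(describe(layouts[-1]))  # the full room, with all five elements
```

Note that the full set includes the empty scene (no elements) and the fully enclosed room, which is what "all possible combinations" implies.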