A region of the brain known to play a key role in visual and spatial processing has a parallel function: sorting visual information into categories, according to a new study by researchers at the University of Chicago.

Primates are known to have a remarkable ability to place visual stimuli into familiar and meaningful categories, such as fruit or vegetables. They can also direct their spatial attention to different locations in a scene and make spatially targeted movements, such as reaching.

The study, published in Neuron, shows that these very different types of information can be simultaneously encoded within the posterior parietal cortex. The research brings scientists a step closer to understanding how the brain interprets visual stimuli and solves complex tasks.

“We found that multiple functions can be mapped onto a particular region of the brain and even onto individual brain cells in that region,” said study author David Freedman, PhD, assistant professor of neurobiology at the University of Chicago. “These functions overlap. This particular brain area, even its individual neurons, can independently encode both spatial and cognitive signals.”

Freedman studies the effects of learning on the brain and how information is stored in short-term memory, with a focus on the areas that process visual stimuli. To examine this, he has taught monkeys to play a simple video game in which they learn to assign moving visual patterns to categories.

“The task is a bit like a baseball umpire calling balls and strikes,” he said, “since the monkeys have to sort the various motion patterns into two groups, or categories.”

The monkeys master the tasks over a few weeks of training. Once they do, the researchers record electrical signals from parietal lobe neurons while the subjects perform the categorization task. By measuring electrical activity patterns of these neurons, the researchers can decode the information conveyed by the neurons’ activity.

“The activity patterns in these parietal neurons carry strong information about the category that each motion pattern gets assigned to during the task,” Freedman said.

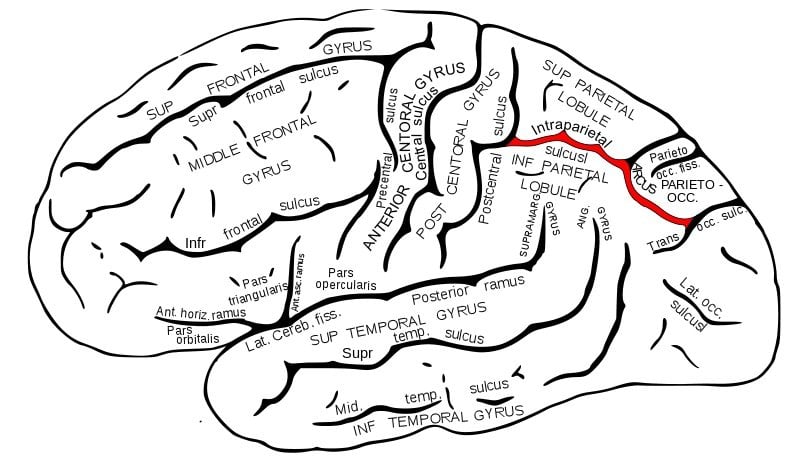

Over the years, his team’s work on categorization has zeroed in on the lateral intraparietal (LIP) area. Studies have shown that this area is vital to directing spatial attention and eye movements. But it had been unclear how an area involved in spatial attention and eye movements could also play a role in non-spatial functions such as visual categorization.

To compare spatial and category functions in the parietal lobe, Freedman and his team added a twist to the monkeys’ task. During the category task, the researchers required the subjects to make eye movements to visual cues at various positions on the computer screen while still categorizing the visual patterns.

Since this parietal brain area is known to be involved in eye movements, the eye movements could have disrupted category information in that part of the brain. Instead, parietal brain cells showed a simultaneous and independent encoding of both eye-movement and category information, a multiplexing of information at the level of single brain cells.

“These signals rode right on top of the eye-movement signals,” said the study’s first author, Chris Rishel, PhD, a recent graduate from Freedman’s laboratory. “We could decode both the eye-movement and the category signals with high accuracy. This tells us that different kinds of information that are usually considered quite unrelated were simultaneously and independently represented by neurons in this particular brain area.”
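As a rough illustration of what decoding two independent signals from the same cells can look like, the following sketch simulates a small population of neurons whose firing rates depend on both a category label and an eye-movement direction, then reads each label back out with a simple nearest-centroid decoder. This is not the analysis used in the study. The data are simulated, and every name and number here (`simulate_trials`, `decode_accuracy`, the tuning weights, the noise level, the trial counts) is a hypothetical choice made only for the example.

```python
import random


def simulate_trials(n_trials, n_neurons=20, noise=1.0, seed=0):
    """Simulate firing rates that mix a category signal and an eye-movement signal.

    All parameters are illustrative assumptions, not values from the study.
    """
    rng = random.Random(seed)
    # Hypothetical per-neuron tuning weights for each of the two signals.
    cat_w = [rng.gauss(0, 2) for _ in range(n_neurons)]
    eye_w = [rng.gauss(0, 2) for _ in range(n_neurons)]
    trials = []
    for _ in range(n_trials):
        category = rng.randint(0, 1)  # which of two motion categories
        eye_dir = rng.randint(0, 1)   # which of two eye-movement targets
        rates = [
            10 + cat_w[i] * category + eye_w[i] * eye_dir + rng.gauss(0, noise)
            for i in range(n_neurons)
        ]
        trials.append((rates, category, eye_dir))
    return trials


def centroid(rows):
    """Mean firing-rate vector across a list of trials."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sq_dist(a, b):
    """Squared Euclidean distance between two rate vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def decode_accuracy(train, test, label_index):
    """Nearest-centroid decoding of one label (1 = category, 2 = eye direction)."""
    centroids = {
        label: centroid([t[0] for t in train if t[label_index] == label])
        for label in (0, 1)
    }
    correct = 0
    for t in test:
        guess = min(centroids, key=lambda lab: sq_dist(t[0], centroids[lab]))
        correct += int(guess == t[label_index])
    return correct / len(test)


trials = simulate_trials(400)
train, test = trials[:200], trials[200:]
category_acc = decode_accuracy(train, test, 1)
eye_acc = decode_accuracy(train, test, 2)
print(f"category decoding accuracy: {category_acc:.2f}")
print(f"eye-movement decoding accuracy: {eye_acc:.2f}")
```

In this toy population, both labels can be read out from the same simulated cells because each neuron carries its own mix of the two signals. Real recordings are far noisier and call for more careful statistics than this sketch provides.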

Their results, the study authors note, “support the possibility that LIP plays a key role in transforming visual signals in earlier sensory areas into abstract category signals during category-based decision-making tasks.”

What does the brain gain from this territorial arrangement?

“There has long been a tendency to look at the many distinct anatomical areas of the cerebral cortex of the brain and to assume that each area is like a specialized module that plays a very specific function,” Freedman said. “Our results support the growing sense that most, if not all, of these brain areas have multiple overlapping roles.”

A brain that includes such overlapping functional centers may be more efficient, Freedman suggests. “It makes mapping these regions more complicated for scientists like us, but it may boost the brain’s capacity. If each area can do a number of different things, you can squeeze a lot more function into the same space.”

A next step is to understand how neuronal category representations develop in LIP neurons during the learning process, the authors said.

Notes about this neuroscience research

The paper, “Independent category and spatial encoding in parietal cortex,” will be published online March 6 by the journal Neuron. The National Institutes of Health funded this study with additional support from the National Science Foundation, the McKnight Endowment Fund for Neuroscience, the Alfred P. Sloan Foundation and the Brain Research Foundation. Gang Huang, formerly a research technician in the lab, also contributed to the research.

Contact: John Easton – University of Chicago Medicine

Source: University of Chicago Medicine press release

Image Source: The brain image with the intraparietal sulcus highlighted is available in the public domain.

Original Research: Abstract for “Independent Category and Spatial Encoding in Parietal Cortex” by Chris A. Rishel, Gang Huang and David J. Freedman in Neuron. Published online March 6, 2013. doi: 10.1016/j.neuron.2013.01.007