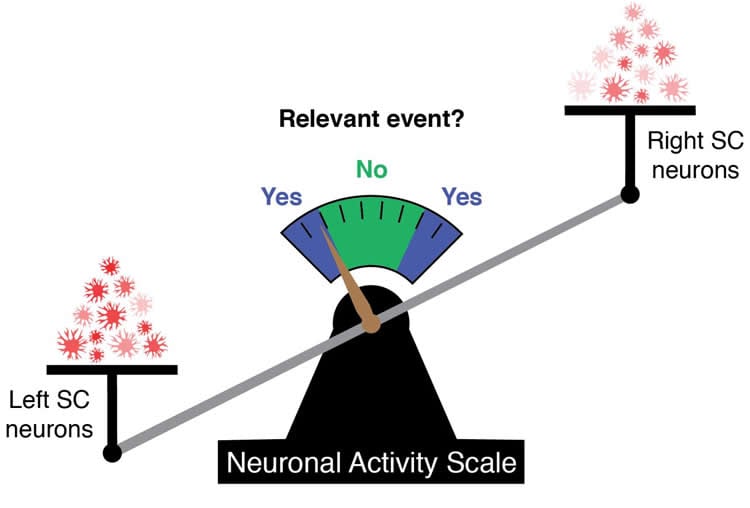

Summary: Differences in activity between the left and right superior colliculi help researchers predict whether an animal was seeing an event.

Source: NIH/NEI.

Scientists at the National Eye Institute (NEI) have found that neurons in the superior colliculus, an ancient midbrain structure found in all vertebrates, are key players in allowing us to detect visual objects and events. This structure doesn’t help us recognize what the specific object or event is; instead, it’s the part of the brain that decides something is there at all. By comparing brain activity recorded from the right and left superior colliculi at the same time, the researchers were able to predict whether an animal was seeing an event. The findings were published today in the journal Nature Neuroscience. NEI is part of the National Institutes of Health.

Perceiving objects in our environment requires not just the eyes, but also the brain’s ability to filter information, classify it, and then understand or decide that an object is actually there. Each step is handled by different parts of the brain, from the eye’s light-sensing retina to the visual cortex and the superior colliculus. For events or objects that are difficult to see (a gray chair in a dark room, for example), small changes in the amount of visual information available and recorded in the brain can be the difference between tripping over the chair and successfully avoiding it. This new study shows that this process – deciding that an object is present or that an event has occurred in the visual field – is handled by the superior colliculus.

“While we’ve known for a long time that the superior colliculus is involved in perception, we really wanted to know exactly how this part of the brain controls the perceptual choice, and find a way to describe that mechanism with a mathematical model,” said James Herman, Ph.D., lead author of the study.

“The superior colliculus plays a foundational role in our ability to process and detect events,” said Richard Krauzlis, Ph.D., principal investigator in the Laboratory of Sensorimotor Research at NEI and senior author of the study. “This new work not only shows that a specific population of neurons directly cause a behavior but also that a commonly used mathematical model can predict behavior based on these neurons.”

The process of deciding to take an action (a behavior, like avoiding a chair) based on information received from the senses (like visual information) is known as “perceptual decision-making.” Most research into perceptual decision-making – in humans, non-human primates, or other animals – uses mathematical models to describe a relationship between a stimulus shown to an animal (like moving dots, changes in color, or appearance of objects) and the animal’s behavior. But because visual information processing in the brain is highly complex, scientists have struggled to demonstrate that these mathematical models accurately mimic a biological process happening in the brain during decision-making.

In their new study, Krauzlis, Herman, and colleagues used an “accumulator threshold model” to study how neuronal activity in the superior colliculus relates to behavior. This commonly used model assumes that information builds up over time until it hits a certain threshold, whereupon a person or animal makes a decision. (For example, as you get closer to the gray chair in the dark room, more details about shadows or edges might become available, slowly convincing you that an object is there.) Because individual neurons can slowly build up information in this way, Herman and Krauzlis elected to use neuronal signals (instead of the experimental stimulus) as the input for their behavior-prediction model.
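The build-up-to-threshold idea can be illustrated with a few lines of Python. This is a minimal sketch of a generic accumulator, not the model fitted in the study: the function name `first_crossing`, the evidence samples, and the threshold value are all hypothetical and chosen only for illustration.

```python
from typing import Optional, Sequence


def first_crossing(evidence: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first sample at which the running sum of
    evidence reaches the threshold, or None if it never does.

    Hypothetical helper for illustration; not taken from the study.
    """
    total = 0.0
    for i, sample in enumerate(evidence):
        total += sample          # information builds up over time
        if total >= threshold:   # a decision is made at the first crossing
            return i
    return None


# Made-up evidence samples: the running sum is 0.2, 0.5, 1.1, 1.2
decision_step = first_crossing([0.2, 0.3, 0.6, 0.1], threshold=1.0)
```

In this toy case the decision is made at the third sample (index 2), the first point at which the accumulated evidence reaches the threshold. Weaker evidence that never reaches the threshold produces no decision at all.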

The researchers trained two monkeys to perform a perceptual decision task: while holding down a lever, the monkeys would respond to subtle color changes in their peripheral vision. A cue told the monkey which side to pay attention to. If the cued side changed color, the monkey should release the lever, but if the non-cued (foil) side changed color, the monkey should ignore it.

During the task, the researchers recorded signals from the superior colliculi on both the right and left sides of the monkey’s brain at the same time. While both sides of the brain activated in response to color changes, the researchers discovered that if the difference in neuronal activity between the two sides reached a specific threshold (e.g., neurons in the right superior colliculus fired more strongly than the left), the monkey would release the lever.
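As a rough illustration of this differential rule, the sketch below compares two activity traces step by step and reports the first moment at which one side exceeds the other by a set amount. It is a simplification under stated assumptions: the function name `first_differential_crossing`, the firing-rate values, and the threshold are hypothetical, and the published model may combine the two signals differently.

```python
from typing import Optional, Sequence


def first_differential_crossing(
    right: Sequence[float], left: Sequence[float], threshold: float
) -> Optional[int]:
    """Return the first time step at which right-side activity exceeds
    left-side activity by at least `threshold`, or None if it never does.

    Hypothetical helper for illustration; not taken from the study.
    """
    for t, (r, l) in enumerate(zip(right, left)):
        if r - l >= threshold:
            return t
    return None


# Made-up firing rates: both sides respond, but the right side responds more.
right_sc = [5, 6, 9, 14]
left_sc = [5, 6, 7, 8]
release_step = first_differential_crossing(right_sc, left_sc, threshold=4)
```

Here both traces rise, but only the difference between them matters: it is 0, 0, 2, and 6 across the four steps, so the threshold of 4 is first reached at the last step (index 3).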

To find out if this differential neuron activation was causing the monkey’s behavior, the researchers directly altered the neuronal activation on only one side, either injecting a drug to slightly lower neuron activity or stimulating the neurons with a tiny electrical charge to raise their activity. When the neuronal signal was stronger on the side reacting to cued color changes, the monkeys were better at reporting cued changes and rejecting foil color changes. When the neuronal signal was lower on that side, the monkeys were poorer at reporting cued color changes. These alterations in behavior could be predicted by a simple computational adjustment to the threshold model.
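The article does not specify what that adjustment was. One way to picture the effect of such a manipulation, purely as an assumption, is to scale the activity on one side by a gain factor before applying the threshold. In the sketch below, the function `releases_lever`, the `cued_gain` parameter, and all numbers are hypothetical.

```python
from typing import Sequence


def releases_lever(
    cued_rates: Sequence[float],
    other_rates: Sequence[float],
    threshold: float,
    cued_gain: float = 1.0,
) -> bool:
    """Return True if gain-scaled activity on the cued side exceeds activity
    on the other side by at least `threshold` at any time step.

    `cued_gain` is an assumed stand-in for a one-sided manipulation:
    below 1.0 mimics lowered activity, above 1.0 mimics raised activity.
    """
    return any(
        cued_gain * c - o >= threshold
        for c, o in zip(cued_rates, other_rates)
    )


# Made-up firing rates following a cued color change.
cued = [10, 14, 18]
other = [10, 11, 12]

baseline = releases_lever(cued, other, threshold=5)                  # difference peaks at 6
lowered = releases_lever(cued, other, threshold=5, cued_gain=0.8)    # difference peaks near 2.4
raised = releases_lever(cued, other, threshold=8, cued_gain=1.2)     # difference peaks near 9.6
```

With these toy numbers, the unperturbed signal crosses a threshold of 5, lowering the cued-side gain to 0.8 causes the change to be missed, and raising the gain to 1.2 lets the signal cross even a stricter threshold of 8.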

One reason for using the color change stimulus, Krauzlis said, was that the superior colliculus doesn’t itself process this information. Instead, other parts of the brain process the changing color, and transmit that information to the superior colliculus in order for a decision to be made. In essence, this simple differential threshold of neuronal activity in the superior colliculus triggers the animal to report the presence of something in the visual field.

“It’s surprising to discover that despite the sophisticated visual machinery that we have in the cerebral cortex, these evolutionarily older structures are still critical for the visual perception that we’re used to,” said Herman.

“For this sort of task, where you’re not asked to say exactly what was the thing, but you’re just saying, did it happen, then this activity in the superior colliculus seems to be both necessary and sufficient,” said Krauzlis.

While the model accurately predicted behavior based on activity in the superior colliculus, the pattern of activation of neurons in the superior colliculus and the signal threshold itself were unique to each monkey, meaning that each monkey had its own behavioral signal code.

Funding: NIH/National Eye Institute funded this study.

Source: Lesley Earl – NIH/NEI

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to James Herman, Ph.D., National Eye Institute.

Original Research: Abstract for “Midbrain activity can explain perceptual decisions during an attention task” by James P. Herman, Leor N. Katz & Richard J. Krauzlis in Nature Neuroscience. Published November 26, 2018.

doi:10.1038/s41593-018-0271-5


Abstract

Midbrain activity can explain perceptual decisions during an attention task

We introduce a decision model that interprets the relative levels of moment-by-moment spiking activity from the right and left superior colliculus to distinguish relevant from irrelevant stimulus events. The model explains detection performance in a covert attention task, both in intact animals and when performance is perturbed by causal manipulations. This provides a specific example of how midbrain activity could support perceptual judgments during attention tasks.