Summary: A new deep learning algorithm can reliably determine what visual stimuli neurons in the visual cortex respond best to.

Source: Harvard

Why do our eyes tend to be drawn more to some shapes, colors, and silhouettes than others?

For more than half a century, researchers have known that neurons in the brain’s visual system respond unequally to different images, a feature that is critical for the ability to recognize, understand, and interpret the multitude of visual cues surrounding us. For example, specific populations of visual neurons in an area of the brain known as the inferior temporal cortex fire more when people or other primates (animals with highly attuned visual systems) look at faces, places, objects, or text. But exactly what these neurons are responding to has remained unclear.

Now a small study in macaques led by investigators in the Blavatnik Institute at Harvard Medical School has generated some valuable clues based on an artificial intelligence system that can reliably determine what neurons in the brain’s visual cortex prefer to see.

A report of the team’s work was published today in Cell.

The vast majority of experiments to date that have attempted to measure neuronal preferences used real images. But real images carry an inherent bias: they are limited to stimuli available in the real world and to the images that researchers choose to test. The AI-based program overcomes this hurdle by creating synthetic images tailored to the preference of each neuron.

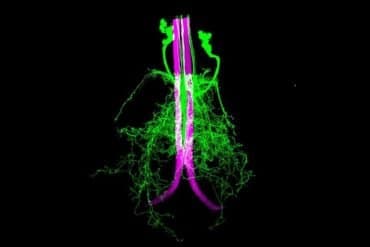

Will Xiao, a graduate student in the Department of Neurobiology at Harvard Medical School, designed a computer program that pairs a generative deep neural network with a genetic algorithm to create self-adjusting images based on neural responses recorded from six macaque monkeys. To do so, he and his colleagues measured the firing rates of individual visual neurons in the animals’ brains as they watched images on a computer screen.

Over the course of a few hours, the animals were shown images generated by Xiao’s program, each flashed for 100 milliseconds. The images started out as random grayscale textures. Based on how strongly the monitored neurons fired, the program gradually introduced shapes and colors, morphing the images over time into a final image that fully embodied a neuron’s preference. Because each of these images is synthetic, Xiao said, the approach avoids the bias that researchers have traditionally introduced by using only natural images.

“At the end of each experiment,” he said, “this program generates a super-stimulus for these cells.”
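The closed loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the study’s code: `generate_image` is a hypothetical stand-in for the pretrained generative network, `simulated_neuron` replaces the recorded firing rate with a made-up function, and the population size, mutation strength, and crossover scheme are illustrative choices. What it shows is the logic of the search: score each image by the neuron’s response, keep the best latent codes, and breed mutated offspring from them.

```python
import math
import random

# Hypothetical stand-ins: the real study used a pretrained deep generative
# network and recorded firing rates; here both are toy functions.
LATENT_DIM = 8

def generate_image(code):
    """Toy 'generator': squash each latent value into a pixel in [0, 1]."""
    return [1.0 / (1.0 + math.exp(-z)) for z in code]

def simulated_neuron(image, preferred):
    """Toy neuron: fires most when the image matches its hidden preference."""
    dist2 = sum((p - q) ** 2 for p, q in zip(image, preferred))
    return 100.0 * math.exp(-dist2)  # peak response of 100 (arbitrary units)

def evolve(fire, generations=100, pop_size=20, n_elite=4, sigma=0.5, seed=0):
    """Genetic algorithm over latent codes, scored by the neuron's response."""
    rng = random.Random(seed)
    # Start from random latent codes (random textures in the real experiment).
    population = [[rng.gauss(0, 1) for _ in range(LATENT_DIM)]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # "Show" each image and rank codes by how strongly the neuron fires.
        scored = sorted(population, key=lambda c: fire(generate_image(c)),
                        reverse=True)
        history.append(fire(generate_image(scored[0])))
        elites = scored[:n_elite]          # best codes survive unchanged
        parents = scored[: pop_size // 2]  # top half may reproduce
        children = []
        while len(children) < pop_size - n_elite:
            a, b = rng.sample(parents, 2)
            # Uniform crossover, then Gaussian mutation of every latent value.
            child = [x if rng.random() < 0.5 else y for x, y in zip(a, b)]
            child = [x + rng.gauss(0, sigma) for x in child]
            children.append(child)
        population = elites + children
    best = max(population, key=lambda c: fire(generate_image(c)))
    return best, history

# Usage: evolve a "super-stimulus" for one simulated neuron.
pref_rng = random.Random(42)
preferred = [pref_rng.random() for _ in range(LATENT_DIM)]
neuron = lambda img: simulated_neuron(img, preferred)
best, history = evolve(neuron)
print(round(history[0], 1), round(history[-1], 1))
```

Because the top codes are carried over unchanged each generation and the toy neuron is deterministic, the best response in this sketch can never decrease; with real, noisy firing rates that guarantee would not hold.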

The results were consistent across separate runs, explained senior investigator Margaret Livingstone: the images a given neuron evolved through the program were not identical, but they were remarkably similar.

Some of these images were in line with what Livingstone, the Takeda Professor of Neurobiology at HMS, and her colleagues expected. For example, a neuron that they suspected might respond to faces evolved round pink images with two big black dots akin to eyes. Others were more surprising. A neuron in one of the animals consistently generated images that looked like the body of a monkey, but with a red splotch near its neck. The researchers eventually realized that this monkey was housed near another that always wore a red collar.

“We think this neuron responded preferentially not just to monkey bodies but to a specific monkey,” Livingstone said.

Not every final image looked like something recognizable, Xiao added. One monkey’s neuron evolved a small black square. Another evolved an amorphous black shape with orange below.

Livingstone notes that research from her lab and others has shown that the responses of these neurons are not innate; instead, they are learned through consistent exposure to visual stimuli over time. It is not yet known when during development this ability to recognize and fire preferentially to certain images arises, Livingstone said. She and her colleagues plan to investigate this question in future studies.

Learning how the visual system responds to images could be key to better understanding the basic mechanisms that drive cognitive issues ranging from learning disabilities to autism spectrum disorders, which are often marked by impairments in a child’s ability to recognize faces and process facial cues.

“This malfunction in the visual processing apparatus of the brain can interfere with a child’s ability to connect, communicate, and interpret basic cues,” said Livingstone. “By studying those cells that respond preferentially to faces, for example, we could uncover clues to how social development takes place and what might sometimes go awry.”

Funding: The research was funded by the National Institutes of Health and the National Science Foundation.

Media Contacts:

Christy Brownlee – Harvard

Image Source:

The image is adapted from the Harvard news release.

Original Research: Open access

“Evolving Images for Visual Neurons Using a Deep Generative Network Reveals Coding Principles and Neuronal Preferences”. Carlos R. Ponce, Will Xiao, Peter F. Schade, Till S. Hartmann, Gabriel Kreiman, Margaret S. Livingstone.

Cell. doi:10.1016/j.cell.2019.04.005

Abstract

Highlights

• A generative deep neural network and a genetic algorithm evolved images guided by neuronal firing

• Evolved images maximized neuronal firing in alert macaque visual cortex

• Evolved images activated neurons more than large numbers of natural images

• Similarity to evolved images predicts response of neurons to novel images

Summary

What specific features should visual neurons encode, given the infinity of real-world images and the limited number of neurons available to represent them? We investigated neuronal selectivity in monkey inferotemporal cortex via the vast hypothesis space of a generative deep neural network, avoiding assumptions about features or semantic categories. A genetic algorithm searched this space for stimuli that maximized neuronal firing. This led to the evolution of rich synthetic images of objects with complex combinations of shapes, colors, and textures, sometimes resembling animals or familiar people, other times revealing novel patterns that did not map to any clear semantic category. These results expand our conception of the dictionary of features encoded in the cortex, and the approach can potentially reveal the internal representations of any system whose input can be captured by a generative model.