Summary: Combining artificial intelligence technology with raw data from brain activity, researchers accelerate the understanding of how neural activity impacts specific behaviors.

Source: UCL

An artificial neural network, a form of artificial intelligence (AI), designed by an international team involving UCL can translate raw data from brain activity, paving the way for new discoveries and closer integration between technology and the brain.

The new method could accelerate discoveries of how brain activities relate to behaviours.

The study, published today in eLife, was co-led by the Kavli Institute for Systems Neuroscience in Trondheim and the Max Planck Institute for Human Cognitive and Brain Sciences in Leipzig, and funded by Wellcome and the European Research Council. It shows that a convolutional neural network, a specific type of deep learning algorithm, can decode many different behaviours and stimuli from a wide variety of brain regions in different species, including humans.

Lead researcher, Markus Frey (Kavli Institute for Systems Neuroscience), said: “Neuroscientists have been able to record larger and larger datasets from the brain but understanding the information contained in that data – reading the neural code – is still a hard problem. In most cases we don’t know what messages are being transmitted.

“We wanted to develop an automatic method to analyse raw neural data of many different types, circumventing the need to manually decipher them.”

They tested the network, called DeepInsight, on neural signals from rats exploring an open arena and found it was able to precisely predict the position, head direction, and running speed of the animals. Even without manual processing, the results were more accurate than those obtained with conventional analyses.

Senior author, Professor Caswell Barry (UCL Cell & Developmental Biology), said: “Existing methods miss a lot of potential information in neural recordings because we can only decode the elements that we already understand. Our network is able to access much more of the neural code and in doing so teaches us to read some of those other elements.

“We are able to decode neural data more accurately than before, but the real advance is that the network is not constrained by existing knowledge.”

The team found that their model could identify new aspects of the neural code. As a demonstration, it detected a previously unrecognised representation of head direction, encoded by interneurons in a region of the hippocampus that is among the first to show functional defects in people with Alzheimer’s disease.

Moreover, they show that the same network can predict behaviours from different types of recording across brain areas. It can also infer hand movements in human participants, which the team established by testing the network on a pre-existing dataset of brain activity recorded in people.

Co-author Professor Christian Doeller (Kavli Institute for Systems Neuroscience and Max Planck Institute for Human Cognitive and Brain Sciences) said: “This approach could allow us in the future to predict more accurately higher-level cognitive processes in humans, such as reasoning and problem solving.”

Markus Frey added: “Our framework enables researchers to get a rapid automated analysis of their unprocessed neural data, saving time which can be spent on only the most promising hypotheses, using more conventional methods.”

About this AI research news

Author: Chris Lane

Contact: Chris Lane – UCL

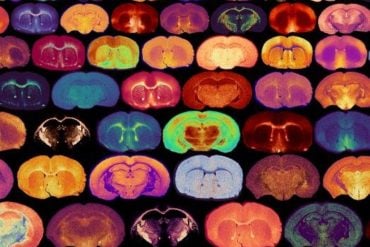

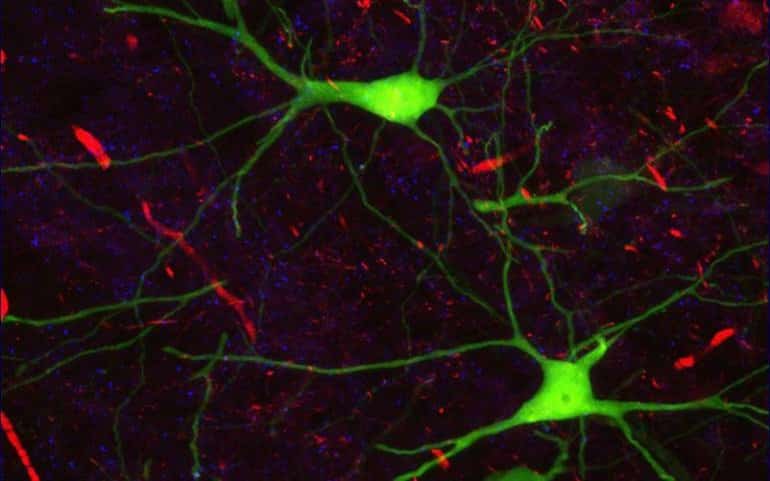

Image: The image is credited to UCL

Original Research: Open access.

“Interpreting wide-band neural activity using convolutional neural networks” by Markus Frey et al. eLife

Abstract

Rapid progress in technologies such as calcium imaging and electrophysiology has seen a dramatic increase in the size and extent of neural recordings. Even so, interpretation of this data requires considerable knowledge about the nature of the representation and often depends on manual operations.

Decoding provides a means to infer the information content of such recordings but typically requires highly processed data and prior knowledge of the encoding scheme.

Here, we developed a deep-learning framework able to decode sensory and behavioral variables directly from wide-band neural data. The network requires little user input and generalizes across stimuli, behaviors, brain regions, and recording techniques.
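To make the idea of decoding a variable directly from multi-channel raw samples more concrete, here is a minimal sketch of a convolutional forward pass in plain Python. It is not the DeepInsight architecture described in the study: the function names (`conv1d`, `relu`, `decode`), the kernel and readout weights, and the toy signal are all hypothetical, and training is omitted entirely.

```python
# Illustrative sketch only: NOT the model described in the study.
# All names, weights, and the toy signal below are assumptions for illustration.

def conv1d(signal, kernel):
    """Valid 1D cross-correlation of a single-channel signal with a kernel."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def relu(values):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in values]


def decode(channels, kernels, readout, bias=0.0):
    """Map multi-channel raw samples to one scalar behavioral estimate.

    channels: list of per-channel sample lists (e.g. one per electrode)
    kernels:  one convolution kernel per channel (hypothetical weights)
    readout:  one linear weight per channel, applied after pooling
    """
    pooled = []
    for signal, kernel in zip(channels, kernels):
        feature_map = relu(conv1d(signal, kernel))
        # Global average pooling collapses the time axis to one number.
        pooled.append(sum(feature_map) / len(feature_map))
    return sum(w * p for w, p in zip(readout, pooled)) + bias


# Toy input: two channels of raw samples (made-up values).
channels = [
    [0.0, 1.0, 0.0, -1.0, 0.0, 1.0],
    [1.0, 1.0, 1.0, 1.0, 1.0, 1.0],
]
kernels = [[1.0, -1.0], [0.5, 0.5]]
readout = [2.0, 1.0]

estimate = decode(channels, kernels, readout)
print(estimate)
```

In a real decoder the kernels and readout weights would be learned from paired recordings and behavioral labels rather than set by hand; the point here is only that no spike sorting or other manual preprocessing sits between the raw samples and the output.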

Once trained, it can be analyzed to determine elements of the neural code that are informative about a given variable. We validated this approach using electrophysiological and calcium-imaging data from rodent auditory cortex and hippocampus as well as human electrocorticography (ECoG) data.
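One common way to ask which inputs a trained decoder relies on is permutation (shuffling) importance: shuffle a single input channel across samples and measure how much the decoding error grows. The text above does not say which analysis was used, so the sketch below is an assumption for illustration; `model` is a hypothetical stand-in for a trained decoder, and the data are made up.

```python
import random

# Illustrative sketch only: permutation importance is assumed here,
# not confirmed as the analysis used in the study.


def mean_abs_error(model, inputs, targets):
    """Average absolute difference between model outputs and targets."""
    return sum(abs(model(x) - t) for x, t in zip(inputs, targets)) / len(targets)


def permutation_importance(model, inputs, targets, seed=0):
    """Return, per channel, the rise in error when that channel is shuffled."""
    rng = random.Random(seed)
    baseline = mean_abs_error(model, inputs, targets)
    scores = []
    for c in range(len(inputs[0])):
        # Shuffle channel c across samples, leaving other channels intact.
        column = [x[c] for x in inputs]
        rng.shuffle(column)
        shuffled = [x[:c] + [v] + x[c + 1:] for x, v in zip(inputs, column)]
        scores.append(mean_abs_error(model, shuffled, targets) - baseline)
    return scores


# Hypothetical stand-in for a trained decoder: it reads channel 0 only.
def model(x):
    return 3.0 * x[0]


# Made-up samples with two channels each; targets match the model exactly.
inputs = [[float(i), float((i * 7) % 5)] for i in range(20)]
targets = [model(x) for x in inputs]

scores = permutation_importance(model, inputs, targets)
print(scores)
```

A channel the decoder ignores leaves the error unchanged when shuffled, while a channel it depends on produces a clear increase, which is the sense in which such an analysis can point to informative elements of the input.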

We show successful decoding of finger movement, auditory stimuli, and spatial behaviors – including a novel representation of head direction – from raw neural activity.