Stage magicians are not the only ones who can distract the eye: a new cognitive psychology experiment demonstrates how all human beings have a built-in ability to stop paying attention to objects that are right in front of them.

Perception experts have long known that we see much less of the world than we think we do. A person creates a mental model of their surroundings by stitching together scraps of visual information gleaned while shifting attention from place to place. Counterintuitively, the very process that creates the illusion of a complete picture relies on filtering out most of what’s out there.

In a paper published in the journal Attention, Perception, & Psychophysics, a team of U of T researchers shows that people have more “top-down” control over what they don’t notice than many scientists previously believed.

“The visual system really cares about objects,” says postdoctoral fellow J. Eric T. Taylor, the paper’s lead author. “If I move around a room, the locations of all the objects (chairs, tables, doors, walls, etc.) change on my retina, but my mental representation of the room stays the same.”

Objects play such a fundamental role in how we focus our attention that many perception researchers believe we are “addicted” to them; we couldn’t stop paying attention to objects if we tried. The visual brain guides attention largely by selecting objects — and this process is widely believed to be automatic.

“I had an inkling that object-based attention cues require a little more will on the observer’s part,” says Taylor. “I designed an experiment to determine whether you can ‘erase’ object-based attention shifting.”

Taylor put a new twist on an old and highly influential test known as a “two-rectangle experiment.” The original experiment was instrumental in demonstrating just how deeply objects are ingrained in how we see the world.

In the original experiment, test subjects stare at a screen with two skinny rectangles. A brief flash of light draws their attention to one end of one rectangle — say the top end of the left rectangle. Then, a “target” appears, either in the same place as the flash, at the other end of the same rectangle, or at one of the ends of the other rectangle.

Observers are consistently faster at detecting the target when it appears at the opposite end of the cued rectangle than when it appears at the top of the other rectangle, even though those two points are exactly the same distance from the original flash of light.

The widely accepted conclusion was that the human brain is wired to use objects like these rectangles to focus attention. Described variously as “bottom-up” control or as “part of our lizard brain,” object-based attention cues seemed to evoke an involuntary, uncontrolled response.

Taylor and his colleagues added a new element: observers went through similar exercises, but they were instructed to hunt for targets of a specific colour that either matched or contrasted with the colour of the rectangles themselves.

“They activate a ‘control setting’ for, say, green, which is a very top-down mental activity,” says Taylor. “We found that when the objects matched the target colour, people used them to help direct their attention. But when the objects were not the target colour, people no longer used them: they became invisible.”

Observers are aware of the rectangles on the screen, but when they are seeking a green target among red shapes, those objects no longer affect how quickly they find it. In everyday life we continually set up such top-down filters, whether heeding a “Watch for children” sign or scanning a crowd for a familiar face.

“This result tells us that one of the ways we move attention around is actually highly directed rather than automatic,” Taylor says. “We can’t say exactly what we’re missing, but whatever is and is not getting through the filter is not as automatic as we thought.”

The study’s co-authors are Jason Rajsic and Jay Pratt.

Funding: This research was funded by the Natural Sciences and Engineering Research Council of Canada.

Source: Eric Taylor – University of Toronto

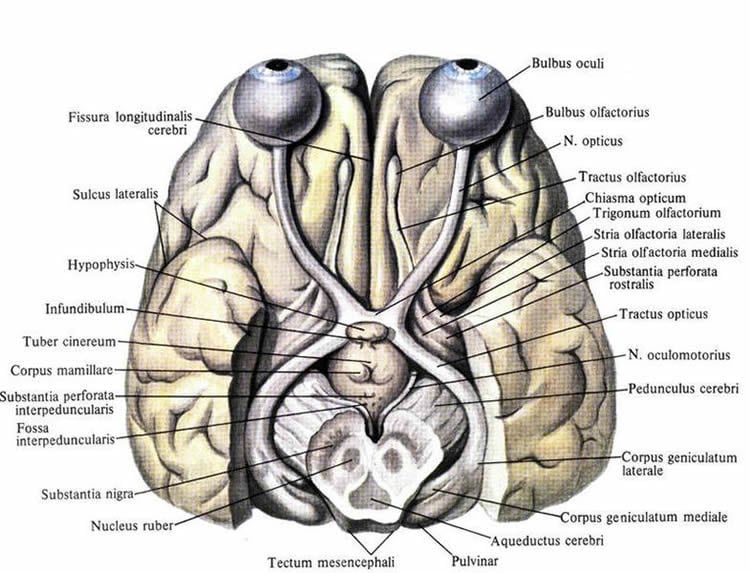

Image Source: The image is in the public domain.

Original Research: Abstract for “Object-based selection is contingent on attentional control settings” by J. Eric T. Taylor, Jason Rajsic, and Jay Pratt in Attention, Perception, & Psychophysics. Published online February 22, 2016. doi:10.3758/s13414-016-1074-y

Abstract

Object-based selection is contingent on attentional control settings

The visual system allocates attention in object-based and location-based modes. However, the question of when attention selects objects and when it selects locations remains poorly understood. In this article, we present variations on two classic paradigms from the object-based attention literature, in which object-based effects are observed only when the object feature matches the task goal of the observer. In Experiment 1, covert orienting was influenced by task-irrelevant rectangles, but only when the target color matched the rectangle color. In Experiment 2, the region of attentional focus was adjusted to the size of task-irrelevant objects, but only when the target color matched the object color. In Experiment 3, we ruled out the possibility that contingent object-based selection is caused by color-based intratrial priming. These demonstrations of contingent object-based attention suggest that object-based selection is neither mandatory nor default, and that object-based effects are contingent on simple, top-down attentional control settings.