Understanding how the brain processes the deluge of information about the outside world has long been a daunting challenge. In a recent study in the journal Cell Reports, Michael Higley and Jessica Cardin of Yale’s Department of Neuroscience provide some clues to how cells in the visual cortex direct sensory information to different targets throughout the brain.

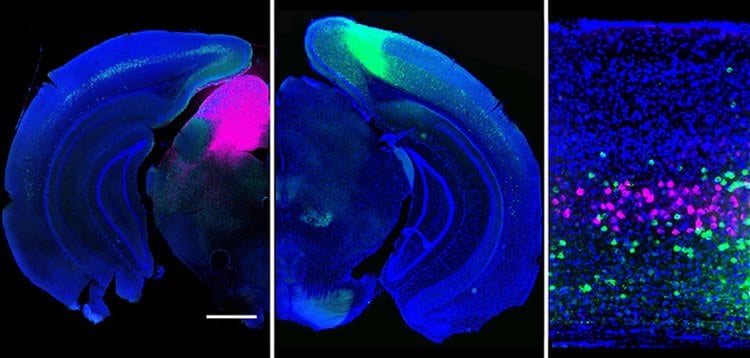

By imaging activity in the mouse brain, the researchers illustrated how neuron types (fluorescently tagged magenta and green) that project to different areas of the brain extract distinct features from a visual scene.

“These results demonstrate how the brain processes multiple sensory inputs in parallel,” Higley said, noting that the findings help reveal the normal flow of information in the brain, opening new avenues for understanding how perturbations of these systems might contribute to abnormal behavior.

Source: Bill Hathaway – Yale

Image Source: The image is adapted from the Yale press release.

Original Research: Full open access research for “Projection-Specific Visual Feature Encoding by Layer 5 Cortical Subnetworks” by Gyorgy Lur, Martin A. Vinck, Lan Tang, Jessica A. Cardin, and Michael J. Higley in Cell Reports. Published online March 10, 2016. doi:10.1016/j.celrep.2016.02.050

Abstract

Projection-Specific Visual Feature Encoding by Layer 5 Cortical Subnetworks

Highlights

• Projection targets define non-overlapping cell populations in L5 of mouse V1

• Corticotectal neurons are most broadly tuned for orientation and spatial frequency

• Corticostriatal and corticocortical neurons form correlated subnetworks in L5

Summary

Primary neocortical sensory areas act as central hubs, distributing afferent information to numerous cortical and subcortical structures. However, it remains unclear whether each downstream target receives a distinct version of sensory information. We used in vivo calcium imaging combined with retrograde tracing to monitor visual response properties of three distinct subpopulations of projection neurons in primary visual cortex. Although there is overlap across the groups, on average, corticotectal (CT) cells exhibit lower contrast thresholds and broader tuning for orientation and spatial frequency in comparison to corticostriatal (CS) cells, whereas corticocortical (CC) cells have intermediate properties. Noise correlational analyses support the hypothesis that CT cells integrate information across diverse layer 5 populations, whereas CS and CC cells form more selectively interconnected groups. Overall, our findings demonstrate the existence of functional subnetworks within layer 5 that may differentially route visual information to behaviorally relevant downstream targets.
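For readers unfamiliar with the term, a noise correlation measures how strongly two cells' trial-to-trial fluctuations co-vary once the average response to each stimulus has been subtracted, so shared variability is separated from shared tuning. The sketch below illustrates that general idea in plain Python. It is not the authors' analysis pipeline: the function names (`pearson`, `noise_residuals`, `noise_correlation`) and the data layout (one response value per trial, with a parallel list of stimulus labels) are assumptions made for illustration.

```python
import math
from collections import defaultdict


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def noise_residuals(responses, stimulus_ids):
    """Subtract each stimulus's mean response from the trials of that stimulus.

    responses:    one response value per trial for a single cell (hypothetical layout)
    stimulus_ids: the stimulus label shown on each trial
    """
    by_stimulus = defaultdict(list)
    for response, stim in zip(responses, stimulus_ids):
        by_stimulus[stim].append(response)
    means = {stim: sum(vals) / len(vals) for stim, vals in by_stimulus.items()}
    return [response - means[stim] for response, stim in zip(responses, stimulus_ids)]


def noise_correlation(cell_a, cell_b, stimulus_ids):
    """Correlation of two cells' trial-to-trial fluctuations around their stimulus means."""
    return pearson(
        noise_residuals(cell_a, stimulus_ids),
        noise_residuals(cell_b, stimulus_ids),
    )
```

In this toy framing, two cells that prefer opposite stimuli can still have a high noise correlation if their responses rise and fall together from trial to trial, which is the kind of signature used to infer shared input or interconnection.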
