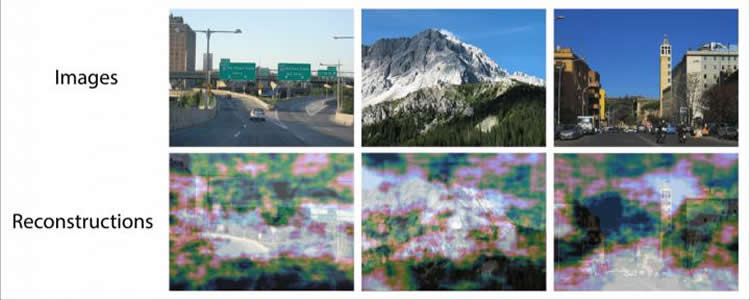

Summary: Combining neuroimaging data with deep convolutional neural networks, researchers were able to predict where people would direct their attention and gaze when viewing images of natural scenes.

Source: Yale.

Using brain measurements from functional MRI (fMRI), Yale researchers predicted how people’s eyes move when viewing natural scenes. The researchers said this advance in understanding the human visual system could improve a host of artificial intelligence efforts, such as the development of driverless cars.

“We are visual beings and knowing how the brain rapidly computes where to look is fundamentally important,” said Yale’s Marvin Chun, Richard M. Colgate Professor of Psychology, professor of neuroscience and co-author of new research published Dec. 4 in the journal Nature Communications.

Eye movements have been studied extensively, and researchers can predict with some certainty which elements of an environment will draw a person’s gaze. What has remained unclear is how the brain orchestrates this ability, which is fundamental to survival.

In a previous example of “mind reading,” Chun’s group reconstructed images of faces that people viewed while being scanned in an MRI machine, using only their brain imaging data.

In the new paper, Chun and lead author Thomas P. O’Connell took a similar approach and showed that by analyzing brain responses to complex, natural scenes, they could predict where people would direct their attention and gaze. This was made possible by aligning the brain data with the activity of deep convolutional neural networks, models that are widely used in artificial intelligence (AI).

“The work represents a perfect marriage of neuroscience and data science,” Chun said.

The findings have many potential applications, such as testing competing artificial intelligence systems that categorize images and guide driverless cars.

“People can see better than AI systems can,” Chun said. “Understanding how the brain performs its complex calculations is an ultimate goal of neuroscience and benefits AI efforts.”

Source: Bill Hathaway – Yale

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to Chun Lab Yale.

Original Research: Open access research for “Predicting eye movement patterns from fMRI responses to natural scenes” by Thomas P. O’Connell & Marvin M. Chun in Nature Communications. Published December 4, 2018.

doi:10.1038/s41467-018-07471-9

MLA: Yale. “Mountain Splendor? Researchers Know Where Your Eyes Will Look.” NeuroscienceNews. NeuroscienceNews, 4 December 2018. <https://neurosciencenews.com/vision-ai-look-120207/>.

APA: Yale. (2018, December 4). Mountain Splendor? Researchers Know Where Your Eyes Will Look. NeuroscienceNews. Retrieved December 4, 2018 from https://neurosciencenews.com/vision-ai-look-120207/

Chicago: Yale. “Mountain Splendor? Researchers Know Where Your Eyes Will Look.” https://neurosciencenews.com/vision-ai-look-120207/ (accessed December 4, 2018).

Abstract

Predicting eye movement patterns from fMRI responses to natural scenes

Eye tracking has long been used to measure overt spatial attention, and computational models of spatial attention reliably predict eye movements to natural images. However, researchers lack techniques to noninvasively access spatial representations in the human brain that guide eye movements. Here, we use functional magnetic resonance imaging (fMRI) to predict eye movement patterns from reconstructed spatial representations evoked by natural scenes. First, we reconstruct fixation maps to directly predict eye movement patterns from fMRI activity. Next, we use a model-based decoding pipeline that aligns fMRI activity to deep convolutional neural network activity to reconstruct spatial priority maps and predict eye movements in a zero-shot fashion. We predict human eye movement patterns from fMRI responses to natural scenes, provide evidence that visual representations of scenes and objects map onto neural representations that predict eye movements, and find a novel three-way link between brain activity, deep neural network models, and behavior.
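To make the model-based decoding idea in the abstract more concrete, here is a minimal Python sketch on synthetic data. It assumes a linear ridge-regression mapping from voxel responses to deep network activations and forms a spatial priority map by averaging the predicted activations across channels. Both choices, the helper names (`fit_ridge`, `to_priority_map`, `map_correlation`), and all data sizes are hypothetical illustrations, not the authors' published pipeline.

```python
import numpy as np

# Illustrative sketch only: the ridge mapping, channel averaging, and all
# data below are assumptions, not the method from the paper.


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge weights W minimizing ||XW - Y||^2 + alpha * ||W||^2."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


def to_priority_map(flat_features, n_channels, height, width):
    """Collapse flattened DCNN activations (channels x height x width) into a
    single height x width map by averaging across channels (assumed choice)."""
    return flat_features.reshape(n_channels, height, width).mean(axis=0)


def map_correlation(a, b):
    """Pearson correlation between two spatial maps after flattening."""
    return float(np.corrcoef(a.ravel(), b.ravel())[0, 1])


# --- Synthetic stand-ins for real data (all sizes are hypothetical) ---
rng = np.random.default_rng(0)
n_train, n_voxels = 200, 50
n_channels, height, width = 4, 8, 8
n_features = n_channels * height * width

# Simulated fMRI responses (images x voxels) and a hidden linear link to
# simulated DCNN layer activations (images x channels*height*width).
true_link = rng.normal(size=(n_voxels, n_features))
voxels_train = rng.normal(size=(n_train, n_voxels))
dcnn_train = voxels_train @ true_link + 0.1 * rng.normal(size=(n_train, n_features))

# Step 1: learn a voxel -> DCNN-feature mapping on training images only.
weights = fit_ridge(voxels_train, dcnn_train, alpha=1.0)

# Step 2 ("zero-shot"): for a new image, predict DCNN activations from its
# fMRI response and collapse them into a spatial priority map. No eye-tracking
# data is used to fit the mapping.
voxels_test = rng.normal(size=(1, n_voxels))
predicted_map = to_priority_map(voxels_test @ weights, n_channels, height, width)

# Simulated fixation map for the same image: the true priority map plus noise.
true_map = to_priority_map(voxels_test @ true_link, n_channels, height, width)
fixation_map = true_map + 0.5 * rng.normal(size=(height, width))

score = map_correlation(predicted_map, fixation_map)
print(f"priority-map vs. fixation-map correlation: {score:.3f}")
```

The same `fit_ridge` helper could, in principle, map voxels straight to flattened fixation-map pixels, loosely mirroring the abstract's first, direct reconstruction approach. That variant would need eye-tracking data for training, so its predictions would no longer be zero-shot.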