Summary: Brain-to-text system could help people with speech difficulties to communicate, researchers report.

Source: Frontiers.

Recent research shows that a brain-to-text device can decode speech from brain signals.

Ever wonder what it would be like if a device could decode your thoughts into actual speech or written words? While this might enhance the capabilities of already existing speech interfaces with devices, it could be a potential game-changer for those with speech pathologies, and even more so for “locked-in” patients who lack any speech or motor function.

“So instead of saying ‘Siri, what is the weather like today’ or ‘Ok Google, where can I go for lunch?’ I just imagine saying these things,” explains Christian Herff, author of a review recently published in the journal Frontiers in Neuroscience.

While reading one’s thoughts might still belong to the realms of science fiction, scientists are already decoding speech from signals generated in our brains when we speak or listen to speech.

In their review, Herff and co-author Dr. Tanja Schultz compare the pros and cons of using various brain imaging techniques to capture neural signals from the brain and then decode them to text.

The technologies range from functional MRI and near-infrared imaging, which detect neural signals based on the metabolic activity of neurons, to methods such as EEG and magnetoencephalography (MEG), which detect the electromagnetic activity of neurons responding to speech. One method in particular, called electrocorticography or ECoG, showed promise in Herff’s study.

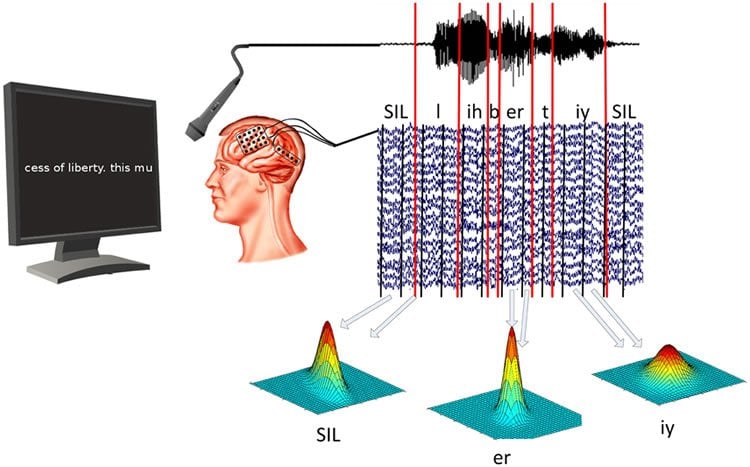

The study presents the Brain-to-text system, which was tested with epilepsy patients who already had electrode grids implanted for treatment of their condition. The participants read aloud texts presented on a screen in front of them while their brain activity was recorded. These recordings formed the basis of a database of neural signal patterns that could then be matched to speech elements, or “phones”.

When the researchers also included language and dictionary models in their algorithms, they were able to decode neural signals to text with a high degree of accuracy. “For the first time, we could show that brain activity can be decoded specifically enough to use ASR [automatic speech recognition] technology on brain signals,” says Herff. “However, the current need for implanted electrodes renders it far from usable in day-to-day life.”
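To make the role of the dictionary and language models more concrete, here is a minimal toy sketch in Python. It is not the researchers' implementation: the phone labels, the per-frame scores, the word list and the prior probabilities are all invented for illustration, and the function names `align_score` and `decode_word` are hypothetical. It assumes that some earlier step has already turned each short window of brain activity into a log-likelihood for every phone. The sketch then scores each dictionary word by its best alignment to those frames and adds a language-model prior.

```python
import math

NEG_INF = float("-inf")

# Hypothetical pronunciation dictionary: word -> phone sequence.
DICTIONARY = {"hi": ["HH", "AY"], "he": ["HH", "IY"], "eye": ["AY"]}

# Hypothetical unigram language model: word -> prior probability.
LANGUAGE_MODEL = {"hi": 0.5, "he": 0.3, "eye": 0.2}

# Invented per-frame phone log-likelihoods standing in for decoded neural data.
EXAMPLE_FRAMES = [
    {"HH": math.log(0.8), "AY": math.log(0.1), "IY": math.log(0.1)},
    {"HH": math.log(0.5), "AY": math.log(0.3), "IY": math.log(0.2)},
    {"HH": math.log(0.1), "AY": math.log(0.5), "IY": math.log(0.4)},
]


def align_score(frames, phones):
    """Best log-likelihood of a left-to-right alignment of frames to phones.

    Each phone must cover at least one frame, and phones cannot be skipped
    (a simple Viterbi pass over the phone sequence).
    """
    if not frames or not phones:
        return NEG_INF
    # best[j] = best score so far with the latest frame assigned to phones[j]
    best = [NEG_INF] * len(phones)
    best[0] = frames[0].get(phones[0], NEG_INF)
    for frame in frames[1:]:
        new = [NEG_INF] * len(phones)
        for j, phone in enumerate(phones):
            stay = best[j]                                # remain in the same phone
            advance = best[j - 1] if j > 0 else NEG_INF   # move on from the previous phone
            prev = max(stay, advance)
            if prev > NEG_INF:
                new[j] = prev + frame.get(phone, NEG_INF)
        best = new
    return best[-1]


def decode_word(frames, dictionary=DICTIONARY, language_model=LANGUAGE_MODEL):
    """Return the best word and all word scores (signal fit + log prior)."""
    scores = {
        word: align_score(frames, phones) + math.log(language_model[word])
        for word, phones in dictionary.items()
    }
    return max(scores, key=scores.get), scores


if __name__ == "__main__":
    word, _ = decode_word(EXAMPLE_FRAMES)
    print(word)
```

With these toy numbers, "hi" wins because it fits the frames well and has the highest prior. Passing a different `language_model` that strongly favours "he" flips the result, which is the sense in which a language model can steer ambiguous signal evidence toward likelier words.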

So, where does the field go from here to a functioning thought detection device? “A first milestone would be to actually decode imagined phrases from brain activity, but a lot of technical issues need to be solved for that,” concedes Herff.

Their study results, while exciting, are still only a preliminary step towards this type of brain-computer interface.

Source: Michelle Ponto – Frontiers

Image Source: This NeuroscienceNews.com image is credited to the researchers/Frontiers in Neuroscience.

Original Research: Full open access research for “Automatic Speech Recognition from Neural Signals: A Focused Review” by Christian Herff and Tanja Schultz in Frontiers in Neuroscience. Published online September 27 2016 doi:10.3389/fnins.2016.00429


Abstract

Automatic Speech Recognition from Neural Signals: A Focused Review

Speech interfaces have become widely accepted and are nowadays integrated in various real-life applications and devices. They have become a part of our daily life. However, speech interfaces presume the ability to produce intelligible speech, which might be impossible due to either loud environments, bothering bystanders or incapabilities to produce speech (e.g., patients suffering from locked-in syndrome). For these reasons it would be highly desirable to not speak but to simply envision oneself to say words or sentences. Interfaces based on imagined speech would enable fast and natural communication without the need for audible speech and would give a voice to otherwise mute people. This focused review analyzes the potential of different brain imaging techniques to recognize speech from neural signals by applying Automatic Speech Recognition technology. We argue that modalities based on metabolic processes, such as functional Near Infrared Spectroscopy and functional Magnetic Resonance Imaging, are less suited for Automatic Speech Recognition from neural signals due to low temporal resolution but are very useful for the investigation of the underlying neural mechanisms involved in speech processes. In contrast, electrophysiologic activity is fast enough to capture speech processes and is therefore better suited for ASR. Our experimental results indicate the potential of these signals for speech recognition from neural data with a focus on invasively measured brain activity (electrocorticography). As a first example of Automatic Speech Recognition techniques used from neural signals, we discuss the Brain-to-text system.
