Summary: Researchers have developed an artificial synapse that mimics the analog way the human brain completes tasks.

Source: University of Pittsburgh.

Digital computation has rendered nearly all forms of analog computation obsolete since as far back as the 1950s. However, there is one major exception that rivals the computational power of the most advanced digital devices: the human brain.

The human brain is a dense network of neurons. Each neuron is connected to tens of thousands of others, and they use synapses to fire information back and forth constantly. With each exchange, the brain modulates these connections to create efficient pathways in direct response to the surrounding environment. Digital computers, by contrast, live in a world of ones and zeros. They perform tasks sequentially, following each step of their algorithms in a fixed order.

A team of researchers from Pitt’s Swanson School of Engineering has developed an “artificial synapse” that does not process information like a digital computer but rather mimics the analog way the human brain completes tasks. Led by Feng Xiong, assistant professor of electrical and computer engineering, the researchers published their results in Advanced Materials. His Pitt co-authors include Mohammad Sharbati (first author), Yanhao Du, Jorge Torres, Nolan Ardolino, and Minhee Yun.

“The analog nature and massive parallelism of the brain are partly why humans can outperform even the most powerful computers when it comes to higher order cognitive functions such as voice recognition or pattern recognition in complex and varied data sets,” explains Dr. Xiong.

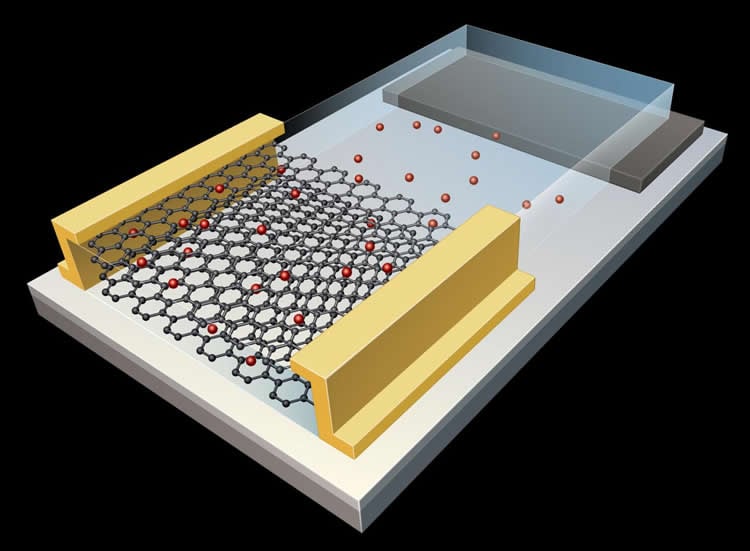

An emerging field called “neuromorphic computing” focuses on the design of computational hardware inspired by the human brain. Dr. Xiong and his team built artificial synapses from graphene, a two-dimensional honeycomb lattice of carbon atoms. Graphene’s conductive properties allowed the researchers to finely tune its electrical conductance, which serves as the strength of the synaptic connection, or synaptic weight. The graphene synapse demonstrated excellent energy efficiency, just like biological synapses.
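To make the idea of conductance as synaptic weight concrete, here is a minimal sketch, assuming an idealized circuit in which several synapses feed one shared output wire. Each synapse passes a current equal to its conductance times the input voltage (Ohm's law), and the wire adds those currents, so the output is a weighted sum of the inputs. The function names and all numeric values are hypothetical and chosen for illustration; they are not taken from the study.

```python
# Illustrative sketch only: names and values are hypothetical, not from the study.

def output_current(conductances_siemens, input_voltages):
    """Sum the currents through synapses that share one output wire.

    Each synapse passes I = G * V (Ohm's law); the shared wire adds the
    currents (Kirchhoff's current law), giving a weighted sum of the inputs.
    """
    if len(conductances_siemens) != len(input_voltages):
        raise ValueError("need exactly one voltage per synapse")
    return sum(g * v for g, v in zip(conductances_siemens, input_voltages))


def tune(conductance, step, g_min, g_max):
    """Nudge a synaptic weight up or down, clamped to an assumed device range."""
    return max(g_min, min(g_max, conductance + step))


weights = [1e-6, 2e-6, 4e-6]   # hypothetical conductances, in siemens
voltages = [0.5, 0.0, 0.25]    # hypothetical input voltages, in volts
current = output_current(weights, voltages)  # total output current, in amperes
```

In this picture, learning amounts to calling something like `tune` on individual conductances, while the weighted sum itself comes from the physics of the circuit rather than from a sequence of digital multiply-and-add steps.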

In the recent resurgence of artificial intelligence, computers can already replicate the brain in certain ways, but it takes about a dozen digital devices to mimic one analog synapse. The human brain has hundreds of trillions of synapses for transmitting information, so building a brain with digital devices is seemingly impossible, or at the very least, not scalable. Xiong Lab’s approach provides a possible route for the hardware implementation of large-scale artificial neural networks.

According to Dr. Xiong, artificial neural networks based on the current CMOS (complementary metal-oxide semiconductor) technology will always have limited functionality in terms of energy efficiency, scalability, and packing density. “It is really important we develop new device concepts for synaptic electronics that are analog in nature, energy-efficient, scalable, and suitable for large-scale integrations,” he says. “Our graphene synapse seems to check all the boxes on these requirements so far.”

With graphene’s inherent flexibility and excellent mechanical properties, these graphene-based neural networks can be employed in flexible and wearable electronics to enable computation at the “edge of the internet,” the places where computing devices such as sensors make contact with the physical world.

“By empowering even a rudimentary level of intelligence in wearable electronics and sensors, we can track our health with smart sensors, provide preventive care and timely diagnostics, monitor plants growth and identify possible pest issues, and regulate and optimize the manufacturing process–significantly improving the overall productivity and quality of life in our society,” Dr. Xiong says.

The development of an artificial brain that functions like the analog human brain still requires a number of breakthroughs. Researchers need to find the right configurations to optimize these new artificial synapses. They will need to make them compatible with an array of other devices to form neural networks, and they will need to ensure that all of the artificial synapses in a large-scale neural network behave in exactly the same manner. Despite the challenges, Dr. Xiong says he’s optimistic about the direction they’re headed.

“We are pretty excited about this progress since it can potentially lead to the energy-efficient, hardware implementation of neuromorphic computing, which is currently carried out in power-intensive GPU clusters. The low-power trait of our artificial synapse and its flexible nature make it a suitable candidate for any kind of A.I. device, which would revolutionize our lives, perhaps even more than the digital revolution we’ve seen over the past few decades,” Dr. Xiong says.

Source: Matt Cichowicz – University of Pittsburgh

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to Swanson School of Engineering.

Original Research: Abstract for “Low‐Power, Electrochemically Tunable Graphene Synapses for Neuromorphic Computing” by Mohammad Taghi Sharbati, Yanhao Du, Jorge Torres, Nolan D. Ardolino, Minhee Yun, and Feng Xiong in Advanced Materials. Published July 23, 2018.

doi:10.1002/adma.201802353

MLA: University of Pittsburgh. “If Only AI Had a Brain.” NeuroscienceNews. NeuroscienceNews, 23 July 2018. <https://neurosciencenews.com/synapse-ai-9602/>.

APA: University of Pittsburgh. (2018, July 23). If Only AI Had a Brain. NeuroscienceNews. Retrieved July 23, 2018 from https://neurosciencenews.com/synapse-ai-9602/

Chicago: University of Pittsburgh. “If Only AI Had a Brain.” https://neurosciencenews.com/synapse-ai-9602/ (accessed July 23, 2018).

Abstract

Low‐Power, Electrochemically Tunable Graphene Synapses for Neuromorphic Computing

Brain‐inspired neuromorphic computing has the potential to revolutionize the current computing paradigm with its massive parallelism and potentially low power consumption. However, the existing approaches of using digital complementary metal–oxide–semiconductor devices (with “0” and “1” states) to emulate gradual/analog behaviors in the neural network are energy intensive and unsustainable; furthermore, emerging memristor devices still face challenges such as nonlinearities and large write noise. Here, an electrochemical graphene synapse, where the electrical conductance of graphene is reversibly modulated by the concentration of Li ions between the layers of graphene, is presented. This fundamentally different mechanism achieves good energy efficiency (<500 fJ per switching event), analog tunability (>250 nonvolatile states), good endurance and retention performance, and a linear and symmetric resistance response. Essential neuronal functions such as excitatory and inhibitory synapses, long‐term potentiation and depression, and spike timing dependent plasticity with good repeatability are demonstrated. The scaling study suggests that this simple, two‐dimensional synapse is scalable in terms of switching energy and speed.
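The two headline figures in the abstract can be put in rough perspective with a short back-of-the-envelope sketch. It takes the reported bounds at face value (more than 250 states, under 500 fJ per switching event) and assumes, for illustration only, that the states are evenly spaced over a normalized weight range of 0 to 1. That spacing is motivated by the reported linear and symmetric response but is not stated in the abstract, and the function names are hypothetical.

```python
import math

# Bounds quoted in the abstract, used here as round illustrative figures.
N_STATES = 250                  # ">250 nonvolatile states"
ENERGY_PER_EVENT_J = 500e-15    # "<500 fJ per switching event"


def nearest_state(weight, w_min=0.0, w_max=1.0, n_states=N_STATES):
    """Map a continuous weight to the index of the closest available state.

    Assumes evenly spaced states over [w_min, w_max]; this spacing and the
    normalized range are illustrative assumptions, not device data.
    """
    clipped = max(w_min, min(w_max, weight))
    step = (w_max - w_min) / (n_states - 1)
    return round((clipped - w_min) / step)


def bits_equivalent(n_states=N_STATES):
    """Number of binary digits needed to label n_states distinct levels."""
    return math.log2(n_states)


def energy_upper_bound(n_events):
    """Upper bound, in joules, on the energy for n_events switching events."""
    return n_events * ENERGY_PER_EVENT_J
```

Under these assumptions, one such synapse holds a little under 8 bits' worth of distinguishable levels, and a million weight updates would cost at most about half a microjoule.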