Summary: Using HD-TACS brain stimulation, researchers influenced the integration of speech sounds by changing the balancing processes between the two brain hemispheres.

Source: Max Planck Institute

When we listen to speech sounds, the information that enters our left and right ear is not exactly the same. This may be because acoustic information reaches one ear before the other, or because the sound is perceived as louder by one of the ears. Information about speech sounds also reaches different parts of our brain, and the two hemispheres are specialised in processing different types of acoustic information. But how does the brain integrate auditory information from different areas?

To investigate this question, lead researcher Basil Preisig from the University of Zurich collaborated with an international team of scientists. In an earlier study, the team discovered that the brain integrates information about speech sounds by ‘balancing’ the rhythm of gamma waves across the hemispheres, a process called ‘oscillatory synchronisation’.

Preisig and his colleagues also found that they could influence the integration of speech sounds by changing the balancing process between the hemispheres. However, it was still unclear where in the brain this process occurred.

Did you hear ‘ga’ or ‘da’?

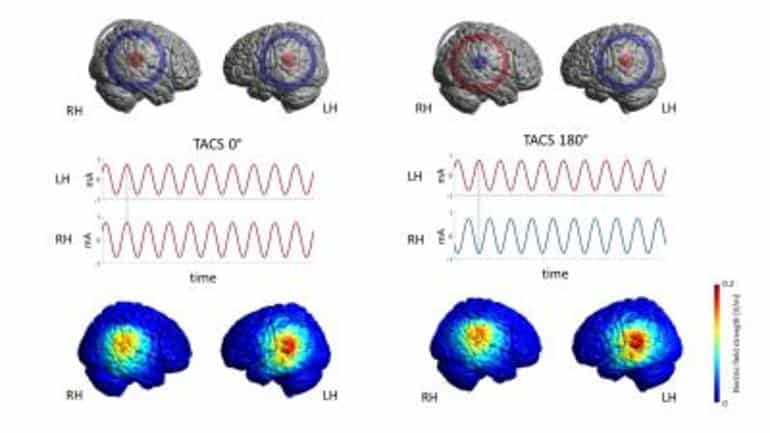

The researchers applied electric brain stimulation (high-density transcranial alternating current stimulation, or HD-TACS) to 28 healthy volunteers while their brains were being scanned with fMRI at the Donders Centre for Cognitive Neuroimaging in Nijmegen. They created a syllable that was somewhere in between ‘ga’ and ‘da’, and played this ambiguous syllable to the participants’ right ear. At the same time, the disambiguating information was played to the left ear. Participants were asked to indicate whether they heard ‘ga’ or ‘da’ by pressing a button. Would changing the connection between the two hemispheres also change the way the participants integrated information played to the left and right ear?

The scientists disrupted the ‘balance’ of gamma waves between the two hemispheres, which in turn affected what the participants reported hearing (‘ga’ or ‘da’).

Phantom perception

“This is the first demonstration in the auditory domain that interhemispheric connectivity is important for the integration of speech sound information”, says Preisig. “This work paves the way for investigating other sensory modalities and more complex auditory stimulation”.

“These results give us valuable insights into how the brain’s hemispheres are coordinated, and how we may use experimental techniques to manipulate this”, adds senior author Alexis Hervais-Adelman.

The findings, to be published in PNAS, may also have clinical implications. “We know that disturbances of interhemispheric connectivity occur in auditory ‘phantom’ perceptions, such as tinnitus and auditory verbal hallucinations”, Preisig explains. “Therefore, stimulating the two hemispheres with (HD-)TACS may offer therapeutic benefits. I will follow up on this research by applying TACS in patients with hearing loss and tinnitus, to improve our understanding of neural attention control and to enhance speech comprehension for this group.”

About this speech processing research news

Contact: Marjolein Scherphuis – Max Planck Institute

Image: The image is credited to Basil Preisig

Original Research: Closed access.

“Selective modulation of interhemispheric connectivity by transcranial alternating current stimulation influences binaural integration” by Basil Preisig et al. PNAS

Abstract

Brain connectivity plays a major role in the encoding, transfer, and integration of sensory information. Interregional synchronization of neural oscillations in the γ-frequency band has been suggested as a key mechanism underlying perceptual integration. In a recent study, we found evidence for this hypothesis showing that the modulation of interhemispheric oscillatory synchrony by means of bihemispheric high-density transcranial alternating current stimulation (HD-TACS) affects binaural integration of dichotic acoustic features. Here, we aimed to establish a direct link between oscillatory synchrony, effective brain connectivity, and binaural integration. We experimentally manipulated oscillatory synchrony (using bihemispheric γ-TACS with different interhemispheric phase lags) and assessed the effect on effective brain connectivity and binaural integration (as measured with functional MRI and a dichotic listening task, respectively). We found that TACS reduced intrahemispheric connectivity within the auditory cortices and antiphase (interhemispheric phase lag 180°) TACS modulated connectivity between the two auditory cortices. Importantly, the changes in intra- and interhemispheric connectivity induced by TACS were correlated with changes in perceptual integration. Our results indicate that γ-band synchronization between the two auditory cortices plays a functional role in binaural integration, supporting the proposed role of interregional oscillatory synchrony in perceptual integration.