Summary: The brain encodes the structure of sentences and phrases into different neural firing patterns.

Source: Max Planck Institute

The brain links incoming speech sounds to knowledge of grammar, which is abstract in nature. But how does the brain encode abstract sentence structure?

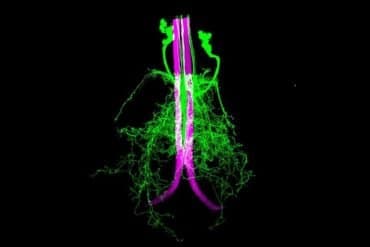

In a neuroimaging study published in PLOS Biology, researchers from the Max Planck Institute for Psycholinguistics and Radboud University in Nijmegen report that the brain encodes the structure of sentences (“the vase is red”) and phrases (“the red vase”) into different neural firing patterns.

How does the brain represent sentences? This is one of the fundamental questions in neuroscience, because sentences are an example of abstract structural knowledge that is not directly observable from speech.

While all sentences are made up of smaller building blocks, such as words and phrases, not all combinations of words or phrases lead to sentences. In fact, listeners need more than just knowledge of which words occur together: they need abstract knowledge of language structure to understand a sentence.

So how does the brain encode the structural relationships that make up a sentence?

Lise Meitner Group Leader Andrea Martin already had a theory on how the brain computes linguistic structure, based on evidence from computer simulations.

To further test this “time-based” model of the structure of language, which was developed together with Leonidas Doumas from the University of Edinburgh, Martin and colleagues used EEG (electroencephalography) to measure neural responses through the scalp.

In a collaboration with first author and Ph.D. candidate Fan Bai and MPI director Antje Meyer, she set out to investigate whether the brain responds differently to sentences and phrases, and whether this could hint at how the brain encodes abstract structure.

The researchers created sets of spoken Dutch phrases (such as “de rode vaas,” “the red vase”) and sentences (such as “de vaas is rood,” “the vase is red”), which were identical in duration and number of syllables, and highly similar in meaning. They also created pictures with objects (such as a vase) in five different colors.

Fifteen adult native speakers of Dutch participated in the experiment. For each spoken stimulus, they were asked to perform one of three tasks in random order.

The first task was structure-related: participants had to decide whether they had heard a phrase or a sentence by pushing a button. The second and third tasks were meaning-related: participants had to decide whether the color or object of the spoken stimulus matched the picture that followed.

As expected from computational simulations, the activation patterns of neurons in the brain were different for phrases and sentences, in terms of both timing and strength of neural connections.

“Our findings show how the brain separates speech into linguistic structure by using the timing and connectivity of neural firing patterns. These signals from the brain provide a novel basis for future research on how our brains create language,” says Martin.

“Additionally, the time-based mechanism could in principle be used for machine learning systems that interface with spoken language comprehension in order to represent abstract structure, something machine systems currently struggle with.

“We will conduct further studies on how knowledge of abstract structure and countable statistical information, like transitional probabilities between linguistic units, are used by the brain during spoken language comprehension.”

About this neuroscience research news

Author: Press Office

Contact: Press Office – Max Planck Institute

Image: The image is in the public domain

Original Research: Open access.

“Neural dynamics differentially encode phrases and sentences during spoken language comprehension” by Fan Bai et al. PLOS Biology

Abstract

Neural dynamics differentially encode phrases and sentences during spoken language comprehension

Human language stands out in the natural world as a biological signal that uses a structured system to combine the meanings of small linguistic units (e.g., words) into larger constituents (e.g., phrases and sentences). However, the physical dynamics of speech (or sign) do not stand in a one-to-one relationship with the meanings listeners perceive. Instead, listeners infer meaning based on their knowledge of the language.

The neural readouts of the perceptual and cognitive processes underlying these inferences are still poorly understood. In the present study, we used scalp electroencephalography (EEG) to compare the neural response to phrases (e.g., the red vase) and sentences (e.g., the vase is red), which were close in semantic meaning and had been synthesized to be physically indistinguishable.

Differences in structure were well captured in the reorganization of neural phase responses in delta (approximately <2 Hz) and theta bands (approximately 2 to 7 Hz), and in power and power connectivity changes in the alpha band (approximately 7.5 to 13.5 Hz). Consistent with predictions from a computational model, sentences showed more power, more power connectivity, and more phase synchronization than phrases did.

Theta–gamma phase–amplitude coupling occurred, but did not differ between the syntactic structures. Spectral–temporal response function (STRF) modeling revealed different encoding states for phrases and sentences, over and above the acoustically driven neural response.

Our findings provide a comprehensive description of how the brain encodes and separates linguistic structures in the dynamics of neural responses. They imply that phase synchronization and strength of connectivity are readouts for the constituent structure of language.

The results provide a novel basis for future neurophysiological research on linguistic structure representation in the brain, and, together with our simulations, support time-based binding as a mechanism of structure encoding in neural dynamics.
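
To make the idea of phase synchronization in the abstract more concrete, here is a minimal Python sketch of one common way to quantify it, the phase-locking value (PLV) between two signals within a frequency band. This is an illustration only, not the authors' analysis pipeline: the text above does not specify which synchronization measure the study used, and the function names (`band_analytic`, `phase_locking_value`), the synthetic sine-wave signals, the 100 Hz sampling rate, and the 0.5 to 2 Hz band edges are all assumptions chosen for the example.

```python
import numpy as np


def band_analytic(x, fs, lo, hi):
    """Band-limited analytic signal via FFT masking.

    Hypothetical helper: a simple stand-in for band-pass filtering
    followed by a Hilbert transform. Requires lo > 0 so that only
    positive frequencies are kept.
    """
    spectrum = np.fft.fft(x)
    freqs = np.fft.fftfreq(len(x), d=1.0 / fs)
    mask = (freqs >= lo) & (freqs <= hi)  # positive frequencies in the band
    return np.fft.ifft(2.0 * spectrum * mask)


def phase_locking_value(x, y, fs, lo, hi):
    """PLV: length of the mean unit vector of the phase difference.

    Values near 1 mean the phase difference stays constant over time;
    values near 0 mean the phases drift relative to each other.
    """
    phase_x = np.angle(band_analytic(x, fs, lo, hi))
    phase_y = np.angle(band_analytic(y, fs, lo, hi))
    return float(np.abs(np.mean(np.exp(1j * (phase_x - phase_y)))))


# Synthetic example (assumed values, not data from the study).
fs = 100.0                       # sampling rate in Hz
t = np.arange(0, 10, 1.0 / fs)   # 10 seconds of samples

# Two 1.5 Hz signals with a fixed phase offset: synchronized.
a = np.sin(2 * np.pi * 1.5 * t)
b = np.sin(2 * np.pi * 1.5 * t + 0.8)

# A 1.0 Hz signal against the 1.5 Hz signal: phases drift apart.
c = np.sin(2 * np.pi * 1.0 * t)

plv_locked = phase_locking_value(a, b, fs, lo=0.5, hi=2.0)
plv_drifting = phase_locking_value(c, a, fs, lo=0.5, hi=2.0)

print(f"PLV, fixed phase offset: {plv_locked:.3f}")
print(f"PLV, drifting phases:    {plv_drifting:.3f}")
```

In this toy setup the pair with a fixed phase offset yields a PLV close to 1 and the drifting pair a PLV close to 0. Real EEG analyses would additionally need artifact handling, proper filtering, and statistics across trials and participants, none of which is shown here.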