Kyoto University finds regions in the brain that may lead to new communication tools.

Researchers can now reconstruct what we see in our minds when we navigate — and explain how we get directions wrong.

The brain helps us navigate by continually generating, rationalizing, and analyzing great amounts of information. For example, this innate GPS-like function helps us find our way in cities, follow directions to a specific destination, or go to a particular restaurant to satisfy a craving.

“When people try to get from one place to another, they ‘foresee’ the upcoming landscape in their minds,” said study author Yumi Shikauchi. “We wanted to decode prior belief in the brain, because it’s so crucial for spatial navigation.”

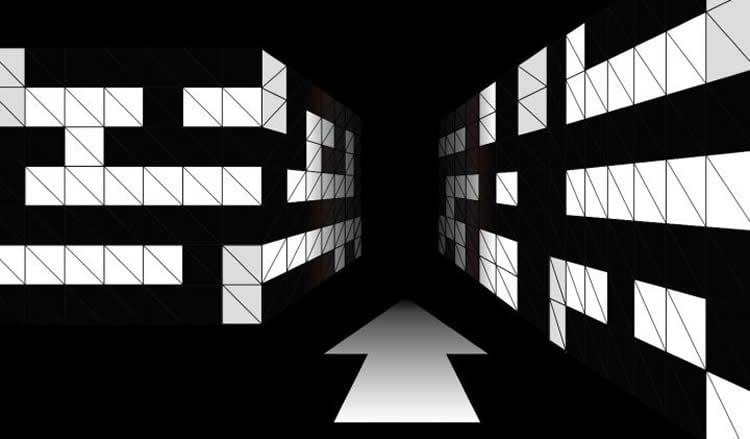

Using virtual three-dimensional mazes together with functional magnetic resonance imaging (fMRI), the researchers investigated whether a person’s preconceptions could be represented in brain activity.

Participants were led through each maze, memorizing a sequence of scenes by receiving directions for each move. Then, while being imaged using fMRI, they were asked to navigate through the maze by choosing the upcoming scene from two options. In contrast to methods in previous studies, the researchers focused on the underpinnings of expectation and prediction, crucial cognitive processes in everyday decision making.

Twelve decoders deciphered brain activity from fMRI scans by associating signals with output variables. The researchers were ultimately able to reconstruct which scene the participants pictured in their minds as they progressed through the maze.
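For readers curious what "associating signals with output variables" can look like in practice, here is a minimal sketch on synthetic data. It is not the study's method: the ridge-regularized linear decoders, the made-up "voxel" signals, and every name in the code (`make_synthetic_data`, `fit_decoders`, `predict`) are assumptions chosen purely for illustration. Twelve decoders are trained in parallel, one per binary output variable, and then scored on held-out trials.

```python
import numpy as np

def make_synthetic_data(n_trials=200, n_voxels=50, n_outputs=12, noise=1.0, seed=0):
    """Generate fake 'voxel' signals driven by 12 binary output variables (illustrative only)."""
    rng = np.random.default_rng(seed)
    labels = rng.integers(0, 2, size=(n_trials, n_outputs))   # 0/1 per output variable
    patterns = rng.normal(size=(n_outputs, n_voxels))          # hypothetical activity pattern per variable
    signals = (2 * labels - 1) @ patterns + noise * rng.normal(size=(n_trials, n_voxels))
    return signals, labels

def fit_decoders(signals, labels, ridge=1.0):
    """Fit one ridge-regularized linear decoder per output variable, all solved at once."""
    X = np.hstack([signals, np.ones((signals.shape[0], 1))])   # append a bias column
    targets = 2.0 * labels - 1.0                                # map 0/1 to -1/+1
    gram = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(gram, X.T @ targets)                 # shape: (n_voxels + 1, n_outputs)

def predict(weights, signals):
    """Threshold each decoder's linear output at zero to get a 0/1 prediction."""
    X = np.hstack([signals, np.ones((signals.shape[0], 1))])
    return (X @ weights > 0).astype(int)

signals, labels = make_synthetic_data()
train, test = slice(0, 150), slice(150, 200)                    # simple hold-out split
weights = fit_decoders(signals[train], labels[train])
accuracy = (predict(weights, signals[test]) == labels[test]).mean(axis=0)
print(accuracy.round(2))                                        # one held-out accuracy per decoder
```

Each column of `weights` acts as an independent decoder, so the twelve outputs can be read out side by side from the same signals. Real fMRI decoding involves preprocessing, voxel selection, and cross-validation steps that this sketch leaves out.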

They also discovered that the human sense of objectivity may sometimes be overpowered by preconception, which includes biases arising from external cues and prior knowledge.

“We found that the activity patterns in the parietal regions reflect participants’ expectations even when they are wrong, demonstrating that subjective belief can override objective reality,” said senior author Shin Ishii.

Shikauchi and Ishii hope that this research will contribute to the development of new communication tools that make use of brain activity.

“There are a lot of things that can’t be communicated just by words and language. As we were able to decipher virtual expectations both right and wrong, this could contribute to the development of a new type of tool that allows people to communicate non-linguistic information,” said Ishii. “We now need to be able to decipher scenes that are more complicated than simple mazes.”

Source: Anna Ikarashi – Kyoto University

Image Credit: The image is credited to Kyoto University

Original Research: Full open access research for “Decoding the view expectation during learned maze navigation from human fronto-parietal network” by Yumi Shikauchi and Shin Ishii in Scientific Reports. Published online December 3 2015 doi:10.1038/srep17648

Abstract

Decoding the view expectation during learned maze navigation from human fronto-parietal network

Humans use external cues and prior knowledge about the environment to monitor their positions during spatial navigation. View expectation is essential for correlating scene views with a cognitive map. To determine how the brain performs view expectation during spatial navigation, we applied a multiple parallel decoding technique to functional magnetic resonance imaging (fMRI) when human participants performed scene choice tasks in learned maze navigation environments. We decoded participants’ view expectation from fMRI signals in parietal and medial prefrontal cortices, whereas activity patterns in occipital cortex represented various types of external cues. The decoder’s output reflected participants’ expectations even when they were wrong, corresponding to subjective beliefs opposed to objective reality. Thus, view expectation is subjectively represented in human brain, and the fronto-parietal network is involved in integrating external cues and prior knowledge during spatial navigation.
