You’re hustling across Huntington Avenue, eyes on the Marino Center, as the “walk” sign ticks down seconds: Seven, six, five…. Suddenly bike brakes screech to your right. Yikes! Why didn’t you see that cyclist coming with your peripheral vision?

Researchers in the lab of Northeastern psychology professor Peter J. Bex may now have the answer, one that may lead to relief for those with age-related macular degeneration, or AMD, an eye disorder that destroys central vision: the sharp, straight-ahead vision that enables us to read, drive, and decipher faces. AMD could affect close to 3 million people by 2020, according to the Centers for Disease Control.

The problem with peripheral vision, which people with AMD particularly rely on as their central vision fails, is that it’s notoriously poor because it’s subject to “crowding,” or interference from surrounding “visual clutter,” says William J. Harrison, coauthor of the study and a former Northeastern postdoctoral fellow. “You know something’s there, but you can’t identify what it is.”

Bex and Harrison’s breakthrough paper, published last month in the journal Current Biology, uses computational modeling of subjects’ perceptions of images to reveal why that visual befuddlement occurs, including the brain mechanism driving it. That knowledge could pave the way for treatments to circumvent crowding.

An evolutionary compromise

Our vision operates on a gradient: It has high resolution in the center and progressively coarser resolution in the periphery. The diminishment is an evolutionary necessity. “If we were to have the same resolution that we have in the center of our vision across our whole visual field, we’d need a brain and an optic nerve that were at least 10 times larger than they currently are,” says Bex, who specializes in basic and clinical visual science.

It’s that compromise that makes us vulnerable to crowding. “It’s not the physical properties of the eye, its shape, the number of photoreceptors, that determine what we see,” says Harrison, who’s currently a postdoctoral fellow in psychology at the University of Cambridge. “It’s the wiring in the brain.”

Crowding, simply put, exemplifies the limited bandwidth of our visual processing system.

A new paradigm

Previous attempts to understand crowding spring from that insight. One hypothesis holds that those limited resources lead our brains to “average” the symbols in our peripheral vision, in effect adding the distractions to the target image and dividing the whole by the number of parts to produce an unidentifiable object. Another hypothesis suggests that each part is represented accurately in the brain’s vision center, in the occipital lobe, but that other regions of the brain, such as the frontal lobe, which is largely responsible for visual attention, don’t have the power to select the target image from among the others.
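The averaging hypothesis can be made concrete with a small sketch. It assumes, purely for illustration, that each item is summarized by a single direction in degrees and that the brain reports the circular mean of the target and its distractors. The function name and the example angles are hypothetical and do not come from the study.

```python
import math

def circular_mean_deg(angles_deg):
    """Mean direction of a set of angles given in degrees on a 0-360 scale."""
    # Sum the unit vectors for each angle, then read off the direction of the sum.
    s = sum(math.sin(math.radians(a)) for a in angles_deg)
    c = sum(math.cos(math.radians(a)) for a in angles_deg)
    return math.degrees(math.atan2(s, c)) % 360.0

# Hypothetical display: one target flanked by two distractors.
target = 90.0
distractors = [60.0, 30.0]

# Under a pure averaging account, the reported direction is pulled
# away from the target and toward the distractors.
predicted_report = circular_mean_deg([target] + distractors)
print(round(predicted_report, 1))
```

A circular mean is used rather than an ordinary arithmetic mean because directions wrap around: 350 degrees and 10 degrees are close neighbors, not opposites.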

Further blurring the picture, the prevailing hypotheses that explain crowding conflict with one another, requiring qualifiers for special cases.

But now Bex and Harrison, using an innovative experimental design, have reconciled those differences in a single computational model based on how cells in the brain’s visual center represent what we see. “Our model integrates a combination of hypotheses and enables us to find evidence for any account of crowding,” says Harrison.

Decoding the images

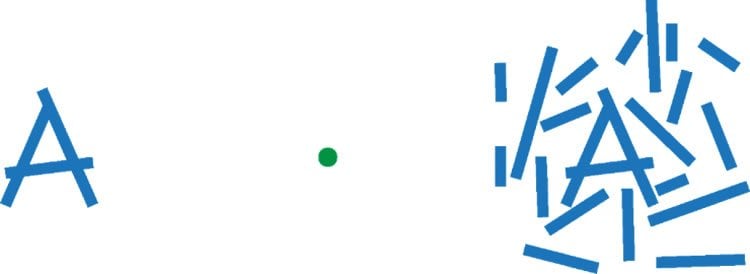

Most vision tests for crowding ask people to decipher a single letter of the alphabet, say an “A,” surrounded by lines at various angles and distances, while focusing on a dot with their central vision. Hence they elicit either a right or a wrong answer (“Er, maybe it’s an ‘N’?”).

In Bex and Harrison’s study, however, participants viewed the image of a broken ring, similar to the letter “C,” and noted where in the image the opening appeared. The measure produced “an index of continuous perceptions,” says Bex, thus permitting the researchers not only to know if crowding was occurring but also to create a computer simulation of how the cells in the brain actually decoded the image.
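To see why a continuous report is more informative than a right-or-wrong answer, consider the sketch below. It assumes the position of the ring's opening is recorded as an angle in degrees and scores each response by its signed angular error. The function names, the tolerance, and the trial values are illustrative assumptions, not data from the study.

```python
def signed_error_deg(reported, actual):
    """Signed angular difference in degrees, wrapped into [-180, 180)."""
    return (reported - actual + 180.0) % 360.0 - 180.0

def is_correct(reported, actual, tolerance=15.0):
    """Binary scoring: only says whether the report was close enough."""
    return abs(signed_error_deg(reported, actual)) <= tolerance

# Hypothetical trials: (reported gap position, actual gap position).
trials = [(350.0, 10.0), (10.0, 350.0), (95.0, 90.0)]

for reported, actual in trials:
    # The signed error keeps both the size and the direction of the mistake;
    # the binary score discards that information.
    print(signed_error_deg(reported, actual), is_correct(reported, actual))
```

The first two trials would both count simply as "wrong" under binary scoring, while the signed errors show that the responses missed by the same amount in opposite directions.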

“Knowing how crowding works, when it happens, and when it doesn’t, means we can start to modify the way information is presented to reduce the crowding,” says Bex. “And reducing it is what we need to do to help people with AMD.”

Source: Thea Singer – Northeastern University

Image Source: The image is credited to William Harrison

Original Research: Abstract for “A Unifying Model of Orientation Crowding in Peripheral Vision” by William J. Harrison and Peter J. Bex in Current Biology. Published online November 25, 2015. doi:10.1016/j.cub.2015.10.052

Abstract

A Unifying Model of Orientation Crowding in Peripheral Vision

Highlights

• An object surrounded by distractors is unrecognizable in peripheral vision

• We provide a novel method to systematically study such “crowded” perception

• A simple population code model produces the diverse errors made by human observers

• A single mechanism may thus suffice to explain multiple classes of crowding

Summary

Peripheral vision is fundamentally limited not by the visibility of features, but by the spacing between them [1]. When too close together, visual features can become “crowded” and perceptually indistinguishable. Crowding interferes with basic tasks such as letter and face identification and thus informs our understanding of object recognition breakdown in peripheral vision [2]. Multiple proposals have attempted to explain crowding [3], and each is supported by compelling psychophysical and neuroimaging data [4–6] that are incompatible with competing proposals. In general, perceptual failures have variously been attributed to the averaging of nearby visual signals [7–10], confusion between target and distractor elements [11, 12], and a limited resolution of visual spatial attention [13]. Here we introduce a psychophysical paradigm that allows systematic study of crowded perception within the orientation domain, and we present a unifying computational model of crowding phenomena that reconciles conflicting explanations. Our results show that our single measure produces a variety of perceptual errors that are reported across the crowding literature. Critically, a simple model of the responses of populations of orientation-selective visual neurons accurately predicts all perceptual errors. We thus provide a unifying mechanistic explanation for orientation crowding in peripheral vision. Our simple model accounts for several perceptual phenomena produced by crowding of orientation and raises the possibility that multiple classes of object recognition failures in peripheral vision can be accounted for by a single mechanism.
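For readers curious what a population code might look like in practice, here is a minimal sketch. It is not the authors' model: the bell-shaped (von Mises) tuning curve, the number of units, the `kappa` width parameter, the distractor weight, and the population-vector readout are all assumptions chosen for illustration.

```python
import math

def tuning(pref_deg, stim_deg, kappa=4.0):
    """Response of one unit that prefers pref_deg to a stimulus at stim_deg."""
    # Peaks at 1.0 when the stimulus matches the preferred direction.
    return math.exp(kappa * (math.cos(math.radians(stim_deg - pref_deg)) - 1.0))

def population_response(stimuli, n_units=36, kappa=4.0):
    """stimuli is a list of (direction_deg, weight) pairs.

    Returns the preferred directions and the summed response of each unit.
    """
    prefs = [i * 360.0 / n_units for i in range(n_units)]
    resp = [sum(w * tuning(p, s, kappa) for s, w in stimuli) for p in prefs]
    return prefs, resp

def decode(prefs, resp):
    """Population-vector readout: direction of the response-weighted sum."""
    x = sum(r * math.cos(math.radians(p)) for p, r in zip(prefs, resp))
    y = sum(r * math.sin(math.radians(p)) for p, r in zip(prefs, resp))
    return math.degrees(math.atan2(y, x)) % 360.0

# Target alone: the readout recovers the target direction.
alone = decode(*population_response([(90.0, 1.0)]))

# Target plus a weaker distractor signal pooled into the same population:
# the readout is pulled part of the way toward the distractor.
crowded = decode(*population_response([(90.0, 1.0), (30.0, 0.5)]))

print(round(alone, 1), round(crowded, 1))
```

In this toy version, changing the distractor weight shifts the readout anywhere between the target and the midpoint of the two directions, which hints at how one mechanism could yield different kinds of errors.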
