Imagine the brain’s delight when experiencing the sounds of Beethoven’s “Moonlight Sonata” while simultaneously taking in a light show produced by a visualizer.

A new Northwestern University study did much more than that.

To understand how the brain responds to highly complex auditory-visual stimuli like music and moving images, the study tracked parts of the auditory system involved in the perceptual processing of “Moonlight Sonata” while it was synchronized with the light show made by the iTunes Jelly visualizer.

The study shows how and when the auditory system encodes auditory-visual synchrony between complex and changing sounds and images.

Much of the related research looks at how the brain processes simple sounds and images. Locating a woodpecker in a tree, for example, is made easier when your brain combines the auditory (pecking) and visual (movement of the bird) streams and judges that they are synchronous. If they are, the brain decides that the two sensory inputs probably came from a single source.

While that research is important, Julia Mossbridge, lead author of the study and research associate in psychology at Northwestern, said it also is critical to expand investigations to highly complex stimuli like music and movies.

“These kinds of things are closer to what the brain actually has to manage to process in every moment of the day,” she said. “Further, it’s important to determine how and when sensory systems choose to combine stimuli across their boundaries.

“If someone’s brain is mis-wired, sensory information could combine when it’s not appropriate,” she said. “For example, when that person is listening to a teacher talk while looking out a window at kids playing, and the auditory and visual streams are integrated instead of separated, this could result in confusion and misunderstanding about which sensory inputs go with what experience.”

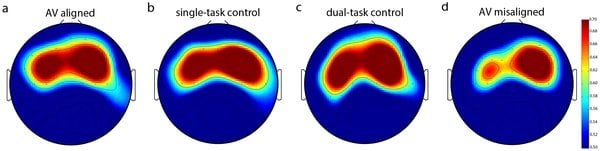

It was already known that the left auditory cortex is specialized to process sounds with precise, complex and rapid timing; this gift for auditory timing may be one reason that in most people, the left auditory cortex is used to process speech, for which timing is critical. The results of this study show that this specialization for timing applies not just to sounds alone, but also to the relative timing of complex and dynamic sounds and images.

Previous research indicates that there are multi-sensory areas in the brain that link sounds and images when they change in similar ways, but much of this research is focused particularly on speech signals (e.g., lips moving as vowels and consonants are heard). Consequently, it hasn’t been clear what areas of the brain process more general auditory-visual synchrony or how this processing differs when sounds and images should not be combined.

“It appears that the brain is exploiting the left auditory cortex’s gift at processing auditory timing, and is using similar mechanisms to encode auditory-visual synchrony, but only in certain situations; seemingly only when combining the sounds and images is appropriate,” Mossbridge said.

In addition to Mossbridge, co-authors include Marcia Grabowecky and Satoru Suzuki of Northwestern. The article “Seeing the song: Left auditory structures may track auditory-visual dynamic alignment” will appear Oct. 23 in PLOS ONE.

Notes about this neuroscience research

Written by Hilary Hurd Anyaso

Contact: Hilary Hurd Anyaso – Northwestern University

Source: Northwestern University press release

Image Source: The image is credited to Julia A. Mossbridge, Marcia Grabowecky, and Satoru Suzuki/PLOS ONE, and is adapted from the PLOS ONE research paper.

Original Research: Full open access research for “Seeing the Song: Left Auditory Structures May Track Auditory-Visual Dynamic Alignment” by Julia A. Mossbridge, Marcia Grabowecky, and Satoru Suzuki in PLOS ONE. Published online October 23, 2013. doi:10.1371/journal.pone.0077201
