The brain uses similar computations to calculate the direction and speed of objects in motion whether they are perceived visually or through the sense of touch. The notion that the brain uses shared calculations to interpret information from fundamentally different physical inputs has important implications for both basic and applied neuroscience, and suggests a powerful organizing principle for sensory perception.

In an essay published September 29, 2015, in PLOS Biology, Sliman Bensmaia, PhD, Associate Professor in the Department of Organismal Biology and Anatomy at the University of Chicago, and Christopher Pack, PhD, Associate Professor in the Department of Neurology & Neurosurgery at McGill University, assert that such canonical computations can be used as a starting point for a more complete understanding of various regions of the brain and their functions. The essay synthesizes Bensmaia’s research on the sense of touch at UChicago and Pack’s study of vision at McGill.

“Sight is obviously different physically from touch, but in both cases the nervous system has to make sense of information that is changing in space and time,” Pack said. “There are many ways to do this, but evolution is conservative and so the brain may prefer to reuse strategies that work particularly well.”

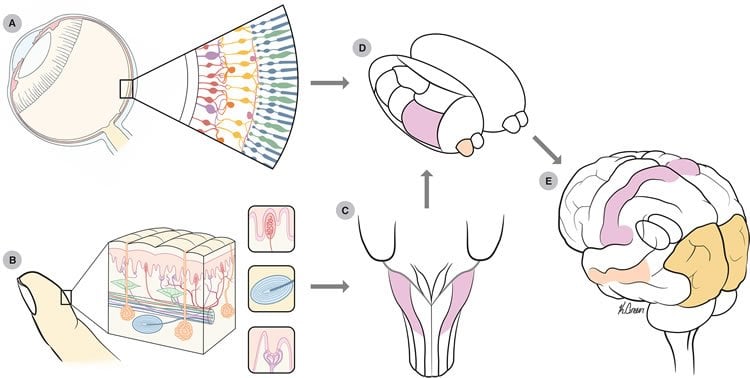

In both vision and touch, the brain perceives objects in motion as they move across a sheet of sensory receptors. For touch, this is the set of receptors laid out in a grid across the skin; in vision, these receptors are in the retina. As we run our fingertip across a surface, nearby receptors are excited sequentially. Likewise, when we gaze upon a moving object, nearby photoreceptors are excited sequentially.
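As a rough illustration of how sequential activation across a sensory sheet carries motion information, the sketch below recovers a signed velocity from the times at which a row of receptors fires. The receptor spacing, the timing values, and the function name `estimate_velocity` are all assumptions made for this example; it is a toy least-squares fit, not the computation the essay attributes to the brain.

```python
def estimate_velocity(positions_mm, times_s):
    """Least-squares slope of receptor position against activation time.

    Returns a signed velocity in mm/s: positive if activation sweeps
    toward larger positions, negative if it sweeps the other way.
    """
    n = len(positions_mm)
    mean_t = sum(times_s) / n
    mean_x = sum(positions_mm) / n
    # Covariance of time and position, and variance of time.
    cov = sum((t - mean_t) * (x - mean_x)
              for t, x in zip(times_s, positions_mm))
    var = sum((t - mean_t) ** 2 for t in times_s)
    return cov / var


# Hypothetical row of four receptors spaced 1 mm apart,
# each firing 50 ms after its neighbor.
positions = [0.0, 1.0, 2.0, 3.0]
times = [0.00, 0.05, 0.10, 0.15]

velocity = estimate_velocity(positions, times)
print(f"estimated velocity: {velocity:.1f} mm/s")
```

The same function applies whether the row of sensors is taken to be photoreceptors or skin receptors, which is the sense in which the input is a shared spatiotemporal pattern.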

The nervous system passes this information about vision and touch to the primary visual cortex and somatosensory cortex of the brain, respectively, where this sensory information is processed. Neurons in both these regions of the brain respond to a small part of the stimulus, e.g. a tiny section of a rough surface, not the entire object. In addition, these neurons are tuned for direction; they respond best to movement in only one direction. For example, some neurons respond to objects moving to the left, others respond to objects moving right.

To interpret the direction and velocity of a moving object, both of these primary sensory cortices send this information to areas of the brain specialized for motion processing, namely the middle-temporal area for vision and Brodmann’s area 1 for touch. Both of these areas integrate signals from individual neurons, each of which has a very limited view of the object in question and a preference for one direction of movement.
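One simple way to picture this integration step is a population-vector readout, in which each direction-tuned neuron votes for its preferred direction in proportion to its response. The sketch below assumes eight hypothetical neurons with rectified cosine tuning; the tuning shape, the neuron count, and the function names are illustrative assumptions, and this generic decoding scheme is not necessarily the computation described in the essay.

```python
import math


def tuned_response(preferred_deg, stimulus_deg):
    """Rectified cosine tuning: strongest response at the preferred
    direction, zero for directions more than 90 degrees away."""
    diff = math.radians(stimulus_deg - preferred_deg)
    return max(0.0, math.cos(diff))


def decode_direction(preferred_degs, responses):
    """Population-vector readout: sum unit vectors along each neuron's
    preferred direction, weighted by that neuron's response."""
    x = sum(r * math.cos(math.radians(p))
            for p, r in zip(preferred_degs, responses))
    y = sum(r * math.sin(math.radians(p))
            for p, r in zip(preferred_degs, responses))
    return math.degrees(math.atan2(y, x)) % 360.0


# Hypothetical population: eight neurons with preferred directions
# spaced 45 degrees apart.
preferred = [0, 45, 90, 135, 180, 225, 270, 315]

# A stimulus moving at 30 degrees matches no single neuron's preference.
responses = [tuned_response(p, 30.0) for p in preferred]
decoded = decode_direction(preferred, responses)
print(f"decoded direction: {decoded:.1f} degrees")
```

No single neuron in this toy population prefers 30 degrees, yet the pooled readout recovers it, which illustrates why combining many narrowly tuned signals can yield an estimate that none of them provides alone.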

“They’re integrating information from the first set of neurons that can’t see the forest for the trees, to be able to see the forest and forget about the individual trees,” Bensmaia said.

In experiments using so-called plaid stimuli, crisscrossing diagonal patterns presented either visually or etched onto a plate for tactile feedback, Bensmaia and Pack tracked the neuronal responses of human subjects and primates. Both researchers showed that similar computations could be used to represent how subjects interpreted movement of objects through space and time.

In the new paper, they suggest that these two senses developed a common representation of motion because they coexist in a world in which, in many cases, we perceive objects through both vision and touch at the same time. When a cup you are holding slips from your grasp, for example, it is far more efficient to use a shared language to represent the movement you see as it falls, and feel as it slips through your fingers, than two separate ones.

Further understanding of this canonical language, Bensmaia said, gives scientists a foundation for understanding how the brain perceives the world as a whole, through multiple senses.

“The brain contains one hundred billion neurons, each different from the others,” he said. “What we try to do as neuroscientists is find organizing principles, ways to make sense of this complexity, and the idea of canonical computations for motion is a very appealing organizing principle.”

Funding: The article, “Seeing and Feeling Motion: Canonical Computations in Vision and Touch,” was supported by the Canadian Institutes of Health Research and the National Science Foundation.

Source: Matthew Wood – University of Chicago Medical Center

Image Source: The image is credited to Kenzie Green/PLOS Biology.

Video Source: The video is credited to Sliman Bensmaia and Christopher Pack

Original Research: Full open access research for “Seeing and Feeling Motion: Canonical Computations in Vision and Touch” by Christopher C. Pack and Sliman J. Bensmaia in PLOS Biology. Published online September 29 2015 doi:10.1371/journal.pbio.1002271

Abstract

Seeing and Feeling Motion: Canonical Computations in Vision and Touch

While the different sensory modalities are sensitive to different stimulus energies, they are often charged with extracting analogous information about the environment. Neural systems may thus have evolved to implement similar algorithms across modalities to extract behaviorally relevant stimulus information, leading to the notion of a canonical computation. In both vision and touch, information about motion is extracted from a spatiotemporal pattern of activation across a sensory sheet (in the retina and in the skin, respectively), a process that has been extensively studied in both modalities. In this essay, we examine the processing of motion information as it ascends the primate visual and somatosensory neuraxes and conclude that similar computations are implemented in the two sensory systems.
