Summary: Lip reading causes brain activity to synchronize with sound waves, even when there is no audible sound.

Source: SfN

Lip-reading causes brain activity to synchronize with sound waves even when no sound is audible, according to new research published in the Journal of Neuroscience.

When we listen to speech, activity in our auditory cortex synchronizes with the rhythm of the incoming sound waves. Lip-reading helps us comprehend speech that would otherwise be unintelligible, but we still don’t know how it helps the brain process sound.

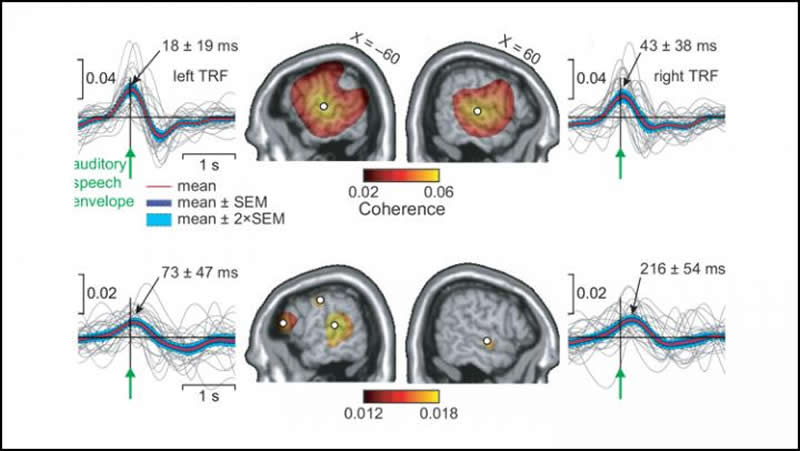

Bourguignon et al. used magnetoencephalography to measure brain activity in healthy adults while they listened to a story or watched a silent video of a woman speaking. The participants’ auditory cortices synchronized with the sound waves produced by the woman in the video, even though the participants could not hear them.

The synchronization resembled that seen in those who actually listened to the story, indicating that the brain can glean auditory information from the visual information available through lip-reading.

The researchers suggest this ability arises from activity in the visual cortex synchronizing with lip movement.

This signal is sent to other brain areas that translate the movement information into sound information, creating the sound wave synchronization.

Media Contacts:

Calli McMurray – SfN

Image Source:

The image is credited to Bourguignon et al., JNeurosci 2019.

Original Research: Closed access

“Lip-Reading Enables the Brain to Synthesize Auditory Features of Unknown Silent Speech”. Mathieu Bourguignon, Martijn Baart, Efthymia C. Kapnoula and Nicola Molinaro.

Journal of Neuroscience doi:10.1523/JNEUROSCI.1101-19.2019.

Abstract

Lip-reading is crucial for understanding speech in challenging conditions. But how the brain extracts meaning from (silent) visual speech is still under debate. Lip-reading in silence activates the auditory cortices, but it is not known whether such activation reflects immediate synthesis of the corresponding auditory stimulus or imagery of unrelated sounds.

To disentangle these possibilities, we used magnetoencephalography to evaluate how cortical activity in 28 healthy adult humans (17 females) entrained to the auditory speech envelope and lip movements (mouth opening) when listening to a spoken story without visual input (audio-only), and when seeing a silent video of a speaker articulating another story (video-only).

In video-only, auditory cortical activity entrained to the absent auditory signal at frequencies below 1 Hz more than to the seen lip movements. This entrainment process was characterized by an auditory-speech-to-brain delay of ∼70 ms in the left hemisphere, compared to ∼20 ms in audio-only. Entrainment to mouth opening was found in the right angular gyrus at below 1 Hz, and in early visual cortices at 1–8 Hz.

These findings demonstrate that the brain can use a silent lip-read signal to synthesize a coarse-grained auditory speech representation in early auditory cortices. Our data indicate the following underlying oscillatory mechanism: Seeing lip movements first modulates neuronal activity in early visual cortices at frequencies that match articulatory lip movements; the right angular gyrus then extracts slower features of lip movements, mapping them onto the corresponding speech sound features; this information is fed to auditory cortices, most likely facilitating speech parsing.

SIGNIFICANCE STATEMENT

Lip-reading consists in decoding speech based on visual information derived from observation of a speaker’s articulatory facial gestures. Lip-reading is known to improve auditory speech understanding, especially when speech is degraded. Interestingly, lip-reading in silence still activates the auditory cortices, even when participants do not know what the absent auditory signal should be. However, it was uncertain what such activation reflected. Here, using magnetoencephalographic recordings, we demonstrate it reflects fast synthesis of the auditory stimulus rather than mental imagery of unrelated (speech or non-speech) sounds. Our results also shed light on the oscillatory dynamics underlying lip-reading.