Summary: An EEG study reveals that imagining a song produces brain activity similar to that seen during moments of silence in music.

Source: SfN

Imagining a song triggers brain activity similar to that seen during moments of silence in music, according to a pair of studies recently published in the Journal of Neuroscience.

The results reveal how the brain continues responding to music, even when none is playing.

When we listen to music, the brain attempts to predict what comes next. A surprise, such as a loud note or disharmonious chord, increases brain activity. Yet it is difficult to isolate the brain’s prediction signal because the brain also responds to the actual sensory experience.

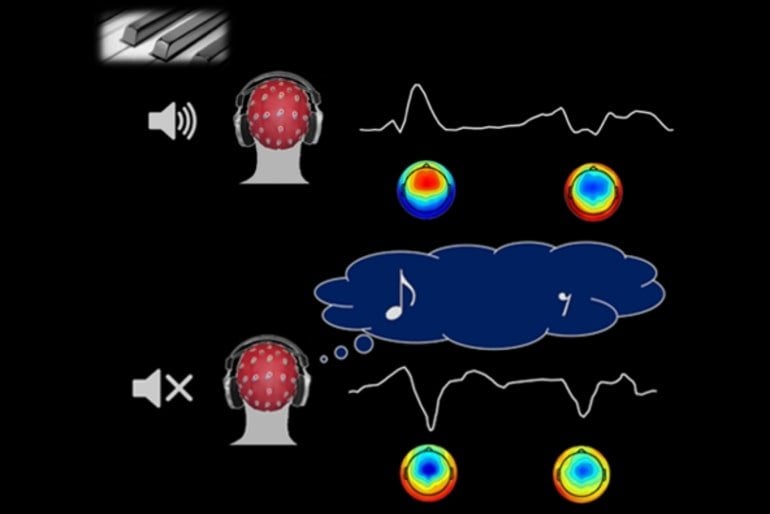

Di Liberto, Marion, and Shamma used EEG to measure the brain activity of musicians while they listened to or imagined Bach piano melodies. Activity while imagining music had the opposite polarity of activity while listening to music, meaning when one was positive, the other was negative.

The same type of activity occurred in silent moments of the songs when statistically there could have been a note, but there wasn’t. There is no sensory input during silence or imagined music, so this activity comes from the brain’s predictions.

The research team also decoded the brain activity to determine which song someone was imagining.

The findings suggest that music is more than a sensory experience for the brain: the brain keeps making predictions even when no music is playing.

About this auditory neuroscience research news

Contact: Calli McMurray – SfN

Image: The image is credited to Di Liberto, Marion, and Shamma, JNeurosci 2021

Original Research: Closed access.

“The Music of Silence. Part I: Responses to Musical Imagery Encode Melodic Expectations and Acoustics” by Guilhem Marion, Giovanni M. Di Liberto and Shihab A. Shamma. Journal of Neuroscience

Abstract

The Music of Silence. Part I: Responses to Musical Imagery Encode Melodic Expectations and Acoustics

Musical imagery is the voluntary internal hearing of music in the mind without the need for physical action or external stimulation. Numerous studies have already revealed brain areas activated during imagery.

However, it remains unclear to what extent imagined music responses preserve the detailed temporal dynamics of the acoustic stimulus envelope and, crucially, whether melodic expectations play any role in modulating responses to imagined music, as they prominently do during listening. These modulations are important as they reflect aspects of the human musical experience, such as its acquisition, engagement, and enjoyment.

This study explored the nature of these modulations in imagined music based on EEG recordings from 21 professional musicians (6 females and 15 males). Regression analyses were conducted to demonstrate that imagined neural signals can be predicted accurately, similarly to the listening task, and were sufficiently robust to allow for accurate identification of the imagined musical piece from the EEG.

In doing so, our results indicate that imagery and listening tasks elicited an overlapping but distinctive topography of neural responses to sound acoustics, which is in line with previous fMRI literature. Melodic expectation, however, evoked very similar frontal spatial activation in both conditions, suggesting that they are supported by the same underlying mechanisms. Finally, neural responses induced by imagery exhibited a specific transformation from the listening condition, which primarily included a relative delay and a polarity inversion of the response.

This transformation demonstrates the top-down predictive nature of the expectation mechanisms arising during both listening and imagery.

SIGNIFICANCE STATEMENT

It is well known that the human brain is activated during musical imagery – the act of voluntarily hearing music in our mind without external stimulation. It is unclear, however, what the temporal dynamics of this activation are, as well as what musical features are precisely encoded in the neural signals.

This study uses an experimental paradigm with high temporal precision to record and analyze the cortical activity during musical imagery. This study reveals that neural signals encode music acoustics and melodic expectations during both listening and imagery.

Crucially, it is also found that a simple mapping based on a time-shift and a polarity inversion could robustly describe the relationship between listening and imagery signals.
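To make the idea of a "time-shift and polarity inversion" mapping concrete, here is a minimal sketch in Python. It is not the authors' analysis pipeline. The function names, the synthetic signals, and the choice of a simple correlation search over candidate delays are all assumptions made for illustration. The sketch looks for the delay and sign that best align one signal (standing in for a listening response) with another (standing in for an imagery response).

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def fit_shift_and_polarity(listen, imagery, max_lag):
    """Hypothetical helper: find the delay (in samples) and sign that
    best map `listen` onto `imagery`.

    Every delay from 0 to max_lag is tried; the delay whose correlation
    has the largest absolute value wins, and the sign of that
    correlation gives the polarity (+1 or -1).
    """
    best_lag, best_r = 0, 0.0
    for lag in range(max_lag + 1):
        # Pair listen[t] with imagery[t + lag].
        a = listen[: len(listen) - lag]
        b = imagery[lag:]
        r = pearson(a, b)
        if abs(r) > abs(best_r):
            best_lag, best_r = lag, r
    polarity = 1 if best_r >= 0 else -1
    return best_lag, polarity, best_r


# Synthetic demonstration only (random numbers, not EEG data):
# the "imagery" signal is the "listening" signal delayed by 5 samples,
# flipped in sign, with a little noise added.
rng = random.Random(0)
listen = [rng.gauss(0, 1) for _ in range(1000)]
true_lag = 5
imagery = [0.0] * true_lag + [-v for v in listen[:-true_lag]]
imagery = [v + 0.1 * rng.gauss(0, 1) for v in imagery]

lag, polarity, r = fit_shift_and_polarity(listen, imagery, max_lag=20)
print(lag, polarity)
```

On this synthetic input the search recovers the built-in delay and a negative polarity. With real recordings, the delay would be expressed in samples at the EEG sampling rate, and a regression-based approach like the one described in the abstract would likely be more appropriate than this simple search.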