Summary: Researchers have investigated the mouth clicks in human echolocation. They hope to use synthetic human clicks to investigate how sounds can reveal the physical features of an object.

Source: PLOS.

Findings could aid efforts to use synthetic mouth clicks to better understand human echolocation.

Like some bats and marine mammals, people can develop expert echolocation skills, in which they produce a clicking sound with their mouths and listen to the reflected sound waves to “see” their surroundings. A new study published in PLOS Computational Biology provides the first in-depth analysis of the mouth clicks used in human echolocation.

The research, performed by Lore Thaler of Durham University, U.K., Galen Reich and Michail Antoniou of the University of Birmingham, U.K., and colleagues, focuses on three blind adults who have been expertly trained in echolocation. Since the age of 15 or younger, all three have used echolocation in their daily lives. They use the technique for such activities as hiking, visiting unfamiliar cities, and riding bicycles.

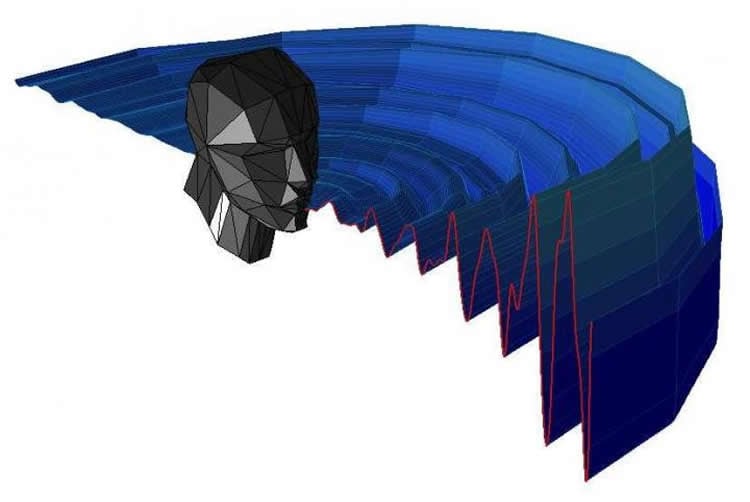

While the existence of human echolocation is well documented, the details of the underlying acoustic mechanisms have been unclear. In the new study, the researchers set out to provide physical descriptions of the mouth clicks used by each of the three participants during echolocation. They recorded and analyzed the acoustic properties of several thousand clicks, including the spatial path the sound waves took in an acoustically controlled room.

Analysis of the recordings revealed that the clicks made by the participants had a distinct acoustic pattern that was more focused in its direction than that of human speech. The clicks were brief, around three milliseconds long, and their strongest frequencies were between two and four kilohertz, with some additional strength around 10 kilohertz.

The researchers also used the recordings to propose a mathematical model that could be used to synthesize mouth clicks made during human echolocation. They plan to use synthetic human clicks to investigate how these sounds can reveal the physical features of objects; the number of measurements required for such studies would be impractical to ask of human volunteers.

“The results allow us to create virtual human echolocators,” Thaler says. “This allows us to embark on an exciting new journey in human echolocation research.”

Funding: This work was supported by the British Council and the Department for Business, Innovation and Skills in the UK (award SC037733) to the GII Seeing with Sound Consortium. This work was partially supported by a Biotechnology and Biological Sciences Research Council grant to LT (BB/M007847/1). The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Source: Lore Thaler – PLOS

Image Source: NeuroscienceNews.com image is credited to Thaler et al.

Original Research: Full open access research for “Mouth-clicks used by blind expert human echolocators – signal description and model based signal synthesis” by Lore Thaler, Galen M. Reich, Xinyu Zhang, Dinghe Wang, Graeme E. Smith, Zeng Tao, Raja Syamsul Azmir Bin. Raja Abdullah, Mikhail Cherniakov, Christopher J. Baker, Daniel Kish, and Michail Antoniou in PLOS Computational Biology. Published online August 31 2017 doi:10.1371/journal.pcbi.1005670

MLA: PLOS. “Mouth Clicks Used in Human Echolocation Captured in Unprecedented Detail.” NeuroscienceNews. NeuroscienceNews, 3 September 2017. <https://neurosciencenews.com/echolocation-mouth-clicks-7402/>.

APA: PLOS (2017, September 3). Mouth Clicks Used in Human Echolocation Captured in Unprecedented Detail. NeuroscienceNews. Retrieved September 3, 2017 from https://neurosciencenews.com/echolocation-mouth-clicks-7402/

Chicago: PLOS. “Mouth Clicks Used in Human Echolocation Captured in Unprecedented Detail.” https://neurosciencenews.com/echolocation-mouth-clicks-7402/ (accessed September 3, 2017).

Abstract

Mouth-clicks used by blind expert human echolocators – signal description and model based signal synthesis

Echolocation is the ability to use sound-echoes to infer spatial information about the environment. Some blind people have developed extraordinary proficiency in echolocation using mouth-clicks. The first step of human biosonar is the transmission (mouth click) and subsequent reception of the resultant sound through the ear. Existing head-related transfer function (HRTF) data bases provide descriptions of reception of the resultant sound. For the current report, we collected a large database of click emissions with three blind people expertly trained in echolocation, which allowed us to perform unprecedented analyses. Specifically, the current report provides the first ever description of the spatial distribution (i.e. beam pattern) of human expert echolocation transmissions, as well as spectro-temporal descriptions at a level of detail not available before. Our data show that transmission levels are fairly constant within a 60° cone emanating from the mouth, but levels drop gradually at further angles, more than for speech. In terms of spectro-temporal features, our data show that emissions are consistently very brief (~3ms duration) with peak frequencies 2-4kHz, but with energy also at 10kHz. This differs from previous reports of durations 3-15ms and peak frequencies 2-8kHz, which were based on less detailed measurements. Based on our measurements we propose to model transmissions as sum of monotones modulated by a decaying exponential, with angular attenuation by a modified cardioid. We provide model parameters for each echolocator. These results are a step towards developing computational models of human biosonar. For example, in bats, spatial and spectro-temporal features of emissions have been used to derive and test model based hypotheses about behaviour. The data we present here suggest similar research opportunities within the context of human echolocation. 
Relatedly, the data are a basis to develop synthetic models of human echolocation that could be virtual (i.e. simulated) or real (i.e. loudspeaker, microphones), and which will help understanding the link between physical principles and human behaviour.
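
The model described in the abstract (a sum of monotones modulated by a decaying exponential, with an angular attenuation term) can be illustrated with a minimal Python sketch. Everything numeric here is an assumption chosen for illustration: the sample rate, the two tone frequencies (picked from the reported 2-4 kHz peak and the 10 kHz energy), the amplitudes, and the decay rate are not the fitted per-echolocator parameters from the paper. The directivity function is a plain textbook cardioid standing in for the paper's modified cardioid, whose exact form is not given in this article. The function names `synth_click` and `cardioid_gain` are hypothetical.

```python
import numpy as np


def synth_click(fs=96_000, duration_s=0.003,
                freqs_hz=(3000.0, 10000.0), amps=(1.0, 0.3),
                decay_per_s=2000.0):
    """Return (t, click): a sum of sine tones under a decaying exponential.

    All default values are illustrative assumptions, not parameters
    reported in the study.
    """
    n = int(round(fs * duration_s))          # number of samples (~3 ms click)
    t = np.arange(n) / fs                    # time axis in seconds
    tones = sum(a * np.sin(2 * np.pi * f * t)
                for a, f in zip(amps, freqs_hz))
    envelope = np.exp(-decay_per_s * t)      # decaying exponential envelope
    return t, envelope * tones


def cardioid_gain(theta_rad):
    """Plain cardioid directivity: 1 straight ahead, 0 directly behind.

    This is a stand-in for the paper's *modified* cardioid.
    """
    return 0.5 * (1.0 + np.cos(theta_rad))


if __name__ == "__main__":
    t, click = synth_click()
    # Scale the on-axis click to estimate the level 60 degrees off axis.
    off_axis = cardioid_gain(np.deg2rad(60.0)) * click
    print(len(click), float(np.max(np.abs(off_axis))))
```

A sketch like this produces a waveform that could be played through a loudspeaker or fed into a simulation, which is the kind of "virtual echolocator" use the authors describe; matching a real echolocator would require the parameters published in the paper.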
