Summary: A new study reveals that neurons in visual area V4 do not necessarily have only low visual resolution.

Source: Chinese Academy of Sciences.

A long-standing paradox in vision is that the complexity of neural encoding increases along the visual hierarchy even as visual resolution dramatically decreases. Put differently, how can people recognize a child’s face while simultaneously resolving individual eyelashes?

The idea that sensory transformation discards low-level detail to yield invariant classification is central to many models of brain function. Some complex models then invoke large re-entrant loops to resolve this fundamental paradox of increasing complexity vis-à-vis decreasing resolution.

Primates can identify objects within the central 10° of the visual field in under 150 ms, suggesting an initial fast cascade of largely feedforward processing. How they can effortlessly perceive both the global and local features of objects, in great detail and in so short a time, remains a mystery. A central question in vision research is how the cortex integrates local visual cues into global representations along the object-processing visual hierarchy.

In a recent study published in Neuron, Dr. WANG Wei’s lab at the Institute of Neuroscience of the Chinese Academy of Sciences revealed unexpected neural clustering that preserves visual acuity from V1 into V4, allowing local and global features to be processed separately in space and time along the hierarchy.

The work from Dr. WANG’s group set out to test whether low-level information such as spatial resolution is preserved along the visual hierarchy and, if so, what its functional implications are. The answer is fundamental to understanding how the brain performs sensory transformation.

Dr. WANG’s lab analyzed spatial resolution (i.e., visual acuity) simultaneously across macaque parafoveal V1, V2, and V4 to track how it is transformed from one area to the next. Spatial resolution is often measured through spatial frequency (SF) discrimination, with SF expressed in cycles per degree of visual angle (cycles/°). The researchers focused particularly on spatial analysis in V4, which links the analysis of local features in V1 and V2 with the global object representation provided by inferotemporal cortex (IT).
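
To make the unit concrete, the following minimal Python sketch (an illustration, not the authors’ stimulus code) converts an SF in cycles/° into the angular width of one grating cycle and into a pixel period on a display. The helper names and the `pixels_per_degree` parameter are hypothetical; a real setup would derive pixels per degree from screen geometry and viewing distance.

```python
import math


def cycle_period_arcmin(sf_cpd: float) -> float:
    """Angular width of one grating cycle, in arcminutes, for an SF in cycles/degree."""
    if sf_cpd <= 0:
        raise ValueError("spatial frequency must be positive")
    return 60.0 / sf_cpd  # 1 degree = 60 arcminutes


def cycle_period_pixels(sf_cpd: float, pixels_per_degree: float) -> float:
    """Width of one grating cycle in pixels, given a hypothetical display resolution."""
    if sf_cpd <= 0 or pixels_per_degree <= 0:
        raise ValueError("spatial frequency and pixels_per_degree must be positive")
    return pixels_per_degree / sf_cpd


def grating_row(sf_cpd: float, pixels_per_degree: float, width_px: int) -> list[float]:
    """One row of a sinusoidal luminance grating, with contrast values in [-1, 1]."""
    # Fewer than 2 pixels per cycle would alias the grating to a lower SF.
    if cycle_period_pixels(sf_cpd, pixels_per_degree) < 2:
        raise ValueError("display too coarse to render this SF without aliasing")
    return [math.sin(2 * math.pi * sf_cpd * x / pixels_per_degree) for x in range(width_px)]


if __name__ == "__main__":
    for sf in (0.5, 2.0, 12.0):
        print(f"{sf:>5} cycles/deg -> {cycle_period_arcmin(sf):.1f} arcmin per cycle")
```

At 12 cycles/°, the highest SF reported for the V4 clusters, one full cycle spans 60 / 12 = 5 arcmin of visual angle. The aliasing check reflects the sampling theorem: a display needs at least two pixels per cycle to render a grating at a given SF.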

Surprisingly, they discovered clustered “islands” of V4 neurons selective for high SFs up to 12 cycles/°, far exceeding the average optimal SFs of V1 and V2 neurons at similar retinal eccentricities. These clusters violate the usual inverse relationship between visual acuity and retinal eccentricity, in which preferred SF falls as stimuli move away from the center of gaze.

They went on to show that higher-acuity clusters represent local features, whereas lower-acuity clusters represent global features of the same stimuli. Furthermore, neurons in the high-SF clusters responded around 10 ms later than those in low-SF domains, providing neural evidence, at an intermediate level of the visual processing hierarchy, that is consistent with the coarse-to-fine nature of perception.
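
Response latency is often estimated from a peristimulus time histogram (PSTH) as the first post-stimulus time at which the firing rate exceeds a baseline threshold. The Python sketch below applies that generic criterion to two synthetic, made-up traces whose onsets differ by 10 ms; it is an assumption for illustration only, since the article does not describe how the study measured latency. The function names, the 1 ms bins, the mean + 3 SD threshold, and the three-bin persistence rule are all hypothetical choices.

```python
import statistics


def onset_latency_ms(rates, baseline_bins=50, bin_ms=1.0, n_sd=3.0, min_consecutive=3):
    """First post-stimulus time (ms) at which the rate exceeds baseline mean + n_sd * SD
    for at least `min_consecutive` consecutive bins, or None if it never does.

    `rates` holds one firing rate per bin; the first `baseline_bins` bins precede
    stimulus onset and define the baseline.
    """
    baseline = rates[:baseline_bins]
    threshold = statistics.mean(baseline) + n_sd * statistics.pstdev(baseline)
    run = 0
    for i in range(baseline_bins, len(rates)):
        if rates[i] > threshold:
            run += 1
            if run == min_consecutive:
                first_bin = i - min_consecutive + 1  # start of the supra-threshold run
                return (first_bin - baseline_bins) * bin_ms
        else:
            run = 0  # require consecutive bins so a single noisy bin does not count
    return None


def synthetic_trace(onset_ms, baseline_bins=50, post_bins=150):
    """Made-up PSTH in 1 ms bins: baseline alternating 9/11 spikes/s, then a step to 30."""
    baseline = [9.0 if i % 2 == 0 else 11.0 for i in range(baseline_bins)]
    post = [10.0 if t < onset_ms else 30.0 for t in range(post_bins)]
    return baseline + post


if __name__ == "__main__":
    low_sf = onset_latency_ms(synthetic_trace(onset_ms=40))
    high_sf = onset_latency_ms(synthetic_trace(onset_ms=50))
    print(low_sf, high_sf, high_sf - low_sf)
```

With these constructed traces the sketch reports onsets of 40.0 ms and 50.0 ms; the numbers are synthetic and do not reproduce any data from the study. Requiring several consecutive supra-threshold bins is one common way to keep a single noisy bin from being mistaken for the response onset.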

The study demonstrated that V4 neurons do not necessarily have only low visual resolution, and the same most likely holds for neurons in IT. The findings should prompt further studies of how this preservation of low-level information supports higher-level vision.

The study is the first to show that area V4 is unexpectedly compartmentalized into SF-selective functional domains that extend to high visual acuity. Because higher acuities are preserved into later stages of the visual hierarchy, where more complex visual cognitive behavior occurs, the finding may begin to resolve the long-standing paradox of how fine visual discrimination is achieved in visual perception.

The data from Dr. WANG’s lab could inform a conceptual reevaluation of the processing models that currently dominate systems neuroscience and artificial neural networks such as deep neural networks (DNNs).

Funding: The research was supported by the Chinese Academy of Sciences and the National Natural Science Foundation of China.

Source: Wang Wei – Chinese Academy of Sciences

Publisher: Organized by NeuroscienceNews.com.

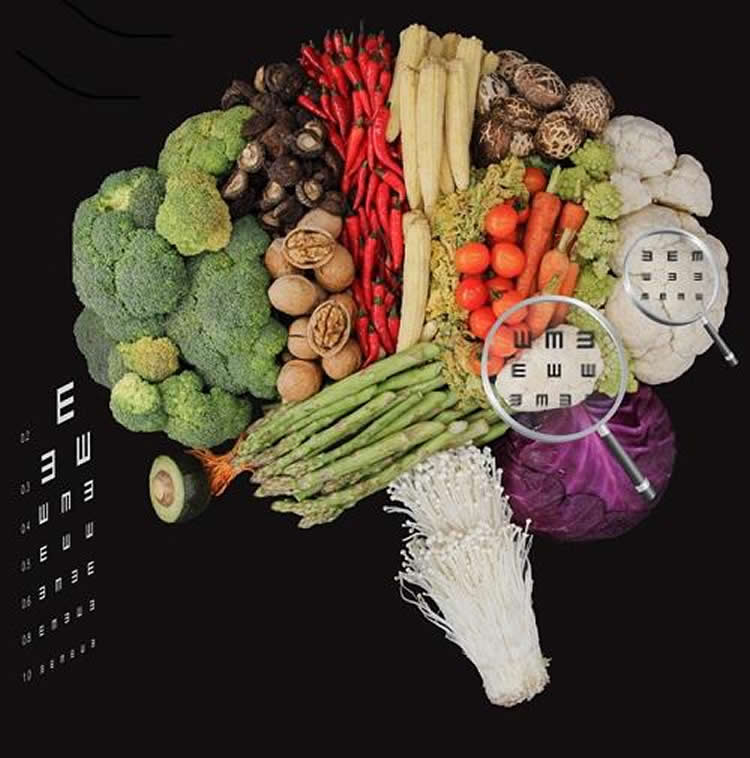

Image Source: NeuroscienceNews.com image is credited to Drs. WANG Wei and LU Yiliang.

Original Research: Abstract for “Revealing Detail along the Visual Hierarchy: Neural Clustering Preserves Acuity from V1 to V4” by Yiliang Lu, Jiapeng Yin, Zheyuan Chen, Hongliang Gong, Ye Liu, Liling Qian, Xiaohong Li, Rui Liu, Ian Max Andolina, and Wei Wang in Neuron. Published March 29, 2018.

doi:10.1016/j.neuron.2018.03.009

MLA: Chinese Academy of Sciences. “Increasing Understanding of the Coarse-to-Fine Human Visual Perception.” NeuroscienceNews. NeuroscienceNews, 29 March 2018. <https://neurosciencenews.com/coarse-fine-visual-perception-8710/>.

APA: Chinese Academy of Sciences (2018, March 29). Increasing Understanding of the Coarse-to-Fine Human Visual Perception. NeuroscienceNews. Retrieved March 29, 2018 from https://neurosciencenews.com/coarse-fine-visual-perception-8710/

Chicago: Chinese Academy of Sciences. “Increasing Understanding of the Coarse-to-Fine Human Visual Perception.” https://neurosciencenews.com/coarse-fine-visual-perception-8710/ (accessed March 29, 2018).

Abstract

Revealing Detail along the Visual Hierarchy: Neural Clustering Preserves Acuity from V1 to V4

Highlights

• Simultaneous population analysis of visual resolution across macaque V1, V2, and V4

• Unexpected neural clustering in V4 selective for high SF up to 12 cycles/°

• Neural activity of high and low SF clusters links to local and global features

• Response latency of SF-selective clusters agrees with coarse-to-fine perception

Summary

How primates perceive objects along with their detailed features remains a mystery. This ability to make fine visual discriminations depends upon a high-acuity analysis of spatial frequency (SF) along the visual hierarchy from V1 to inferotemporal cortex. By studying the transformation of SF across macaque parafoveal V1, V2, and V4, we discovered SF-selective functional domains in V4 encoding higher SFs up to 12 cycles/°. These intermittent higher-SF-selective domains, surrounded by domains encoding lower SFs, violate the inverse relationship between SF preference and retinal eccentricity. The neural activities of higher- and lower-SF domains correspond to local and global features, respectively, of the same stimuli. Neural response latencies in high-SF domains are around 10 ms later than in low-SF domains, consistent with the coarse-to-fine nature of perception. Thus, our finding of preserved resolution from V1 into V4, separated both spatially and temporally, may serve as a connecting link for detailed object representation.