Neuroscientists at Duke Health have developed a brain-machine interface (BMI) that allows primates to use only their thoughts to navigate a robotic wheelchair.

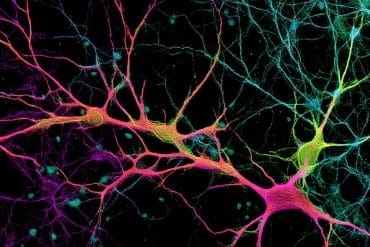

The BMI uses signals from hundreds of neurons recorded simultaneously in two regions of the monkeys’ brains that are involved in movement and sensation. As the animals think about moving toward their goal — in this case, a bowl containing fresh grapes — computers translate their brain activity into real-time operation of the wheelchair.

The interface, described in the March 3 issue of the online journal Scientific Reports, shows future potential for people who have lost most muscle control and mobility to quadriplegia or ALS, said senior author Miguel Nicolelis, M.D., Ph.D., co-director of the Duke Center for Neuroengineering.

“In some severely disabled people, even blinking is not possible,” Nicolelis said. “For them, using a wheelchair or device controlled by noninvasive measures like an EEG (a device that monitors brain waves through electrodes on the scalp) may not be sufficient. We show clearly that if you have intracranial implants, you get better control of a wheelchair than with noninvasive devices.”

Scientists began the experiments in 2012, implanting hundreds of hair-thin microfilaments in the premotor and somatosensory regions of the brains of two rhesus macaques. They trained the animals by passively moving the chair, with the monkey seated in it, toward the goal: the bowl containing grapes. During this training phase, the scientists recorded the primates’ large-scale electrical brain activity. The researchers then programmed a computer system to translate brain signals into digital motor commands that controlled the movements of the wheelchair.
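The article does not say which decoding algorithm the computer system used. As a rough illustration of the general idea only, the sketch below fits a regularized linear map from binned firing rates to two wheelchair commands (translational and rotational velocity) using data from a passive training phase, then applies it to new activity. Everything here is an assumption made for the example: the function names, the ridge penalty, and the synthetic data that stand in for real recordings.

```python
import numpy as np


def fit_linear_decoder(rates, velocities, ridge=1e-3):
    """Fit weights mapping firing rates to wheelchair velocities.

    rates:      (T, N) array, one row of binned spike counts per time bin
    velocities: (T, 2) array, [translational, rotational] velocity per bin
    ridge:      small penalty that keeps the solution stable (assumed value)
    """
    # Append a column of ones so the decoder can learn a baseline offset.
    X = np.hstack([rates, np.ones((rates.shape[0], 1))])
    A = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ velocities)  # shape (N + 1, 2)


def decode(weights, rates):
    """Translate new firing rates into predicted velocity commands."""
    X = np.hstack([rates, np.ones((rates.shape[0], 1))])
    return X @ weights


def make_synthetic_session(n_bins=2000, n_neurons=300, seed=0):
    """Hypothetical stand-in for recorded data: noisy, linearly tuned neurons."""
    rng = np.random.default_rng(seed)
    velocities = rng.normal(size=(n_bins, 2))
    tuning = rng.normal(size=(2, n_neurons))
    noise = rng.normal(scale=0.5, size=(n_bins, n_neurons))
    rates = velocities @ tuning + 5.0 + noise
    return rates, velocities


if __name__ == "__main__":
    rates, velocities = make_synthetic_session()
    # "Passive" phase: fit on the first 1500 bins, then decode the rest.
    weights = fit_linear_decoder(rates[:1500], velocities[:1500])
    predicted = decode(weights, rates[1500:])
    print(predicted.shape)
```

A linear map is used here because it is the simplest decoder that can be fit directly from paired recordings of neural activity and chair movement; a real system would also need to handle time lags, signal drift, and safety limits on the motor commands.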

As the monkeys learned to control the wheelchair just by thinking, they became more efficient at navigating toward the grapes and completed the trials faster, Nicolelis said.

In addition to observing brain signals that corresponded to translational and rotational movement, the Duke team discovered that the primates’ brain signals showed signs they were contemplating their distance to the bowl of grapes.

“This was not a signal that was present in the beginning of the training, but something that emerged as an effect of the monkeys becoming proficient in this task,” Nicolelis said. “This was a surprise. It demonstrates the brain’s enormous flexibility to assimilate a device, in this case a wheelchair, and that device’s spatial relationships to the surrounding world.”

The trials measured the activity of nearly 300 neurons in each of the two monkeys. The Nicolelis lab previously reported the ability to record up to 2,000 neurons using the same technique. The team now hopes to expand the experiment by recording more neuronal signals to continue to increase the accuracy and fidelity of the primate BMI before seeking trials for an implanted device in humans, he said.

In addition to Nicolelis, study authors include Sankaranarayani Rajangam; Po-He Tseng; Allen Yin; Gary Lehew; David Schwarz; and Mikhail A. Lebedev.

Funding: The National Institutes of Health (DP1MH099903) funded this study. The Itau Bank of Brazil provided research support to the study as part of the Walk Again Project, an international non-profit consortium aimed at developing new assistive technologies for severely paralyzed patients. The authors declared no competing financial interests.

Source: Samiha Khanna – Duke Health

Image Credit: The image is credited to Shawn Rocco/Duke Health.

Original Research: Full open access research for “Wireless Cortical Brain-Machine Interface for Whole-Body Navigation in Primates” by Sankaranarayani Rajangam, Po-He Tseng, Allen Yin, Gary Lehew, David Schwarz, Mikhail A. Lebedev and Miguel A. L. Nicolelis in Scientific Reports. Published online March 3, 2016. doi:10.1038/srep22170

Abstract

Wireless Cortical Brain-Machine Interface for Whole-Body Navigation in Primates

How does an animal know where it is when it stops moving? Hippocampal place cells fire at discrete locations as subjects traverse space, thereby providing an explicit neural code for current location during locomotion. In contrast, during awake immobility, the hippocampus is thought to be dominated by neural firing representing past and possible future experience. The question of whether and how the hippocampus constructs a representation of current location in the absence of locomotion has been unresolved. Here we report that a distinct population of hippocampal neurons, located in the CA2 subregion, signals current location during immobility, and does so in association with a previously unidentified hippocampus-wide network pattern. In addition, signalling of location persists into brief periods of desynchronization prevalent in slow-wave sleep. The hippocampus thus generates a distinct representation of current location during immobility, pointing to mnemonic processing specific to experience occurring in the absence of locomotion.
