Summary: A new brain-computer interface (BCI) has enabled a paralyzed man to control a robotic arm simply by imagining movements. Unlike previous BCIs, which worked for only a day or two before needing adjustment, this AI-enhanced device worked reliably for seven months. The AI model adapts to natural shifts in brain activity, maintaining accuracy over time.

After training with a virtual arm, the participant successfully grasped, moved, and manipulated real-world objects. The technology represents a major step toward restoring movement for people with paralysis. Researchers are now refining the system for smoother operation and testing its use in home settings.

Key Facts:

- Long-Term Stability: The AI-enhanced BCI functioned for seven months, far longer than previous versions.

- Adaptive Learning: The system adjusts to daily shifts in brain activity, maintaining accuracy.

- Real-World Use: The participant controlled a robotic arm to pick up objects and use a water dispenser.

Source: UCSF

Researchers at UC San Francisco have enabled a man who is paralyzed to control a robotic arm through a device that relays signals from his brain to a computer.

He was able to grasp, move and drop objects just by imagining himself performing the actions.

The device, known as a brain-computer interface (BCI), worked for a record seven months without needing to be adjusted. Until now, such devices have worked for only a day or two.

The BCI relies on an AI model that can adjust to the small changes that take place in the brain as a person repeats a movement – or in this case, an imagined movement – and learns to do it in a more refined way.

“This blending of learning between humans and AI is the next phase for these brain-computer interfaces,” said neurologist Karunesh Ganguly, MD, PhD, a professor of neurology and a member of the UCSF Weill Institute for Neurosciences. “It’s what we need to achieve sophisticated, lifelike function.”

The study, which was funded by the National Institutes of Health, appears March 6 in Cell.

The key was the discovery of how activity shifts in the brain day to day as a study participant repeatedly imagined making specific movements. Once the AI was programmed to account for those shifts, it worked for months at a time.

Location, location, location

Ganguly studied how patterns of brain activity in animals represent specific movements and saw that these representations changed from day to day as the animal learned. He suspected the same thing was happening in humans, and that this was why their BCIs so quickly lost the ability to recognize these patterns.

Ganguly and neurology researcher Nikhilesh Natraj, PhD, worked with a study participant who had been paralyzed by a stroke years earlier. He could not speak or move.

He had tiny sensors implanted on the surface of his brain that could pick up brain activity when he imagined moving.

To see whether his brain patterns changed over time, Ganguly asked the participant to imagine moving different parts of his body, like his hands, feet or head.

Although he couldn’t actually move, the participant’s brain could still produce the signals for a movement when he imagined himself doing it. The BCI recorded the brain’s representations of these movements through the sensors on his brain.

Ganguly’s team found that the shape of representations in the brain stayed the same, but their locations shifted slightly from day to day.
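The finding above can be pictured with a toy simulation. The sketch below is not the study's model or data: the four "movements", the eight-dimensional feature space, the noise level, the size of the drift and the nearest-centroid decoder are all assumptions chosen for illustration. It shows how the distances between movement representations (their "shape") can stay nearly constant while their overall location moves, and why a decoder frozen on the first day then stops recognizing the patterns.

```python
import numpy as np

rng = np.random.default_rng(0)
N_CLASSES, N_DIMS, N_TRIALS = 4, 8, 50

# Hypothetical fixed "shape": one centroid per imagined movement.
centroids = 5.0 * np.eye(N_CLASSES, N_DIMS)

def simulate_day(offset):
    """Simulate one day of trials; `offset` moves every movement together."""
    labels = np.repeat(np.arange(N_CLASSES), N_TRIALS)
    noise = rng.normal(scale=0.5, size=(labels.size, N_DIMS))
    return centroids[labels] + offset + noise, labels

def class_means(x, y):
    """Average feature vector for each movement."""
    return np.stack([x[y == c].mean(axis=0) for c in range(N_CLASSES)])

def pairwise_distances(m):
    """Distances between every pair of movement means."""
    return np.linalg.norm(m[:, None, :] - m[None, :, :], axis=-1)

def accuracy(means, x, y):
    """Nearest-centroid decoding accuracy."""
    d = np.linalg.norm(x[:, None, :] - means[None, :, :], axis=-1)
    return float((d.argmin(axis=1) == y).mean())

# Assumed drift: the whole pattern slides along one feature dimension.
drift = np.zeros(N_DIMS)
drift[0] = 10.0

x_day0, y_day0 = simulate_day(np.zeros(N_DIMS))
x_later, y_later = simulate_day(drift)
m_day0, m_later = class_means(x_day0, y_day0), class_means(x_later, y_later)

# Shape: how much the distances between movements changed across days.
shape_change = np.abs(pairwise_distances(m_day0) - pairwise_distances(m_later)).max()
# Location: how far the center of all movements travelled.
location_shift = np.linalg.norm(m_later.mean(axis=0) - m_day0.mean(axis=0))
# A decoder frozen on day 0, applied to the later day.
acc_same_day = accuracy(m_day0, x_day0, y_day0)
acc_frozen = accuracy(m_day0, x_later, y_later)

print(f"shape change: {shape_change:.2f}, location shift: {location_shift:.2f}")
print(f"same-day accuracy: {acc_same_day:.2f}, frozen decoder later: {acc_frozen:.2f}")
```

In this toy setup the shape change is small compared with the location shift, yet the frozen decoder falls to roughly chance level on the later day, because it still expects each movement at its old location.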

From virtual to reality

Ganguly then asked the participant to imagine himself making simple movements with his fingers, hands or thumbs over the course of two weeks, while the sensors recorded his brain activity to train the AI.

Then, the participant tried to control a robotic arm and hand. But the movements still weren’t very precise.

So, Ganguly had the participant practice on a virtual robot arm that gave him feedback on the accuracy of his visualizations. Eventually, he got the virtual arm to do what he wanted it to do.

Once the participant began practicing with the real robot arm, it only took a few practice sessions for him to transfer his skills to the real world.

He could make the robotic arm pick up blocks, turn them and move them to new locations. He was even able to open a cabinet, take out a cup and hold it up to a water dispenser.

Months later, the participant was still able to control the robotic arm after a 15-minute “tune-up” to adjust for how his movement representations had drifted since he had begun using the device.
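The article does not say what the tune-up does computationally. One simple way to picture it, offered purely as an assumption, is a short calibration session whose only job is to estimate how far the representations have moved, after which the existing decoder is shifted by that amount rather than retrained. Everything in the sketch below (the toy centroids, the noise level, the drift, five calibration trials per movement and the nearest-centroid decoder) is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
N_CLASSES, N_DIMS = 4, 8

# Hypothetical fixed "shape": one centroid per imagined movement.
centroids = 5.0 * np.eye(N_CLASSES, N_DIMS)

def simulate(offset, trials_per_class):
    """Simulate trials, balanced across movements, at a given drift offset."""
    labels = np.repeat(np.arange(N_CLASSES), trials_per_class)
    noise = rng.normal(scale=0.5, size=(labels.size, N_DIMS))
    return centroids[labels] + offset + noise, labels

def accuracy(means, x, y):
    """Nearest-centroid decoding accuracy."""
    d = np.linalg.norm(x[:, None, :] - means[None, :, :], axis=-1)
    return float((d.argmin(axis=1) == y).mean())

# Initial training: learn one mean per movement.
x_train, y_train = simulate(np.zeros(N_DIMS), trials_per_class=50)
decoder_means = np.stack(
    [x_train[y_train == c].mean(axis=0) for c in range(N_CLASSES)]
)

# Months later: assumed drift along one feature dimension.
drift = np.zeros(N_DIMS)
drift[0] = 10.0
x_cal, _ = simulate(drift, trials_per_class=5)      # short "tune-up" session
x_test, y_test = simulate(drift, trials_per_class=50)

acc_before = accuracy(decoder_means, x_test, y_test)

# Tune-up: estimate the shift from the calibration trials alone, then move
# the decoder's means by that amount. The decoder's shape is left untouched.
estimated_drift = x_cal.mean(axis=0) - x_train.mean(axis=0)
tuned_means = decoder_means + estimated_drift
acc_after = accuracy(tuned_means, x_test, y_test)

print(f"before tune-up: {acc_before:.2f}, after tune-up: {acc_after:.2f}")
```

Because only a single offset is estimated, a handful of trials is enough in this toy case. That works here only because the simulated drift moves every movement together, which mirrors the reported finding that the shape of the representations stayed the same while their location shifted.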

Ganguly is now refining the AI models to make the robotic arm move faster and more smoothly, and planning to test the BCI in a home environment.

For people with paralysis, the ability to feed themselves or get a drink of water would be life changing.

Ganguly thinks this is within reach.

“I’m very confident that we’ve learned how to build the system now, and that we can make this work,” he said.

Authors: Other authors of this study include Sarah Seko and Adelyn Tu-Chan of UCSF and Reza Abiri of the University of Rhode Island.

Funding: This work was supported by the National Institutes of Health (1 DP2 HD087955) and the UCSF Weill Institute for Neurosciences.

About this AI and robotics research news

Author: Robin Marks

Source: UCSF

Contact: Robin Marks – UCSF

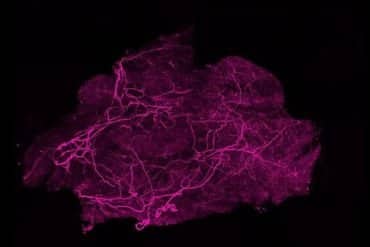

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Sampling representational plasticity of simple imagined movements across days enables long-term neuroprosthetic control” by Karunesh Ganguly et al. Cell

Abstract


The nervous system needs to balance the stability of neural representations with plasticity. It is unclear what the representational stability of simple well-rehearsed actions is, particularly in humans, and their adaptability to new contexts.

Using an electrocorticography brain-computer interface (BCI) in tetraplegic participants, we found that the low-dimensional manifold and relative representational distances for a repertoire of simple imagined movements were remarkably stable.

The manifold’s absolute location, however, demonstrated constrained day-to-day drift. Strikingly, neural statistics, especially variance, could be flexibly regulated to increase representational distances during BCI control without somatotopic changes.

Discernability strengthened with practice and was BCI-specific, demonstrating contextual specificity.

Sampling representational plasticity and drift across days subsequently uncovered a meta-representational structure with generalizable decision boundaries for the repertoire; this allowed long-term neuroprosthetic control of a robotic arm and hand for reaching and grasping.

Our study offers insights into mesoscale representational statistics that also enable long-term complex neuroprosthetic control.