Summary: As traditional healthcare systems struggle with long waiting lists and rising costs, a massive global survey reveals a seismic shift in public trust toward Artificial Intelligence. The study, involving nearly 31,000 adults across 35 countries, found that 41% of UK adults (and 61% globally) are now comfortable using ChatGPT as a mental health counselor.

While AI’s non-judgmental tone and 24/7 availability offer a sense of security and companionship for many, experts warn that these tools are “no substitute” for professional care and raise concerns about the long-term impact on cognitive functions like memory and learning.

Key Facts

- The “Counselor” Shift: 41% of UK respondents would use AI for counseling, likely driven by long wait times for traditional mental health services.

- Universal Companion: Three-quarters of people globally (and over half in the UK) are willing to use AI as a friend or companion, drawn to its adaptive tone and “private” conversation feel.

- Trust in Medicine: 45% of people globally (25% in the UK) would trust AI to act as their doctor, with higher trust levels in regions where healthcare is expensive or inaccessible.

- Educational Concerns: 25% of UK adults would delegate teaching their children to AI. Researchers warn this could lead to “prompt-focused” learning rather than deep information retention.

- Biological Risks: Neuroscientists expressed concern that replacing traditional learning with excessive AI reliance could physically shrink the hippocampus, the brain region critical for memory and spatial awareness.

Source: Bournemouth University

More than 4 in 10 adults in the UK are happy to use ChatGPT for their mental health support, new research suggests.

The study, led by Bournemouth University, surveyed nearly 31,000 adults in 35 countries about their use of Artificial Intelligence (AI) large language models such as ChatGPT. The research also discovered that:

- One quarter of UK adults would be happy to delegate the role of teaching their children to AI.

- Globally, 45% of people would trust AI models to take on the role of their doctor.

- Three quarters of people surveyed said they would use an AI chat tool as a companion and a friend.

The study has been published in the journal AI and Society.

Dr Ala Yankouskaya, Senior Lecturer in Psychology at Bournemouth University, who led the study, said: “With the rapid development and mass availability of AI, more people are placing their trust in it. We wanted to learn more about how people would trust generative AI tools, such as ChatGPT, to carry out some of the most important roles in their daily lives.”

AI for mental health support

41% of participants from the UK, and 61% globally, said that they would be happy to use AI for counselling services. The researchers suggest that for the UK, this could be the result of the waiting times many people face to access the mental health services that they need.

“If someone is experiencing depression, they do not want to wait months for an appointment, so instead they can turn to AI,” Dr Yankouskaya said.

“However, when I tested some of the tools myself, I found the language used very vague and confusing because the developers are careful not to jump into providing diagnoses. So, it is no substitute for speaking to a health professional.”

The researchers also noted that users were already familiar with NHS chatbots, which use similar AI technology, and this could be normalising their use of AI in other apps such as ChatGPT for their mental health care.

AI as a teacher

A quarter of people in the UK and half of everyone surveyed globally said that they would trust AI to carry out the role of a teacher, which the research team found particularly concerning.

“It really knocked me down when I saw how many people would be willing to delegate AI to the role of teaching their children,” Dr Yankouskaya explained.

“We still do not know the long-term effects that using these tools for education could have on children’s memory and cognitive functions. We could be heading to the stage where we are developing children who are good at putting prompts into AI tools but not as good at taking the information in,” she continued.

The researchers were also concerned about the long-term physical effects on the brain if learning information in the traditional way was replaced by excessive AI use, and whether this could shrink the hippocampus, the region of the brain that is used for spatial awareness and learning.

AI as a doctor

45% of all respondents and 25% in the UK said that they would trust AI to carry out the role of their doctor. The numbers were notably higher in countries where healthcare is more expensive and harder to access.

This was less surprising to the researchers, who believe that people living in parts of the world where health care services are not readily available might rely on technology for quick answers.

However, they were cautious about the underlying algorithms, which are designed to retain the user’s attention and keep them in a relaxed chat. This might be more harmful in the case of mental health advice, where the traditional approach would be to direct the user to specific services such as The Samaritans.

AI as a companion

The highest level of trust participants were willing to place in AI came in the role of friendship. Over three quarters of people globally and over half of people in the UK said they would talk to ChatGPT as a companion.

The researchers think this is explained by a perceived sense of empathy from generative language tools, because they are designed to adapt the tone of their responses to suit the user’s.

“AI tools come across as a friend who knows you well and understands you,” Dr Yankouskaya explained.

“ChatGPT can remember every chat it has had with a user and it feels like a private conversation between them. Nowadays people can be very sensitive to being judged and AI tools are designed to be non-judgemental. This means they can provide the sense of security people need,” she continued.

Dr Yankouskaya and the team concluded that as AI playing a bigger role in people’s lives moves from a theoretical prospect to reality, there needs to be more awareness within societies about how generative AI tools work and what their limitations are.

The lack of knowledge about the long-term effects on someone’s memory means caution needs to be applied before AI tools take over roles, in education in particular.

Key Questions Answered:

A: It often comes down to access and judgment. If you’re struggling with depression, waiting months for an appointment isn’t an option. AI provides an immediate “listening ear.” Furthermore, people are often sensitive about being judged; AI is designed to be non-judgmental and “remembers” every past conversation, making it feel like a supportive, private friend.

A: Not quite. While it can offer general support, researchers found that the language used by AI is often vague and confusing because developers are careful not to provide clinical diagnoses. It can’t replace the nuance and safety of a human professional, especially in crisis situations where specific emergency services are needed.

A: It might be. Researchers are worried about “cognitive outsourcing.” If we rely on AI to find every answer and teach our children, we might stop using the parts of our brain responsible for deep memory and learning. Over time, this lack of “mental exercise” could lead to a smaller hippocampus and reduced cognitive flexibility.

Editorial Notes:

- This article was edited by a Neuroscience News editor.

- Journal paper reviewed in full.

- Additional context added by our staff.

About this AI and psychology research news

Author: Steve Bates

Source: Bournemouth University

Contact: Steve Bates – Bournemouth University

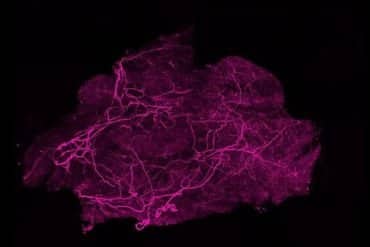

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Who lets AI take over? Cross-national variation in willingness to delegate socially important roles to artificial intelligence” by Ala Yankouskaya, Mohamed Basel Almourad, Magnus Liebherr, Fahad Beyahi, Guandong Xu & Raian Ali. AI & Society

DOI:10.1007/s00146-026-02858-5

Abstract

Who lets AI take over? Cross-national variation in willingness to delegate socially important roles to artificial intelligence

Delegating socially significant roles to artificial intelligence (AI) is an emerging reality, yet little is known about how publics evaluate this transfer of responsibility across contexts and countries.

This study applied a structural model to a large cross-national dataset (30,994 individuals in 35 countries) to test how cognitive appraisals, affective dispositions, and contextual factors jointly shape willingness to delegate socially important roles of companionship, mental health advisor, doctor and teacher to children to AI.

The results revealed a robust hierarchy of delegation preferences, with companionship most frequently entrusted to AI, followed by mental-health advisor, teacher, and doctor. Cognitive appraisals emerged as the strongest predictors: trust in online information was consistently the most powerful driver across all roles, while optimism and life satisfaction made smaller but reliable contributions.

Affective dispositions played narrower, domain-specific roles, with anxiety shaping delegation in teaching and mental health, and loneliness linked only weakly to companionship. Women were less willing than men to delegate across all roles, with the gender gap largest in medicine and education, and strikingly invariant across cognitive and affective predictors.

Beyond these, national baselines diverged by nearly 30 percentage points even after adjusting for these predictors demonstrating the independent influence of country context.

Our findings show that willingness to delegate socially important roles to AI follows a robust hierarchy and reflects the combined influence of cognitive appraisals, affective dispositions, and contextual factors. A key implication is that delegation roles to AI must be understood as both a personal and a societal orientation, requiring attention to the interplay between these layers.