Summary: A new study sheds light on how the brain predicts what comes next when someone is talking.

Source: PLOS.

An international collaboration of neuroscientists has shed light on how the brain helps us to predict what is coming next in speech.

In the study, publishing on April 25 in the open access journal PLOS Biology, scientists from Newcastle University, UK, and a neurosurgery group at the University of Iowa, USA, report that they have discovered mechanisms in the brain’s auditory cortex that are involved in processing speech and predicting upcoming words, and that have remained essentially unchanged throughout evolution. Their research reveals how individual neurons coordinate with neural populations to anticipate events, a process that is impaired in many neurological and psychiatric disorders such as dyslexia, schizophrenia and Attention Deficit Hyperactivity Disorder (ADHD).

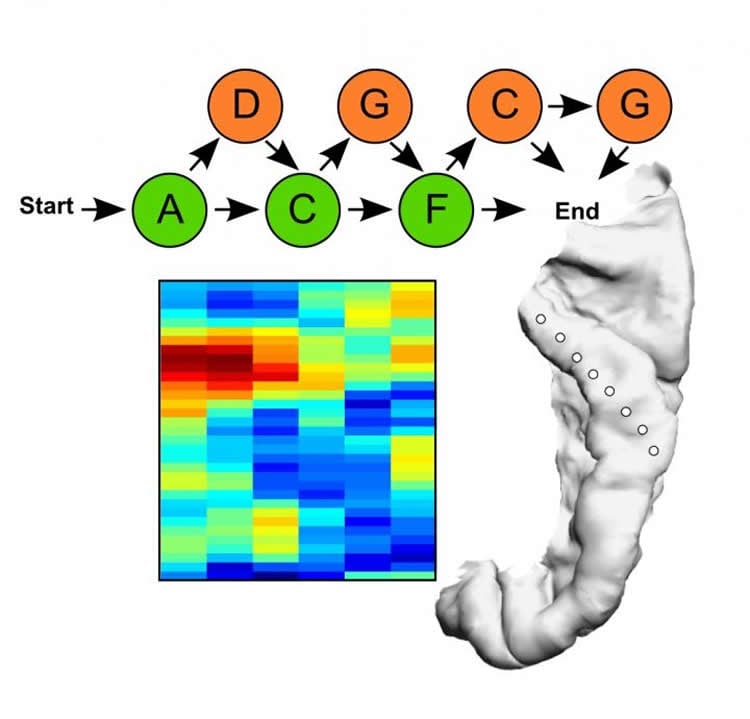

Using an approach first developed for studying infant language learning, the team of neuroscientists led by Dr Yuki Kikuchi and Prof Chris Petkov of Newcastle University had humans and monkeys listen to sequences of spoken words from a made-up language. Both species were able to learn the predictive relationships between the spoken sounds in the sequences.

Neural responses from the auditory cortex in the two species revealed how populations of neurons responded to the speech sounds and to the learned predictive relationships between the sounds. The neural responses were found to be remarkably similar in both species, suggesting that the way the human auditory cortex responds to speech harnesses evolutionarily conserved mechanisms, rather than those that have uniquely specialized in humans for speech or language.

“Being able to predict events is vital for so much of what we do every day,” Professor Petkov notes. “Now that we know humans and monkeys share the ability to predict speech, we can apply this knowledge to take forward research to improve our understanding of the human brain.”

Dr Kikuchi elaborates, “In effect we have discovered the mechanisms for speech in your brain that work like predictive text on your mobile phone, anticipating what you are going to hear next. This could help us better understand what is happening when the brain fails to make fundamental predictions, such as in people with dementia or after a stroke.”

Building on these results, the team are working on projects to harness insights on predictive signals in the brain to develop new models to study how these signals go wrong in patients with stroke or dementia. The long-term goal is to identify strategies that yield more accurate prognoses and treatments for these patients.

Funding: BBSRC http://www.bbsrc.ac.uk/ (grant number BB/J009849/1). Received by CIP and YK, joint with Quoc Vuong. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. Wellcome Trust https://wellcome.ac.uk (grant number WT091681MA). Received by TDG and PEG. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. Wellcome Trust https://wellcome.ac.uk (grant number WT092606AIA). Received by CIP (Investigator Award). The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. NeuroCreative Award. Received by YK. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. NIH Intramural contract. Received by CIP and YK. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. NIH https://www.nih.gov/ (grant number R01-DC04290). Received by MAH, AER, KVN, CKK, and HK. The funder had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Competing Interests: The authors have declared that no competing interests exist.

Source: Yuki Kikuchi – PLOS

Image Source: NeuroscienceNews.com image is credited to Dr Y. Kikuchi et al.

Original Research: Full open access research for “Sequence learning modulates neural responses and oscillatory coupling in human and monkey auditory cortex” by Yukiko Kikuchi, Adam Attaheri, Benjamin Wilson, Ariane E. Rhone, Kirill V. Nourski, Phillip E. Gander, Christopher K. Kovach, Hiroto Kawasaki, Timothy D. Griffiths, Matthew A. Howard III, and Christopher I. Petkov in PLOS Biology. Published online April 25 2017 doi:10.1371/journal.pbio.2000219

MLA: PLOS “What’s Coming Next? How the Brain Predicts Speech.” NeuroscienceNews. NeuroscienceNews, 25 April 2017. <https://neurosciencenews.com/speech-prediction-6499/>.

APA: PLOS (2017, April 25). What’s Coming Next? How the Brain Predicts Speech. NeuroscienceNews. Retrieved April 25, 2017 from https://neurosciencenews.com/speech-prediction-6499/

Chicago: PLOS “What’s Coming Next? How the Brain Predicts Speech.” https://neurosciencenews.com/speech-prediction-6499/ (accessed April 25, 2017).

Abstract

Sequence learning modulates neural responses and oscillatory coupling in human and monkey auditory cortex

Learning complex ordering relationships between sensory events in a sequence is fundamental for animal perception and human communication. While it is known that rhythmic sensory events can entrain brain oscillations at different frequencies, how learning and prior experience with sequencing relationships affect neocortical oscillations and neuronal responses is poorly understood. We used an implicit sequence learning paradigm (an “artificial grammar”) in which humans and monkeys were exposed to sequences of nonsense words with regularities in the ordering relationships between the words. We then recorded neural responses directly from the auditory cortex in both species in response to novel legal sequences or ones violating specific ordering relationships. Neural oscillations in both monkeys and humans in response to the nonsense word sequences show strikingly similar hierarchically nested low-frequency phase and high-gamma amplitude coupling, establishing this form of oscillatory coupling—previously associated with speech processing in the human auditory cortex—as an evolutionarily conserved biological process. Moreover, learned ordering relationships modulate the observed form of neural oscillatory coupling in both species, with temporally distinct neural oscillatory effects that appear to coordinate neuronal responses in the monkeys. This study identifies the conserved auditory cortical neural signatures involved in monitoring learned sequencing operations, evident as modulations of transient coupling and neuronal responses to temporally structured sensory input.
