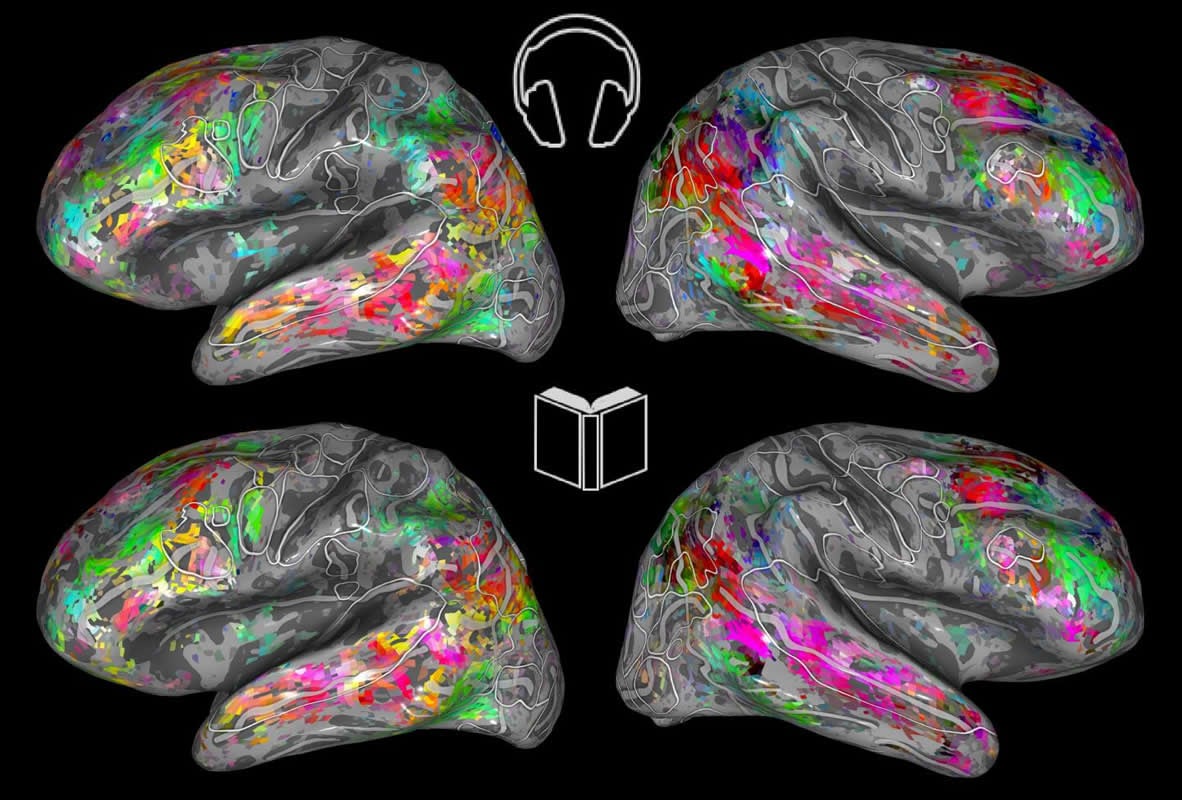

Summary: fMRI brain scans reveal that semantic tuning during reading and during listening to words is highly correlated in semantically selective areas of the cerebral cortex. The new brain maps enabled researchers to accurately predict which words would activate specific regions of the cortex.

Source: UC Berkeley

Too busy or lazy to read Melville’s Moby Dick or Tolstoy’s Anna Karenina? That’s OK. Whether you read the classics, or listen to them instead, the same cognitive and emotional parts of the brain are likely to be stimulated. And now, there’s a map to prove it.

Neuroscientists at the University of California, Berkeley, have created interactive maps that can predict where different categories of words activate the brain. Their latest map focuses on what happens in the brain when you read stories.

The findings, to appear Aug. 19 in the Journal of Neuroscience, provide further evidence that different people share similar semantic — or word-meaning — topography, opening yet another door to our inner thoughts and narratives. They also have practical implications for learning and for speech disorders, from dyslexia to aphasia.

“At a time when more people are absorbing information via audiobooks, podcasts and even audio texts, our study shows that, whether they’re listening to or reading the same materials, they are processing semantic information similarly,” said study lead author Fatma Deniz, a postdoctoral researcher in neuroscience in the Gallant Lab at UC Berkeley and former data science fellow with the Berkeley Institute for Data Science.

For the study, people listened to stories from “The Moth Radio Hour,” a popular podcast series, and then read those same stories. Using functional MRI, researchers scanned their brains in both the listening and reading conditions, compared their listening-versus-reading brain activity data, and found the maps they created from both datasets were virtually identical.

The results can be viewed in an interactive, 3D, color-coded map, where words — grouped in such categories as visual, tactile, numeric, locational, violent, mental, emotional and social — are presented like vibrant butterflies on flattened cortices. The cortex is the coiled surface layer of gray matter of the cerebrum that coordinates sensory and motor information.

The interactive 3D brain viewer is scheduled to go online this month.

As for clinical applications, the maps could be used to compare language processing in healthy people and in those with stroke, epilepsy and brain injuries that impair speech. Understanding such differences can aid recovery efforts, Deniz said.

The semantic maps can also inform interventions for dyslexia, a widespread, neurodevelopmental language-processing disorder that impairs reading.

“If, in the future, we find that the dyslexic brain has rich semantic language representation when listening to an audiobook or other recording, that could bring more audio materials into the classroom,” Deniz said.

And the same goes for auditory processing disorders, in which people cannot distinguish the sounds or “phonemes” that make up words. “It would be very helpful to be able to compare the listening and reading semantic maps for people with auditory processing disorder,” she said.

Nine volunteers each spent a couple of hours inside functional MRI scanners, listening to and then reading stories from “The Moth Radio Hour” as researchers measured their cerebral blood flow.

In both conditions, the brain activity data were then matched against time-coded transcriptions of the stories, and the results were fed into a computer program that scores words according to their relationships to one another.
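
The matching step can be pictured roughly as follows. This is a minimal sketch, not the study's pipeline: it assumes each transcribed word already has a numeric feature vector (the toy 2-D vectors and the 2-second volume time `tr` are hypothetical), sums the vectors of all words spoken or shown during each scan volume, and then appends delayed copies so a linear model can allow for the lag of the blood-flow response. The article does not describe the actual word representation, resampling method or delays.

```python
from typing import Dict, List, Tuple

def bin_words_by_volume(
    words: List[Tuple[str, float]],
    vectors: Dict[str, List[float]],
    tr: float,
    n_volumes: int,
) -> List[List[float]]:
    """Sum the feature vectors of all words whose onset falls within each volume.

    words:   (word, onset in seconds) pairs from a time-coded transcript
    vectors: hypothetical lookup from word to a numeric feature vector
    tr:      seconds per fMRI volume (an assumed acquisition parameter)
    """
    dim = len(next(iter(vectors.values())))
    binned = [[0.0] * dim for _ in range(n_volumes)]
    for word, onset in words:
        idx = int(onset // tr)
        # Skip words without a vector and onsets outside the scan.
        if word in vectors and 0 <= idx < n_volumes:
            for j, value in enumerate(vectors[word]):
                binned[idx][j] += value
    return binned

def add_delays(features: List[List[float]], delays: List[int]) -> List[List[float]]:
    """Concatenate time-shifted copies of the features.

    With delay d, volume t also receives the features of volume t - d (zeros before
    the scan starts), letting a linear model account for the slow blood-flow response.
    """
    dim = len(features[0])
    delayed = []
    for t in range(len(features)):
        row: List[float] = []
        for d in delays:
            row.extend(features[t - d] if t - d >= 0 else [0.0] * dim)
        delayed.append(row)
    return delayed

vectors = {"bear": [1.0, 0.0], "cat": [0.9, 0.1], "ran": [0.0, 1.0]}  # toy 2-D vectors
transcript = [("bear", 0.4), ("ran", 1.1), ("cat", 2.5)]
X = bin_words_by_volume(transcript, vectors, tr=2.0, n_volumes=3)
print(X)  # [[1.0, 1.0], [0.9, 0.1], [0.0, 0.0]]
print(add_delays(X, delays=[1, 2]))
```

Summing within each volume is the simplest possible choice; smoother resampling filters are also common in practice.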

Using statistical modeling, researchers arranged thousands of words on maps according to their semantic relationships. Under the animals category, for example, one can find the words “bear,” “cat” and “fish.”
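
One common way to express a "semantic relationship" between words is the cosine similarity of their feature vectors, so that related words such as "bear" and "cat" sit close together. The sketch below assumes toy three-dimensional vectors whose axes (roughly animal, number, emotion) are invented for illustration; the study's own word representation and mapping method are not specified in this article.

```python
import math
from typing import Dict, List

def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity: 1.0 for vectors pointing the same way, 0.0 for orthogonal ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def nearest(word: str, vectors: Dict[str, List[float]], k: int = 2) -> List[str]:
    """Return the k words most similar to `word`, most similar first."""
    target = vectors[word]
    scored = [(other, cosine(target, vec)) for other, vec in vectors.items() if other != word]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [other for other, _ in scored[:k]]

# Toy vectors; the axes loosely mean (animal, number, emotion) and are invented.
vectors = {
    "bear": [0.9, 0.0, 0.2],
    "cat": [0.8, 0.1, 0.3],
    "fish": [0.85, 0.05, 0.0],
    "seven": [0.0, 0.95, 0.0],
    "angry": [0.1, 0.0, 0.9],
}
print(nearest("bear", vectors))  # ['cat', 'fish']
```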

The maps, which covered at least one-third of the cerebral cortex, enabled the researchers to accurately predict which words would activate which parts of the brain.
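
Once a linear model of each brain location has been fit, predicting a word's effect reduces to a weighted sum of the word's features. A minimal sketch, assuming a linear encoding model: the word vectors, the feature meanings and the region names `region_A` to `region_C` are hypothetical placeholders (the study models individual voxels, not named regions), and none of the numbers come from the study.

```python
from typing import Dict, List

def predicted_response(word_vec: List[float], weights: List[float]) -> float:
    """Linear encoding model: predicted activity is a weighted sum of the word's features."""
    return sum(f * w for f, w in zip(word_vec, weights))

def most_responsive_region(word_vec: List[float], region_weights: Dict[str, List[float]]) -> str:
    """Name of the region whose model predicts the largest response to this word."""
    return max(region_weights, key=lambda name: predicted_response(word_vec, region_weights[name]))

# Hypothetical fitted weights; feature axes loosely mean (animal, number, social).
region_weights = {
    "region_A": [1.0, 0.0, 0.2],
    "region_B": [0.0, 1.0, 0.0],
    "region_C": [0.1, 0.0, 1.0],
}
words = {"bear": [0.9, 0.0, 0.1], "seven": [0.0, 0.9, 0.0], "friend": [0.1, 0.0, 0.9]}
for word, vec in words.items():
    print(word, "->", most_responsive_region(vec, region_weights))
# bear -> region_A
# seven -> region_B
# friend -> region_C
```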

The results of the reading experiment came as a surprise to Deniz, who had anticipated some differences in the way readers and listeners would process semantic information.

“We knew that a few brain regions were activated similarly when you hear a word and read the same word, but I was not expecting such strong similarities in the meaning representation across a large network of brain regions in both these sensory modalities,” Deniz said.

Her study is a follow-up to a 2016 Gallant Lab study that recorded the brain activity of seven study subjects as they listened to stories from “The Moth Radio Hour.”

Future mapping of semantic information will include experiments with people who speak languages other than English, as well as with people who have language-based learning disorders, Deniz said.

Co-authors of the study are Anwar Nunez-Elizalde, Alexander Huth and Jack Gallant, all from UC Berkeley.

Media Contacts:

Yasmin Anwar – UC Berkeley

Image Source:

The image is credited to Fatma Deniz.

Original Research: Closed access

“The representation of semantic information across human cerebral cortex during listening versus reading is invariant to stimulus modality”. Fatma Deniz, Anwar O. Nunez-Elizalde, Alexander G. Huth and Jack L. Gallant.

Journal of Neuroscience. doi:10.1523/JNEUROSCI.0675-19.2019

Abstract

The representation of semantic information across human cerebral cortex during listening versus reading is invariant to stimulus modality

An integral part of human language is the capacity to extract meaning from spoken and written words, but the precise relationship between brain representations of information perceived by listening versus reading is unclear. Prior neuroimaging studies have shown that semantic information in spoken language is represented in multiple regions in the human cerebral cortex, while amodal semantic information appears to be represented in a few broad brain regions. However, previous studies were too insensitive to determine whether semantic representations were shared at a fine level of detail rather than merely at a coarse scale. We used fMRI to record brain activity in two separate experiments while participants listened to or read several hours of the same narrative stories, and then created voxelwise encoding models to characterize semantic selectivity in each voxel and in each individual participant. We find that semantic tuning during listening and reading are highly correlated in most semantically-selective regions of cortex, and models estimated using one modality accurately predict voxel responses in the other modality. These results suggest that the representation of language semantics is independent of the sensory modality through which the semantic information is received.

SIGNIFICANCE STATEMENT

Humans can comprehend the meaning of words from both spoken and written language. It is therefore important to understand the relationship between the brain representations of spoken or written text. Here we show that although the representation of semantic information in the human brain is quite complex, the semantic representations evoked by listening versus reading are almost identical. These results suggest that the representation of language semantics is independent of the sensory modality through which the semantic information is received.
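
The abstract describes two comparisons: correlating each voxel's semantic tuning across modalities, and using a model estimated in one modality to predict responses in the other. Both can be illustrated with a small simulation. This is a sketch, not the authors' pipeline: the features are random Gaussian stand-ins rather than semantic features, the two voxels' weights are invented, ridge regression with a fixed `alpha` is an assumed choice of regularized linear model, and the simulation builds shared tuning in by construction, so it demonstrates the analysis rather than reproducing the finding.

```python
import math
import random
from typing import List

Matrix = List[List[float]]

def solve(a: Matrix, b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def ridge_fit(X: Matrix, y: List[float], alpha: float) -> List[float]:
    """Closed-form ridge regression: w = (X^T X + alpha * I)^-1 X^T y."""
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) + (alpha if i == j else 0.0)
            for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * t for row, t in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)

def predict(X: Matrix, w: List[float]) -> List[float]:
    return [sum(x * wi for x, wi in zip(row, w)) for row in X]

def pearson(a: List[float], b: List[float]) -> float:
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def simulate(rng: random.Random, true_w: List[float], n: int, noise: float):
    """Hypothetical data: features X and one voxel's response y = X @ true_w + noise."""
    X = [[rng.gauss(0.0, 1.0) for _ in true_w] for _ in range(n)]
    y = [v + rng.gauss(0.0, noise) for v in predict(X, true_w)]
    return X, y

def cross_modal_report(true_weights: Matrix, n: int = 200, noise: float = 0.5,
                       alpha: float = 1.0, seed: int = 0):
    """Per simulated voxel: (tuning correlation, listening-to-reading prediction r)."""
    rng = random.Random(seed)
    results = []
    for true_w in true_weights:  # voxelwise: each voxel gets its own model
        # Simulation assumption: both modalities share the same underlying tuning.
        X_listen, y_listen = simulate(rng, true_w, n, noise)
        X_read, y_read = simulate(rng, true_w, n, noise)
        w_listen = ridge_fit(X_listen, y_listen, alpha)
        w_read = ridge_fit(X_read, y_read, alpha)
        tuning_r = pearson(w_listen, w_read)
        cross_r = pearson(predict(X_read, w_listen), y_read)
        results.append((tuning_r, cross_r))
    return results

voxels = [[1.0, -0.5, 0.0, 0.3], [0.0, 0.8, 0.6, -0.4]]  # hypothetical tuning
for tuning_r, cross_r in cross_modal_report(voxels):
    print(f"tuning r = {tuning_r:.2f}, listening->reading prediction r = {cross_r:.2f}")
```

In real data the feature space is far larger and `alpha` would normally be chosen per voxel by cross-validation rather than fixed.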