Summary: Researchers developed a drone that flies autonomously using neuromorphic image processing, mimicking animal brains. This method significantly improves data processing speed and energy efficiency compared to traditional GPUs.

The study highlights the potential for tiny, agile drones for various applications. The neuromorphic approach allows the drone to process data up to 64 times faster while consuming three times less energy.

Key Facts:

- Efficient Processing: Neuromorphic AI processes data up to 64 times faster than GPUs.

- Energy Saving: The system consumes three times less energy than traditional methods.

- Real-World Applications: Potential uses include crop monitoring and warehouse management.

Source: Delft University of Technology

A team of researchers at Delft University of Technology has developed a drone that flies autonomously using neuromorphic image processing and control based on the workings of animal brains.

Animal brains use less data and energy than current deep neural networks running on GPUs (graphics chips). Neuromorphic processors are therefore well suited to small drones because they do not need large, heavy hardware and batteries.

The results are extraordinary: during flight the drone’s deep neural network processes data up to 64 times faster and consumes three times less energy than when running on a GPU. Further developments of this technology may enable the leap for drones to become as small, agile, and smart as flying insects or birds.

The findings were recently published in Science Robotics.

Learning from animal brains: spiking neural networks

Artificial intelligence holds great potential to provide autonomous robots with the intelligence needed for real-world applications. However, current AI relies on deep neural networks that require substantial computing power.

The processors made for running deep neural networks (Graphics Processing Units, GPUs) consume a substantial amount of energy. This is a particular problem for small robots such as flying drones, which can carry only very limited sensing and computing resources.

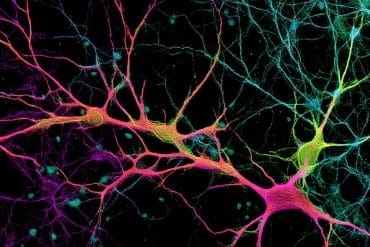

Animal brains process information in a way that is very different from the neural networks running on GPUs.

Biological neurons process information asynchronously, and mostly communicate via electrical pulses called spikes. Since sending such spikes costs energy, the brain minimizes spiking, leading to sparse processing.

Inspired by these properties of animal brains, scientists and tech companies are developing new, neuromorphic processors.

These new processors make it possible to run spiking neural networks and promise to be much faster and more energy efficient.

“The calculations performed by spiking neural networks are much simpler than those in standard deep neural networks,” says Jesse Hagenaars, PhD candidate and one of the authors of the article. “Whereas digital spiking neurons only need to add integers, standard neurons have to multiply and add floating point numbers.

“This makes spiking neural networks quicker and more energy efficient. To understand why, think of how humans also find it much easier to calculate 5 + 8 than to calculate 6.25 x 3.45 + 4.05 x 3.45.”
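The contrast Hagenaars describes can be illustrated with a minimal sketch. It is not the network from the study: the function names, weights, and threshold below are hypothetical, and the spiking neuron is a simplified integrate-and-fire model without leak. The example values reuse the numbers from the quote.

```python
def spiking_neuron_step(potential, input_spikes, weights, threshold):
    """One update of a simplified digital integrate-and-fire neuron.

    Inputs are binary spikes (0 or 1), so the update only adds the integer
    weights of the inputs that spiked. No multiplication is needed.
    Returns (new_potential, output_spike).
    """
    for spike, weight in zip(input_spikes, weights):
        if spike:
            potential += weight  # integer addition only
    if potential >= threshold:
        return 0, 1  # reset the potential and emit a spike
    return potential, 0


def standard_neuron(inputs, weights, bias):
    """A conventional neuron: floating-point multiply-accumulate, then ReLU."""
    total = bias
    for x, w in zip(inputs, weights):
        total += x * w  # floating-point multiply and add
    return max(0.0, total)


# Hypothetical values: inputs 0 and 2 spike, so the neuron computes 5 + 8.
potential, spike = spiking_neuron_step(0, [1, 0, 1], [5, 3, 8], threshold=10)

# The standard neuron computes 6.25 x 3.45 + 4.05 x 3.45.
activation = standard_neuron([6.25, 4.05], [3.45, 3.45], bias=0.0)
```

In this sketch the spiking neuron also skips all work for inputs that did not spike, which is where the sparsity of spiking networks saves computation.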

This energy efficiency is further boosted if neuromorphic processors are used in combination with neuromorphic sensors, like neuromorphic cameras. Such cameras do not make images at a fixed time interval. Instead, each pixel only sends a signal when it becomes brighter or darker.

The advantages of such cameras are that they can perceive motion much more quickly, are more energy efficient, and function well both in dark and bright environments.
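How such a camera differs from a frame-based one can be sketched as follows. This is a simplified emulation under stated assumptions: a real neuromorphic camera reports changes per pixel asynchronously rather than by comparing two full frames, and the function name, threshold, and pixel values here are hypothetical.

```python
import math


def brightness_events(prev_frame, new_frame, threshold=0.2):
    """Emulate event generation from two brightness frames.

    A pixel produces an event only if its log-brightness changed by at least
    `threshold`. Each event is (row, col, polarity), with polarity +1 for
    brighter and -1 for darker. Brightness values must be positive.
    """
    events = []
    for r, (prev_row, new_row) in enumerate(zip(prev_frame, new_frame)):
        for c, (old, new) in enumerate(zip(prev_row, new_row)):
            change = math.log(new) - math.log(old)
            if change >= threshold:
                events.append((r, c, +1))
            elif change <= -threshold:
                events.append((r, c, -1))
    return events


# Hypothetical 2x3 frames: one pixel brightens, one darkens, four stay the same.
prev = [[100, 100, 100],
        [100, 100, 100]]
new = [[100, 150, 100],
       [60, 100, 100]]

events = brightness_events(prev, new)
```

Only two of the six pixels produce a signal here. A static scene produces none, which is why the output of such a sensor is sparse.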

Moreover, the signals from neuromorphic cameras can feed directly into spiking neural networks running on neuromorphic processors. Together, they can form a huge enabler for autonomous robots, especially small, agile robots like flying drones.

First neuromorphic vision and control of a flying drone

In an article published in Science Robotics on May 15, 2024, researchers from Delft University of Technology, the Netherlands, demonstrate for the first time a drone that uses neuromorphic vision and control for autonomous flight.

Specifically, they developed a spiking neural network that processes the signals from a neuromorphic camera and outputs control commands that determine the drone’s pose and thrust.

They deployed this network on a neuromorphic processor, Intel’s Loihi neuromorphic research chip, on board a drone. Thanks to the network, the drone can perceive and control its own motion in all directions.

“We faced many challenges,” says Federico Paredes-Vallés, one of the researchers who worked on the study, “but the hardest one was to imagine how we could train a spiking neural network so that training would be sufficiently fast and the trained network would function well on the real robot. In the end, we designed a network consisting of two modules.

“The first module learns to visually perceive motion from the signals of a moving neuromorphic camera. It does so completely by itself, in a self-supervised way, based only on the data from the camera. This is similar to how animals also learn to perceive the world by themselves.

“The second module learns to map the estimated motion to control commands, in a simulator. This learning relied on an artificial evolution in simulation, in which networks that were better at controlling the drone had a higher chance of producing offspring.

“Over the generations of the artificial evolution, the spiking neural networks got increasingly good at control, and were finally able to fly in any direction at different speeds.

“We trained both modules and developed a way to merge them. We were happy to see that the merged network immediately worked well on the real robot.”
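The idea of artificial evolution described above can be illustrated with a generic sketch. It is not the algorithm used in the study, whose details the article does not give: the selection scheme (keep the better half), the Gaussian mutation, the toy fitness function, and all names and parameter values are assumptions chosen for brevity. In the study, fitness would come from how well a network controls the simulated drone.

```python
import random


def evolve(fitness, genome_length, population_size=20, generations=30,
           mutation_scale=0.1, seed=0):
    """Generic evolutionary search over lists of floats (a hedged sketch).

    Each generation, the better half of the population survives and produces
    mutated offspring, so fitter genomes are more likely to reproduce.
    Returns the best genome and the best fitness seen in each generation.
    """
    rng = random.Random(seed)
    population = [[rng.uniform(-1, 1) for _ in range(genome_length)]
                  for _ in range(population_size)]
    best_per_generation = []
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        best_per_generation.append(fitness(ranked[0]))
        parents = ranked[: population_size // 2]  # survivors are kept as-is
        children = [
            [gene + rng.gauss(0, mutation_scale) for gene in rng.choice(parents)]
            for _ in range(population_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness), best_per_generation


# Toy stand-in for "how well does this controller fly": closeness to a
# hypothetical target parameter vector.
TARGET = [0.5, -0.2, 0.8]


def toy_fitness(genome):
    return -sum((g - t) ** 2 for g, t in zip(genome, TARGET))


best, history = evolve(toy_fitness, genome_length=3)
```

Because the surviving parents are carried over unchanged, the best fitness in `history` never decreases from one generation to the next.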

With its neuromorphic vision and control, the drone is able to fly at different speeds under varying light conditions, from dark to bright. It can even fly with flickering lights, which make the pixels in the neuromorphic camera send great numbers of signals to the network that are unrelated to motion.

Improved energy efficiency and speed by neuromorphic AI

“Importantly, our measurements confirm the potential of neuromorphic AI. The network runs on average between 274 and 1600 times per second. If we run the same network on a small, embedded GPU, it runs on average only 25 times per second, a difference of a factor ~10-64!

“Moreover, when running the network, Intel’s Loihi neuromorphic research chip consumes 1.007 watts, of which 1 watt is the idle power that the processor spends just when turning on the chip. Running the network itself only costs 7 milliwatts. In comparison, when running the same network, the embedded GPU consumes 3 watts, of which 1 watt is idle power and 2 watts are spent for running the network.

“The neuromorphic approach results in AI that runs faster and more efficiently, allowing deployment on much smaller autonomous robots,” says Stein Stroobants, PhD candidate in the field of neuromorphic drones.
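The ratios quoted above follow directly from the reported figures, as this short calculation shows. The variable names are illustrative. The dynamic power ratio and the energy per network run are derived here from the quoted numbers and are not stated in the article.

```python
# Reported figures (from the quotes above)
loihi_rate_hz = (274, 1600)  # network runs per second on Loihi (min, max average)
gpu_rate_hz = 25             # network runs per second on the embedded GPU

loihi_total_w, loihi_idle_w = 1.007, 1.0
gpu_total_w, gpu_idle_w = 3.0, 1.0

# Speed: roughly 11x to 64x faster
speedups = tuple(rate / gpu_rate_hz for rate in loihi_rate_hz)

# Total power: roughly 3x less on Loihi
total_power_ratio = gpu_total_w / loihi_total_w

# Derived: power spent on the network alone (total minus idle)
dynamic_power_ratio = (gpu_total_w - gpu_idle_w) / (loihi_total_w - loihi_idle_w)

# Derived: energy per network run in joules (total power / runs per second)
gpu_energy_per_run_j = gpu_total_w / gpu_rate_hz
loihi_energy_per_run_j = tuple(loihi_total_w / rate for rate in loihi_rate_hz)

print(f"speedup: {speedups[0]:.1f}x to {speedups[1]:.1f}x")
print(f"total power ratio: {total_power_ratio:.2f}x")
print(f"dynamic power ratio: {dynamic_power_ratio:.0f}x")
```

The "three times less" figure refers to total power, which on Loihi is dominated by idle power. Counting only the power spent on running the network, 7 milliwatts against 2 watts, the gap is far larger.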

Future applications of neuromorphic AI for tiny robots

“Neuromorphic AI will enable all autonomous robots to be more intelligent,” says Guido de Croon, Professor in bio-inspired drones, “but it is an absolute enabler for tiny autonomous robots.

“At Delft University of Technology’s Faculty of Aerospace Engineering, we work on tiny autonomous drones which can be used for applications ranging from monitoring crop in greenhouses to keeping track of stock in warehouses.

“The advantages of tiny drones are that they are very safe and can navigate in narrow environments, such as between rows of tomato plants. Moreover, they can be very cheap, so that they can be deployed in swarms.

“This is useful for more quickly covering an area, as we have shown in exploration and gas source localization settings.

“The current work is a great step in this direction. However, the realization of these applications will depend on further scaling down the neuromorphic hardware and expanding the capabilities towards more complex tasks such as navigation.”

About this AI and robotics research news

Author: Marc Kool

Contact: Marc Kool – Delft University of Technology

Image: The image is credited to Neuroscience News

Original Research: Closed access.

“Fully neuromorphic vision and control for autonomous drone flight” by Guido de Croon et al. Science Robotics

Abstract

Fully neuromorphic vision and control for autonomous drone flight

Biological sensing and processing is asynchronous and sparse, leading to low-latency and energy-efficient perception and action.

In robotics, neuromorphic hardware for event-based vision and spiking neural networks promises to exhibit similar characteristics.

However, robotic implementations have been limited to basic tasks with low-dimensional sensory inputs and motor actions because of the restricted network size in current embedded neuromorphic processors and the difficulties of training spiking neural networks.

Here, we present a fully neuromorphic vision-to-control pipeline for controlling a flying drone. Specifically, we trained a spiking neural network that accepts raw event-based camera data and outputs low-level control actions for performing autonomous vision-based flight.

The vision part of the network, consisting of five layers and 28,800 neurons, maps incoming raw events to ego-motion estimates and was trained with self-supervised learning on real event data.

The control part consists of a single decoding layer and was learned with an evolutionary algorithm in a drone simulator. Robotic experiments show a successful sim-to-real transfer of the fully learned neuromorphic pipeline.

The drone could accurately control its ego-motion, allowing for hovering, landing, and maneuvering sideways—even while yawing at the same time.

The neuromorphic pipeline runs on board on Intel’s Loihi neuromorphic processor with an execution frequency of 200 hertz, consuming 0.94 watt of idle power and a mere additional 7 to 12 milliwatts when running the network.

These results illustrate the potential of neuromorphic sensing and processing for enabling insect-sized intelligent robots.