Summary: Researchers report our visual attentoin pays most attention to parts of a scene that have meaning to us, not the parts that stick out.

Source: UC Davis.

Our visual attention is drawn to parts of a scene that have meaning, rather than to those that are salient or “stick out,” according to new research from the Center for Mind and Brain at the University of California, Davis. The findings, published Sept. 25 in the journal Nature Human Behavior, overturn the widely-held model of visual attention.

“A lot of people will have to rethink things,” said Professor John Henderson, who led the research. “The saliency hypothesis really is the dominant view.”

Our eyes we perceive a wide field of view in front of us, but we only focus our attention on a small part of this field. How do we decide where to direct our attention, without thinking about it?

The dominant theory in attention studies is “visual salience,” Henderson said. Salience means things that “stick out” from the background, like colorful berries on a background of leaves or a brightly lit object in a room.

Saliency is relatively easy to measure. You can map the amount of saliency in different areas of a picture by measuring relative contrast or brightness, for example.

Henderson called this the “magpie theory” our attention is drawn to bright and shiny objects.

“It becomes obvious, though, that it can’t be right,” he said, otherwise we would constantly be distracted.

Making a Map of Meaning

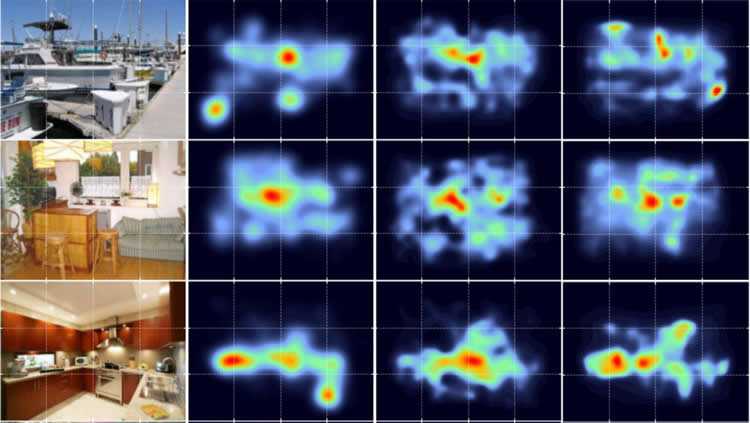

Henderson and postdoctoral researcher Taylor Hayes set out to test whether attention is guided instead by how “meaningful” we find an area within our view. They first had to construct “meaning maps” of test scenes, where different parts of the scene had different levels of meaning to an observer.

To make their meaning map, Henderson and Hayes took images of scenes, broke them up into overlapping circular tiles, and submitted the individual tiles to the online crowdsourcing service Mechanical Turk, asking users to rate the tiles for meaning.

By tallying the votes of Mechanical Turk users they were able to assign levels of meaning to different areas of an image and create a meaning map comparable to a saliency map of the same scene.

Next, they tracked the eye movements of volunteers as they looked at the scene. Those eyetracks gave them a map of what parts of the image attracted the most attention. This “attention map” was closer to the meaning map than the salience map, Henderson said.

In Search of Meaning

Henderson and Hayes don’t yet have firm data on what makes part of a scene meaningful, although they have some ideas. For example, a cluttered table or shelf attracted more attention than a highly salient splash of sunlight on a wall. With further work, they hope to develop a “taxonomy of meaning,” Henderson said.

Although the research is aimed at a fundamental understanding of how visual attention works, there could be some near-term applications, Henderson said, for example in developing automated visual systems that allow computers to scan security footage or to automatically identify or caption images online.

Funding: The work was supported by the National Science Foundation.

Source: Andy Fell – UC Davis

Image Source: NeuroscienceNews.com image is credited to John Henderson and Taylor Hayes, UC Davis.

Original Research: Abstract for “Meaning-based guidance of attention in scenes as revealed by meaning maps” by John M. Henderson & Taylor R. Hayes in Nature Human Nature. Published online September 25 2017 doi:10.1038/s41562-017-0208-0

[cbtabs][cbtab title=”MLA”]UC Davis “Visual Brain Drawn to Meaning, Not What Stands Out.” NeuroscienceNews. NeuroscienceNews, 25 September 2017.

<https://neurosciencenews.com/meaning-visual-brain-7580/>.[/cbtab][cbtab title=”APA”]UC Davis (2017, September 25). Visual Brain Drawn to Meaning, Not What Stands Out. NeuroscienceNews. Retrieved September 25, 2017 from https://neurosciencenews.com/meaning-visual-brain-7580/[/cbtab][cbtab title=”Chicago”]UC Davis “Visual Brain Drawn to Meaning, Not What Stands Out.” https://neurosciencenews.com/meaning-visual-brain-7580/ (accessed September 25, 2017).[/cbtab][/cbtabs]

Abstract

Meaning-based guidance of attention in scenes as revealed by meaning maps

Real-world scenes comprise a blooming, buzzing confusion of information. To manage this complexity, visual attention is guided to important scene regions in real time. What factors guide attention within scenes? A leading theoretical position suggests that visual salience based on semantically uninterpreted image features plays the critical causal role in attentional guidance, with knowledge and meaning playing a secondary or modulatory role. Here we propose instead that meaning plays the dominant role in guiding human attention through scenes. To test this proposal, we developed ‘meaning maps’ that represent the semantic richness of scene regions in a format that can be directly compared to image salience. We then contrasted the degree to which the spatial distributions of meaning and salience predict viewers’ overt attention within scenes. The results showed that both meaning and salience predicted the distribution of attention, but that when the relationship between meaning and salience was controlled, only meaning accounted for unique variance in attention. This pattern of results was apparent from the very earliest time-point in scene viewing. We conclude that meaning is the driving force guiding attention through real-world scenes.

“Meaning-based guidance of attention in scenes as revealed by meaning maps” by John M. Henderson & Taylor R. Hayes in Nature Human Nature. Published online September 25 2017 doi:10.1038/s41562-017-0208-0