Summary: A new machine learning algorithm can accurately determine whether a person heard a real or made-up word based on neuroimaging data.

Source: SfN

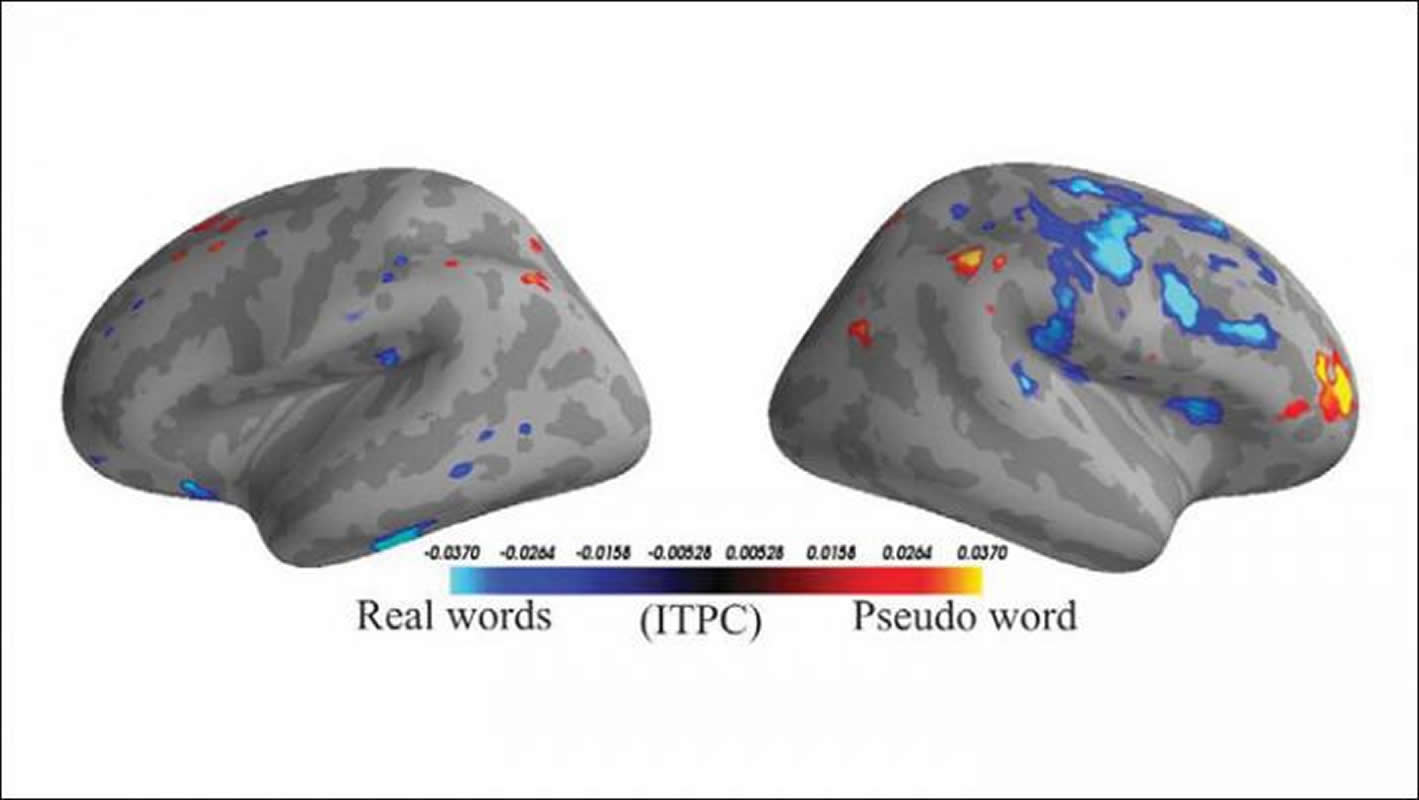

Pairing machine learning with neuroimaging makes it possible to determine whether a person heard a real or made-up word based on their brain activity, according to a new study published in eNeuro. These results lay the groundwork for investigating language processing in the brain and developing an imaging-based tool to assess language impairments.

Many brain injuries and disorders cause language impairments that are difficult to establish with standard language tasks when the patient is unresponsive or uncooperative, creating a need for a task-free diagnosis method. Using magnetoencephalography (MEG), Mads Jensen, Rasha Hyder, and Yury Shtyrov from Aarhus University examined the brain activity of participants while they listened to audio recordings of both similar-sounding real words with different meanings and made-up “pseudowords.” The participants were instructed to ignore the words and focus on a silent film.

Using machine learning algorithms, the team was able to determine from brain activity alone whether a participant was hearing a real or made-up word, a grammatically correct or incorrect word, and what the word meant. They also identified specific brain regions and frequencies responsible for processing different types of language.

Media Contacts:

Calli McMurray – SfN

Image Source:

The image is credited to Jensen et al., eNeuro 2019.

Original Research: Closed access

“MVPA analysis of intertrial phase coherence of neuromagnetic responses to words reliably classifies multiple levels of language processing in the brain”. Mads Jensen, Rasha Hyder and Yury Shtyrov.

eNeuro. doi:10.1523/ENEURO.0444-18.2019

Abstract

Neural processing of language is still among the most poorly understood functions of the human brain, whereas a need to objectively assess the neurocognitive status of the language function in a participant-friendly and noninvasive fashion arises in various situations. Here, we propose a solution for this based on a short task-free recording of MEG responses to a set of spoken linguistic contrasts. We used spoken stimuli that diverged lexically (words/pseudowords), semantically (action-related/abstract) or morphosyntactically (grammatically correct/ungrammatical). Based on beamformer source reconstruction we investigated inter-trial phase coherence (ITPC) in five canonical bands (alpha, beta, and low, medium and high gamma) using multivariate pattern analysis (MVPA). Using this approach, we could successfully classify brain responses to meaningful words from meaningless pseudowords, correct from incorrect syntax, as well as semantic differences. The best classification results indicated distributed patterns of activity dominated by core temporofrontal language circuits and complemented by other areas. They varied between the different neurolinguistic properties across frequency bands, with lexical processes classified predominantly by broad gamma, semantic distinctions – by alpha and beta, and syntax – by low gamma feature patterns. Crucially, all types of processing commenced in a near-parallel fashion from ∼100 ms after the auditory information allowed for disambiguating the spoken input. This shows that individual neurolinguistic processes take place simultaneously and involve overlapping yet distinct neuronal networks that operate at different frequency bands. This brings further hope that brain imaging can be used to assess neurolinguistic processes objectively and noninvasively in a range of populations.
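For readers unfamiliar with the measure, inter-trial phase coherence is conventionally defined as the length of the mean unit phase vector across trials: it is close to 1 when the phase at a given time and frequency repeats from trial to trial, and close to 0 when it is random. The sketch below illustrates that standard definition on synthetic phase angles. It is not the authors' pipeline; the function name `itpc`, the array shapes, and the simulated data are assumptions made for illustration only.

```python
import numpy as np

def itpc(phases):
    """Inter-trial phase coherence per time point.

    phases: array of shape (n_trials, n_times) holding phase angles in radians
            for one source and one frequency band (hypothetical layout).
    Returns an array of shape (n_times,) with values between 0 and 1.
    """
    # Map each phase to a unit vector on the complex plane, average over trials,
    # and take the length of the resulting mean vector.
    return np.abs(np.mean(np.exp(1j * phases), axis=0))

# Synthetic data (assumption): 200 trials, 50 time points.
rng = np.random.default_rng(0)
n_trials, n_times = 200, 50

# Phase-locked case: every trial has nearly the same phase (small jitter).
locked = 0.3 + rng.normal(0.0, 0.1, size=(n_trials, n_times))
# Unlocked case: phase is uniformly random on each trial.
random_phase = rng.uniform(-np.pi, np.pi, size=(n_trials, n_times))

itpc_locked = itpc(locked)
itpc_random = itpc(random_phase)
print("phase-locked ITPC (mean):", itpc_locked.mean())
print("random-phase ITPC (mean):", itpc_random.mean())
```

In a real analysis the phase angles would come from a time-frequency decomposition of the source-reconstructed MEG signal rather than from a random number generator.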

Significance Statement

In an MEG study that was optimally designed to test several language features in a non-attentive paradigm, we found that, by analysing cortical source-level inter-trial phase coherence (ITPC) in five canonical bands (alpha, beta, and low, medium and high gamma) with machine-learning classification tools (multivariate pattern analysis, MVPA), we could successfully classify meaningful words from meaningless pseudowords, correct from incorrect syntax, and semantic differences between words, based on passive brain responses recorded in a task-free fashion. The results show different time courses for the different processes that involve different frequency bands. It is, to our knowledge, the first study to simultaneously map and objectively classify multiple neurolinguistic processes in a comparable manner across language features and frequency bands.
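The general idea behind MVPA-style classification is to train a classifier on multivariate feature patterns from some trials and test whether it predicts the condition label of held-out trials better than chance. The following is a minimal sketch of that idea only, assuming a simple nearest-centroid classifier with 5-fold cross-validation on synthetic feature vectors. The study does not specify its classifier here, so the classifier, the function name `cross_validated_accuracy`, the feature counts, and the simulated effect size are all hypothetical.

```python
import numpy as np

def cross_validated_accuracy(X, y, n_folds=5, seed=0):
    """Nearest-centroid decoding accuracy with k-fold cross-validation.

    X: (n_samples, n_features) feature patterns (e.g. ITPC values; assumption).
    y: (n_samples,) condition labels coded as 0 or 1.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(y))
    folds = np.array_split(order, n_folds)
    correct = 0
    for test_idx in folds:
        train_idx = np.setdiff1d(order, test_idx)
        X_train, y_train = X[train_idx], y[train_idx]
        # Class centroids are estimated from the training samples only.
        centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in (0, 1)])
        # Each held-out sample is assigned to the nearest centroid.
        dists = np.linalg.norm(X[test_idx][:, None, :] - centroids[None, :, :], axis=2)
        correct += np.sum(dists.argmin(axis=1) == y[test_idx])
    return correct / len(y)

# Synthetic data (assumption): 40 samples per condition, 30 features,
# with a condition difference present in the first 5 features only.
rng = np.random.default_rng(1)
n_per_class, n_features = 40, 30
y = np.repeat([0, 1], n_per_class)
X = rng.normal(0.0, 1.0, size=(2 * n_per_class, n_features))
X[y == 1, :5] += 1.5

acc_signal = cross_validated_accuracy(X, y)
# Shuffling the labels breaks the link between patterns and conditions,
# giving a reference point for chance-level performance.
acc_shuffled = cross_validated_accuracy(X, rng.permutation(y))
print("accuracy with true labels:", acc_signal)
print("accuracy with shuffled labels:", acc_shuffled)
```

Comparing accuracy against a shuffled-label baseline is one common way to judge whether decoding exceeds chance; repeating the shuffle many times would give a fuller permutation distribution.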