Researchers at the Ruhr-Universität Bochum have debunked the theory that the left brain hemisphere is dominant in the processing of all languages. Until now, it had been assumed that this dominance does not depend on the physical structure of a given language. However, the biopsychologists have demonstrated that both hemispheres are equally involved in the perception of whistled Turkish. Onur Güntürkün, Monika Güntürkün and Constanze Hahn report their findings in the journal “Current Biology”.

Common theory: left hemisphere dominant in language perception

The perception of all spoken languages (including those that use click sounds), of written text and even of sign language involves the left brain hemisphere more strongly than the right one. The right hemisphere, by contrast, specialises in acoustic information such as slow frequencies, pitch and melody. According to the prevailing view, this asymmetry in language processing is not determined by the physical properties of a given language. “The theory can easily be tested by analysing a language that possesses the full range of physical properties the right brain hemisphere specialises in perceiving,” says Onur Güntürkün. “We can count ourselves lucky that such a language exists: whistled Turkish.”

Hearing test with spoken and whistled Turkish

The Bochum team tested 31 inhabitants of Kuşköy, a village in Turkey, who both speak and whistle Turkish. Via headphones, they were presented with either whistled or spoken Turkish syllables. In some test runs, they heard a different syllable in each ear; in others, they heard the same syllable in both. They were asked to state which syllable they had perceived. The left brain hemisphere primarily processes information from the right ear, and the right hemisphere information from the left ear. For spoken Turkish, a clear asymmetry emerged: when the participants heard different syllables, they reported the syllable from the right ear much more frequently, indicating a dominance of the left brain hemisphere. No such asymmetry occurred for whistled Turkish. “The results have shown that brain asymmetries occur at a very early stage of signal processing,” concludes the Bochum researcher.

Turkish researcher first learns of whistled Turkish in Australia

Whistled Turkish has the same vocabulary and follows the same grammatical rules as spoken Turkish. “It is simply a different format, in the same way as written and spoken Turkish are,” explains Onur Güntürkün. A small group of people in the mountainous north-east of Turkey use a whistled language that can be heard over distances of several kilometres. “Even though I am Turkish, I had, strangely enough, never heard of whistled Turkish. I encountered it for the first time in Australia, when a colleague told me about it,” says the biopsychologist. “I knew instantly that nature had provided the perfect way of testing the theory of asymmetry in language perception.”

Source: Dr. Julia Weiler – RUB

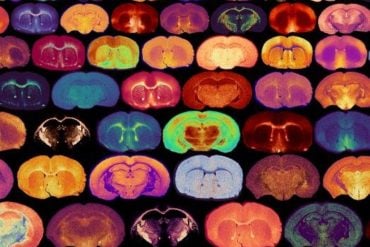

Image Source: The image is credited to Onur Güntürkün

Original Research: Abstract for “Whistled Turkish alters language asymmetries” by Onur Güntürkün, Monika Güntürkün, and Constanze Hahn in Current Biology. Published online August 17, 2015. doi:10.1016/j.cub.2015.06.067

Abstract

Whistled Turkish alters language asymmetries

Whistled languages represent an experiment of nature to test the widely accepted view that language comprehension is to some extent governed by the left hemisphere in a rather input-invariant manner [1]. Indeed, left-hemisphere superiority has been reported for atonal and tonal languages, click consonants, writing and sign languages [2–5]. The right hemisphere is specialized to encode acoustic properties like spectral cues, pitch, and melodic lines and plays a role for prosodic communicative cues [6,7]. Would left hemisphere language superiority change when subjects had to encode a language that is constituted by acoustic properties for which the right hemisphere is specialized? Whistled Turkish uses the full lexical and syntactic information of vocal Turkish, and transforms this into whistles to transport complex conversations with constrained whistled articulations over long distances [8]. We tested the comprehension of vocally vs. whistled identical lexical information in native whistle-speaking people of mountainous Northeast Turkey. We discovered that whistled language comprehension relies on symmetric hemispheric contributions, associated with a decrease of left and a relative increase of right hemispheric encoding mechanisms. Our results demonstrate that a language that places high demands on right-hemisphere typical acoustical encoding creates a radical change in language asymmetries. Thus, language asymmetry patterns are in an important way shaped by the physical properties of the lexical input.
