Summary: The machine learning model may be widely adopted to identify connections between neural activity and natural behavior.

Source: Princeton University

Imagine an attractive person walking toward you. Do you look up and smile? Turn away? Approach but avoid eye contact? The setup is the same, but the outcomes depend entirely on your “internal state,” which includes your mood, your past experiences, and countless other variables that are invisible to someone watching the scene.

So how can an observer decode internal states by watching outward behaviors? That was the challenge facing a team of Princeton neuroscientists. Rather than tackling the intricacies of human brains, they investigated fruit flies, which have fewer behaviors and, one imagines, fewer internal states. They built on prior work studying the songs and movements of amorous Drosophila melanogaster males.

“Our previous work was able to predict a portion of singing behaviors, but by estimating the fly’s internal state, we can accurately predict what the male will sing over time as he courts a female,” said Mala Murthy, a professor of neuroscience and the senior author on a paper appearing in today’s issue of Nature Neuroscience with co-authors Jonathan Pillow, a professor of psychology and neuroscience, and Adam Calhoun, a postdoctoral research fellow at the Princeton Neuroscience Institute (PNI).

Their models use observable variables like the speed of the male or his distance to the female. The researchers identified three separate types of songs, generated by wing vibration, plus the choice not to sing. They then linked the song decisions to the observable variables.

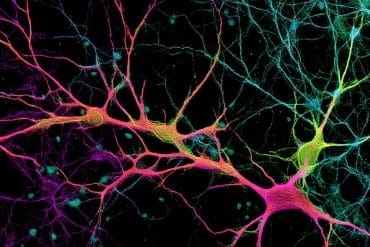

The key was building a machine learning model with a new expectation: animals don’t change their behaviors at random, but based on a combination of feedback that they are getting from the female and the state of their own nervous system. Using their new method, they discovered that males pattern their songs in three distinct ways, each lasting tens to hundreds of milliseconds. They named each of the three states: “Close,” when a male is closer than average to a female and approaching her slowly; “Chasing,” when he is approaching quickly; and “Whatever,” when he is facing away from her and moving slowly. The researchers showed that these states correspond to distinct strategies, and then they identified neurons that can control how the males switch between strategies.
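To make the idea concrete, here is a minimal sketch of a state-dependent model, in which each internal state maps the same observable cues to different song probabilities. It is an illustration under stated assumptions, not the researchers' implementation: the weights and transition probabilities are made-up numbers, the song labels are placeholders, the cues are reduced to speed and distance, and switching between states is simplified to fixed probabilities that ignore feedback from the female.

```python
import math
import random

STATES = ["close", "chasing", "whatever"]
# Placeholder labels: three song types plus the choice not to sing.
SONGS = ["song_a", "song_b", "song_c", "no_song"]

# Hypothetical weights, one (bias, w_speed, w_distance) tuple per song.
# Each state has its own mapping from cues to song choices.
WEIGHTS = {
    "close":    [(0.5, 0.2, -1.0), (0.0, 0.1, -0.5), (-0.5, 0.0, 0.0), (0.0, 0.0, 0.0)],
    "chasing":  [(0.0, 1.0, 0.0), (0.3, 0.5, -0.2), (-0.2, 0.2, 0.1), (0.0, 0.0, 0.0)],
    "whatever": [(-1.0, 0.0, 0.0), (-1.0, 0.0, 0.0), (-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
}

# Hypothetical switching probabilities; row order follows STATES.
TRANSITIONS = {
    "close":    [0.90, 0.07, 0.03],
    "chasing":  [0.10, 0.85, 0.05],
    "whatever": [0.05, 0.05, 0.90],
}


def softmax(scores):
    """Turn arbitrary scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def song_probabilities(state, speed, distance):
    """Probability of each song choice given the internal state and the cues."""
    scores = [b + ws * speed + wd * distance for b, ws, wd in WEIGHTS[state]]
    return softmax(scores)


def simulate(cues, rng, start_state="close"):
    """Sample one (state, song) pair per time step from the toy model."""
    state = start_state
    history = []
    for speed, distance in cues:
        probs = song_probabilities(state, speed, distance)
        song = rng.choices(SONGS, weights=probs)[0]
        history.append((state, song))
        state = rng.choices(STATES, weights=TRANSITIONS[state])[0]
    return history
```

Calling `simulate([(1.0, 2.0)] * 10, random.Random(0))` produces ten time steps of sampled states and songs. The point of the sketch is that identical cues can yield different songs depending on the hidden state, which is why an observer who ignores the state can predict only part of the behavior.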

“This is an important breakthrough,” said Murthy. “We anticipate that this modeling framework will be widely used for connecting neural activity with natural behavior.”

Funding: This work was funded by the Simons Foundation (AWD494712, AWD1004351, and AWD543027), the Brain Research through Advancing Innovative Neurotechnologies (BRAIN) Initiative at the National Institutes of Health (NS104899), and the Howard Hughes Medical Institute.

Media Contacts:

Liz Fuller-Wright – Princeton University

Image Source:

The image is in the public domain.

Original Research: Closed access

“Unsupervised identification of the internal states that shape natural behavior”. Adam J. Calhoun, Jonathan W. Pillow & Mala Murthy.

Nature Neuroscience doi:10.1038/s41593-019-0533-x.

Abstract

Internal states shape stimulus responses and decision-making, but we lack methods to identify them. To address this gap, we developed an unsupervised method to identify internal states from behavioral data and applied it to a dynamic social interaction. During courtship, Drosophila melanogaster males pattern their songs using feedback cues from their partner. Our model uncovers three latent states underlying this behavior and is able to predict moment-to-moment variation in song-patterning decisions. These states correspond to different sensorimotor strategies, each of which is characterized by different mappings from feedback cues to song modes. We show that a pair of neurons previously thought to be command neurons for song production are sufficient to drive switching between states. Our results reveal how animals compose behavior from previously unidentified internal states, which is a necessary step for quantitative descriptions of animal behavior that link environmental cues, internal needs, neuronal activity and motor outputs.
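
One way to picture how latent states might be estimated from behavior is the standard forward filter for a hidden Markov model, sketched below. This is a textbook technique swapped in for illustration; the abstract does not specify the authors' inference procedure. The function name `forward_filter` and its inputs are assumptions: `likelihoods[t][k]` stands for the probability of the song observed at time `t` if the male were in state `k`, given the cues at that moment.

```python
def forward_filter(likelihoods, transition, prior):
    """Running estimate of the hidden state after each observation.

    likelihoods: list over time of per-state observation probabilities
    transition:  transition[j][k] = P(next state k | current state j)
    prior:       initial probability of each state
    """
    n_states = len(prior)
    belief = list(prior)
    posteriors = []
    for lik in likelihoods:
        # Weight the current belief by how well each state explains the song.
        unnormalized = [belief[k] * lik[k] for k in range(n_states)]
        total = sum(unnormalized)
        posterior = [u / total for u in unnormalized]
        posteriors.append(posterior)
        # Propagate the belief one step forward through the transitions.
        belief = [
            sum(posterior[j] * transition[j][k] for j in range(n_states))
            for k in range(n_states)
        ]
    return posteriors
```

Each entry of the returned list is a probability distribution over the three states at one time step. A full unsupervised method would also have to learn the likelihoods and transitions from data, which this sketch takes as given.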