Summary: In the world of AI, bigger is usually seen as better—but this leads to massive energy consumption and computational costs. Taking a cue from human biology, a research team has developed a brain-inspired “selective pruning” framework for Spiking Neural Networks (SNNs).

The study reveals that AI doesn’t need more connections to learn complex tasks; it needs the right ones. By mimicking how an infant’s brain strengthens long-range links while “pruning” away local clutter, this new AI achieves continual learning—mastering perception, motor control, and interaction—while actually getting smaller and more energy-efficient over time.

Key Facts

- The “Infant” Approach: Human brains don’t just add connections; they refine them. This model follows a “simple-to-complex” trajectory, maturing primary modules (like perception) before moving on to higher cognition.

- Selective Pruning: Unlike continual-learning methods that freeze weights to prevent forgetting, this system introduces a feedback mechanism that actively inhibits and removes redundant local connections left over from earlier tasks.

- Knowledge Reuse: While local clutter is pruned, cross-regional “long-range” connections are strengthened. This allows the AI to reuse knowledge from old tasks to solve new ones without needing more “brain” space.

- Less “Catastrophic Forgetting”: A major hurdle in AI is that learning something new often “erases” the old. This developmental framework mitigates that loss without using energy-heavy tricks like “experience replay.”

- Sustainably Evolving: The network scale is continuously reduced as learning progresses, offering a low-energy pathway toward General Cognitive Intelligence.

Source: Science China Press

How does artificial intelligence continue to improve its capabilities?

For a long time, expanding model size has been regarded as an important way to enhance the performance of artificial neural networks, but it has also led to rising energy consumption and growing computational costs.

In contrast, during development the human brain does not simply increase connection density; instead, it continuously gains new cognitive abilities through selective pruning.

Inspired by this contrast, the research team proposed a continual learning framework for spiking neural networks based on temporal development. By establishing and reorganizing connections across different regions over time, the approach learns perception, motor, and interaction tasks continually, from simple to complex, while progressively reducing network size. This offers a new pathway toward low-energy, sustainably evolving general cognitive intelligence.

Temporal Development-Inspired Continual Learning Mechanism

Studies show that brain development follows clear temporal principles: neural connectivity first increases and then becomes refined, with cross-regional long-range connections gradually strengthening while local connections are selectively pruned.

Primary brain regions mature earlier to support higher cognition, and feedback from higher cognitive functions in turn optimizes lower-level structures. Through this process, infants progressively acquire multiple cognitive functions from simple to complex. Building on these principles, the researchers proposed a continual learning method inspired by temporal development.

The approach allows cognitive modules in spiking neural networks to grow progressively following the learning sequence of perception, motor control, and interaction, while evolving cross-regional long-range connections to promote knowledge reuse across tasks.

At the same time, feedback mechanisms are introduced to inhibit and prune redundant local connections from earlier tasks, enabling the network to become increasingly compact as learning progresses.
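To make the mechanism described above more concrete, here is a minimal sketch of one "development step." It is not the researchers' implementation: the `Connection` class, the `develop_step` function, the `inhibition` score, and the `prune_threshold` and `boost` parameters are all hypothetical names chosen for illustration. The sketch only assumes that a connection linking two different modules counts as long-range, and that feedback accumulates as an inhibition score on local connections.

```python
from dataclasses import dataclass


@dataclass
class Connection:
    src: str                 # source module, e.g. "perception"
    dst: str                 # destination module, e.g. "motor"
    weight: float
    inhibition: float = 0.0  # accumulated feedback inhibition (hypothetical)

    @property
    def is_long_range(self) -> bool:
        # Assumption: a connection between two different modules is long-range.
        return self.src != self.dst


def develop_step(connections, feedback, prune_threshold=1.0, boost=1.1):
    """Illustrative step: strengthen long-range links, prune inhibited local ones.

    `feedback` maps a connection's index to the inhibition it receives this step.
    """
    kept = []
    for i, c in enumerate(connections):
        if c.is_long_range:
            # Cross-module links are kept and strengthened for knowledge reuse.
            kept.append(Connection(c.src, c.dst, c.weight * boost, c.inhibition))
            continue
        inhibition = c.inhibition + feedback.get(i, 0.0)
        if inhibition < prune_threshold:
            kept.append(Connection(c.src, c.dst, c.weight, inhibition))
        # Otherwise the local connection is dropped (pruned).
    return kept


net = [
    Connection("perception", "perception", 0.5),  # local, no feedback
    Connection("perception", "perception", 0.2),  # local, strongly inhibited
    Connection("perception", "motor", 0.4),       # long-range
]
net = develop_step(net, feedback={1: 1.5})
print(len(net))  # 2
```

In this toy run the network shrinks from three connections to two: the inhibited local connection is removed, while the perception-to-motor link survives with a slightly larger weight.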

Energy-Efficient Cross-Domain Continual Learning

The research team found that the proposed method demonstrates stable and strong continual learning performance across multiple cognitive domains, including perception, motor control, and interaction, and achieves leading results on several widely used continual learning benchmarks.

Experimental results show that the model learns complex tasks progressively along a “simple-to-complex” trajectory, clearly outperforming direct training or direct pruning approaches.

Even as the network scale is continuously reduced, the model effectively preserves memory of previously learned tasks, significantly mitigating catastrophic forgetting while continuing to acquire new cognitive capabilities.

Further analysis indicates that this performance gain arises from brain-like dynamic changes within the network. As learning progresses, local connections first grow rapidly and are then selectively inhibited and pruned, reducing interference from irrelevant or outdated knowledge, while cross-regional long-range connections are continuously strengthened to support the selective reuse of prior knowledge with shared structure and semantics.

Importantly, this process does not rely on conventional continual learning strategies such as regularization, experience replay, or weight freezing.

The researchers note that this brain-inspired developmental mechanism enhances learning and memory in an efficient, low-energy manner, highlighting the potential of brain developmental principles to drive the next generation of artificial intelligence.

Key Questions Answered:

A: Surprisingly, no. In the human brain, we prune the “static” or redundant local noise to make the important long-range connections faster. This AI does the same: it deletes the specific “clutter” of an old task but keeps the high-level “concepts” in its long-range network, allowing it to remember more with less.

A: SNNs are among the most brain-like forms of AI because their neurons communicate through discrete “pulses” (spikes) rather than continuous values. Combining SNNs with “selective pruning” is what lets this model keep shrinking, and using less energy, as it learns.

A: Currently, as AI gets smarter (like GPT-4), the hardware requirements and electricity bills skyrocket. This model suggests that AI can follow a “biological growth curve,” in which it requires less power and fewer parameters as it matures and becomes an expert.
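For readers curious what processing information in “pulses” looks like, the sketch below simulates a leaky integrate-and-fire neuron, a common textbook spiking model. The article does not say which neuron model the study uses, so this is an assumption for illustration only; the function name `lif_spike_train` and the `threshold` and `leak` values are hypothetical.

```python
def lif_spike_train(inputs, threshold=1.0, leak=0.9):
    """Simulate a leaky integrate-and-fire neuron over a sequence of inputs.

    Returns a list of 0/1 values, where 1 means the neuron spiked at that step.
    """
    v = 0.0  # membrane potential
    spikes = []
    for x in inputs:
        v = leak * v + x      # old potential decays, new input is added
        if v >= threshold:
            spikes.append(1)  # emit a spike
            v = 0.0           # reset the potential after spiking
        else:
            spikes.append(0)  # stay silent
    return spikes


print(lif_spike_train([0.6, 0.6, 0.0, 0.0, 0.3]))  # [0, 1, 0, 0, 0]
```

The neuron is silent on most steps and emits a single spike only once enough input has accumulated. This sparse activity is the usual reason given for why spiking networks can be energy-efficient.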

Editorial Notes:

- This article was edited by a Neuroscience News editor.

- Journal paper reviewed in full.

- Additional context added by our staff.

About this AI and neuroscience research news

Author: Bei Yan

Source: Science China Press

Contact: Bei Yan – Science China Press

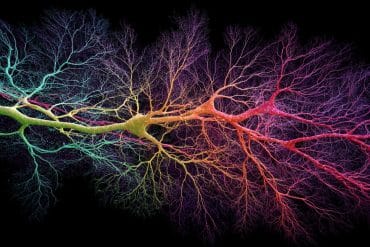

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Continual Learning of Multiple Cognitive Functions with Brain-inspired Temporal Development Mechanism” by Bing Han, Feifei Zhao, Yinqian Sun, Wenxuan Pan, and Yi Zeng. National Science Review

DOI:10.1093/nsr/nwag066

Abstract

Continual Learning of Multiple Cognitive Functions with Brain-inspired Temporal Development Mechanism

Cognitive functions in current artificial intelligence networks are tied to the exponential increase in network scale, whereas the human brain can continuously learn hundreds of cognitive functions with remarkably low energy consumption.

This advantage partly arises from the brain’s cross-regional temporal development mechanisms, where the progressive formation, reorganization, and pruning of connections from basic to advanced regions facilitate knowledge transfer and prevent network redundancy.

Inspired by these, we propose the Continual Learning of Multiple Cognitive Functions with Brain-inspired Temporal Development Mechanism (TD-MCL), enabling cognitive enhancement from simple to complex in Perception-Motor-Interaction (PMI) tasks.

The model drives sequential evolution of long-range inter-module connections to facilitate positive knowledge transfer, and uses feedback-guided local inhibition/pruning to eliminate prior task redundancies, reducing energy consumption while preserving acquired knowledge.

Experiments on the proposed cross-domain PMI dataset and general datasets (CIFAR100, ImageNet) show that the proposed method can achieve continual learning capabilities while reducing network scale, without introducing regularization, replay, or freezing strategies, and achieving superior accuracy on new tasks compared to direct learning.

The proposed method shows that the brain’s developmental mechanisms offer a valuable reference for exploring biologically plausible, low-energy enhancements of general cognitive abilities.