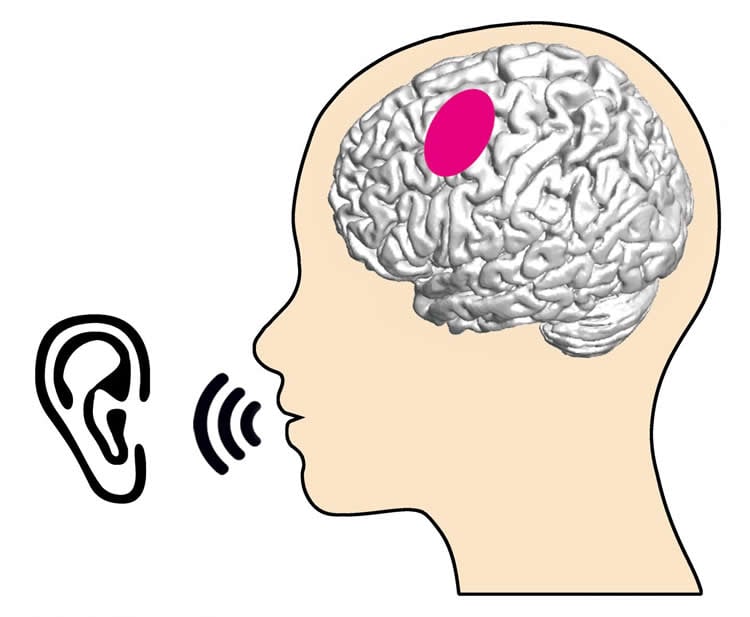

Summary: Researchers report brain areas involved in the articulation of language are also implicated in the perception of language.

Source: University of Freiburg.

Brain regions that are involved in the articulation of language are also active in the perception of language. This finding, by a team from the BrainLinks-BrainTools Cluster of Excellence at the University of Freiburg, makes a significant contribution to clarifying a research question that has been hotly debated for decades. The scientists have published their results in the journal Scientific Reports.

Spontaneous oral communication is a fundamental part of our social life. But what happens in the human brain during it? The neuroscience of language has developed steadily over the past decades thanks to experimental studies. However, little is known about how the brain supports spoken language under everyday, non-experimental, spontaneous conditions. The question of whether brain regions responsible for articulation are also activated during the perception of language has divided scholars into two camps. Some have observed such activation in experimental studies and concluded that it reflects a mechanism that is necessary for the perception of language. Others have not found this activation in their experiments and deduced that it must be rare or possibly does not exist at all.

Both camps nevertheless shared one concern: brain activity in regions relevant to articulation could be affected by the design of the experiment, since experimental conditions differ massively from those of spontaneous language. A study using natural conversations was therefore needed.

Using an extraordinary design, the researchers from Freiburg have succeeded in studying neuronal activity during such conversations. They analyzed brain activity that had been recorded for diagnostic purposes during the everyday conversations of neurological patients, who then donated the recordings for research. The scientists have shown that brain regions relevant to articulation reliably display activity during the perception of spontaneous spoken language. The fact that these regions were not activated when the test subjects heard non-speech noises suggests that this activity may be specific to speech.

Source: Dr. Tonio Ball – University of Freiburg

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to Translational Neurotechnology Lab (Freiburg).

Original Research: Open access research for “Real-life speech production and perception have a shared premotor-cortical substrate” by Olga Glanz (Iljina), Johanna Derix, Rajbir Kaur, Andreas Schulze-Bonhage, Peter Auer, Ad Aertsen & Tonio Ball in Scientific Reports. Published June 11, 2028.

doi:10.1038/s41598-018-26801-x

MLA: University of Freiburg. “What Articulation Relevant Brain Regions Do When We Listen.” NeuroscienceNews. NeuroscienceNews, 2 July 2028. <https://neurosciencenews.com/auditory-articulation-9496/>.

APA: University of Freiburg. (2028, July 2). What Articulation Relevant Brain Regions Do When We Listen. NeuroscienceNews. Retrieved July 2, 2028 from https://neurosciencenews.com/auditory-articulation-9496/

Chicago: University of Freiburg. “What Articulation Relevant Brain Regions Do When We Listen.” https://neurosciencenews.com/auditory-articulation-9496/ (accessed July 2, 2028).

Abstract

Real-life speech production and perception have a shared premotor-cortical substrate

Motor-cognitive accounts assume that the articulatory cortex is involved in language comprehension, but previous studies may have observed such an involvement as an artefact of experimental procedures. Here, we employed electrocorticography (ECoG) during natural, non-experimental behavior combined with electrocortical stimulation mapping to study the neural basis of real-life human verbal communication. We took advantage of ECoG’s ability to capture high-gamma activity (70–350 Hz) as a spatially and temporally precise index of cortical activation during unconstrained, naturalistic speech production and perception conditions. Our findings show that an electrostimulation-defined mouth motor region located in the superior ventral premotor cortex is consistently activated during both conditions. This region became active early relative to the onset of speech production and was recruited during speech perception regardless of acoustic background noise. Our study thus pinpoints a shared ventral premotor substrate for real-life speech production and perception with its basic properties.