Summary: A new artificial intelligence model automatically learns higher-level language patterns that can apply to different languages, enabling it to achieve better results.

Source: McGill University

Human languages are notoriously complex, and linguists have long thought it would be impossible to teach a machine how to analyze speech sounds and word structures in the way humans do.

But researchers from McGill University, MIT, and Cornell University have taken a step in this direction. They have developed an artificial intelligence (AI) system that can learn the rules and patterns of human languages on its own.

When given words and examples of how those words change to express different grammatical functions in one language – like tense, case, or gender – this machine-learning model comes up with rules that explain why the forms of those words change.

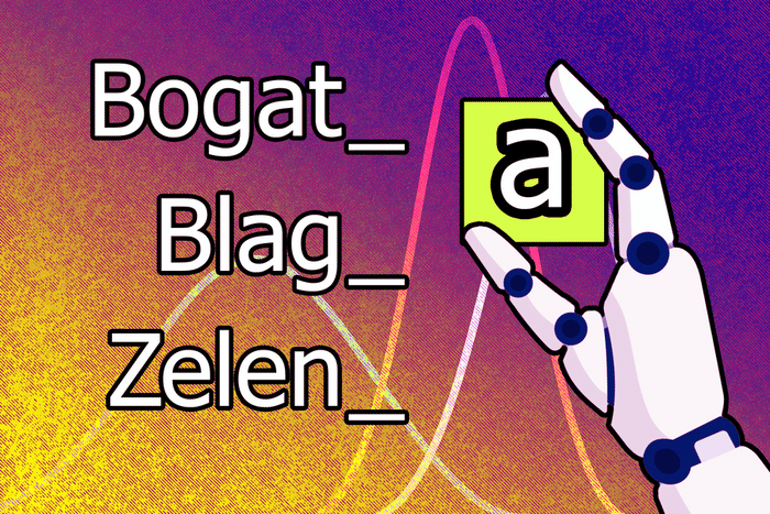

For instance, it might learn that the letter “a” must be added to the end of a word to make the masculine form feminine in Serbo-Croatian.
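To make the idea concrete, here is a deliberately simplified sketch in Python of learning a word-ending rule from a handful of examples. It is not the researchers' system, which combines Bayesian inference with program synthesis. It only compares each base form with its inflected form and keeps the most common change at the end of the word. The function names (`suffix_rule`, `induce_rule`, `apply_rule`) and the short list of masculine and feminine adjective pairs are assumptions chosen for illustration and are not taken from the study's datasets.

```python
from collections import Counter


def suffix_rule(base, inflected):
    """Return (removed, added): what is cut from the end of `base` and what
    is appended to reach `inflected`, after their longest shared prefix."""
    i = 0
    while i < min(len(base), len(inflected)) and base[i] == inflected[i]:
        i += 1
    return base[i:], inflected[i:]


def induce_rule(pairs):
    """Pick the suffix rewrite that explains the most example pairs."""
    counts = Counter(suffix_rule(base, form) for base, form in pairs)
    rule, _ = counts.most_common(1)[0]
    return rule


def apply_rule(rule, word):
    """Apply a (removed, added) suffix rewrite to a new word."""
    removed, added = rule
    if not word.endswith(removed):
        raise ValueError(f"rule does not apply to {word!r}")
    return word[: len(word) - len(removed)] + added


# Illustrative masculine -> feminine adjective pairs (an assumption made
# for this sketch, not data from the paper).
pairs = [("mlad", "mlada"), ("star", "stara"), ("nov", "nova")]

rule = induce_rule(pairs)
print(rule)                       # ('', 'a'): remove nothing, add "a"
print(apply_rule(rule, "zdrav"))  # zdrava
```

A frequency count like this only handles the simplest cases. The system described in the article goes further, searching for rules that also account for sound changes and weighing competing explanations against one another.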

This system could be used to put language theories to the test and investigate subtle similarities in the way diverse languages transform words, say the researchers. “We wanted to see if we can emulate the kinds of knowledge and reasoning that humans bring to the task,” says co-author Adam Albright, a professor of linguistics at MIT.

“The exciting thing about this work is that it shows how we can build algorithms that are able to generalize from really tiny samples of language data, more like human scientists and children,” says senior author Timothy O’Donnell, assistant professor in the Department of Linguistics at McGill University, and Canada CIFAR AI Chair at Mila – Quebec Artificial Intelligence Institute.

About this artificial intelligence research news

Author: Shirley Cardenas

Contact: Shirley Cardenas – McGill University

Image: The image is credited to Jose-Luis Olivares, MIT

Original Research: Open access.

“Synthesizing theories of human language with Bayesian program induction” by Timothy O’Donnell et al. Nature Communications

Abstract

Synthesizing theories of human language with Bayesian program induction

Automated, data-driven construction and evaluation of scientific models and theories is a long-standing challenge in artificial intelligence.

We present a framework for algorithmically synthesizing models of a basic part of human language: morpho-phonology, the system that builds word forms from sounds. We integrate Bayesian inference with program synthesis and representations inspired by linguistic theory and cognitive models of learning and discovery.

Across 70 datasets from 58 diverse languages, our system synthesizes human-interpretable models for core aspects of each language’s morpho-phonology, sometimes approaching models posited by human linguists. Joint inference across all 70 datasets automatically synthesizes a meta-model encoding interpretable cross-language typological tendencies.

Finally, the same algorithm captures few-shot learning dynamics, acquiring new morphophonological rules from just one or a few examples.

These results suggest routes to more powerful machine-enabled discovery of interpretable models in linguistics and other scientific domains.