Summary: A new study updates a theory originally developed to explain how humans learn and highlights its importance as a framework to guide AI.

Source: Cell Press.

Recent breakthroughs in creating artificial systems that outplay humans in a diverse array of challenging games have their roots in neural networks inspired by information processing in the brain. In a Review published June 14 in Trends in Cognitive Sciences, researchers from Google DeepMind and Stanford University update a theory originally developed to explain how humans and other animals learn – and highlight its potential importance as a framework to guide the development of agents with artificial intelligence.

First published in 1995, the theory states that learning is the product of two complementary learning systems. The first system gradually acquires knowledge and skills from exposure to experiences, and the second stores specific experiences so that these can be replayed to allow their effective integration into the first system. The paper built on an earlier theory by influential British computational neuroscientist David Marr and on then-recent discoveries in neural network learning methods.

“The evidence seems compelling that the brain has these two kinds of learning systems, and the complementary learning systems theory explains how they complement each other to provide a powerful solution to a key learning problem that faces the brain,” says Stanford Professor of Psychology James McClelland, lead author of the 1995 paper and senior author of the current Review.

The first system in the proposed theory, placed in the neocortex of the brain, was inspired by precursors of today’s deep neural networks. As with today’s deep networks, these systems contain several layers of neurons between input and output, and the knowledge in these networks is in their connections. Furthermore, their connections are gradually programmed by experience, giving rise to their ability to recognize objects, perceive speech, understand and produce language, and even to select optimal actions in game-playing and other settings where intelligent action depends on acquired knowledge.

Such systems face a dilemma when new information must be learned: if the connections are changed enough to force the new knowledge in quickly, those changes will radically distort all of the other knowledge already stored in the same connections.

“That’s where the complementary learning system comes in,” McClelland says. In humans and other mammals, this second system is located in a structure called the hippocampus. “By initially storing information about the new experience in the hippocampus, we make it available for immediate use and we also keep it around so that it can be replayed back to the cortex, interleaving it with ongoing experience and stored information from other relevant experiences.” This two-system set-up therefore allows both immediate learning and gradual integration into the structured knowledge representation in the neocortex.
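The dilemma and its remedy can be illustrated with a toy example. The sketch below is not the model from the Review; it assumes a single linear unit with two connection weights trained by the delta rule, and the items, learning rates, and function names are all made up for illustration. One large update forces a new item in quickly and damages an old one, while small interleaved updates on both items let the unit learn the new item and keep the old.

```python
def predict(weights, inputs):
    """Output of a single linear unit."""
    return sum(w * x for w, x in zip(weights, inputs))


def train(weights, examples, lr, steps):
    """Delta-rule updates, cycling through `examples` in order."""
    weights = list(weights)
    for step in range(steps):
        inputs, target = examples[step % len(examples)]
        error = target - predict(weights, inputs)
        weights = [w + lr * error * x for w, x in zip(weights, inputs)]
    return weights


def squared_error(weights, example):
    inputs, target = example
    return (target - predict(weights, inputs)) ** 2


# Hypothetical items that share the first input, so they share a connection.
OLD_ITEM = ((1.0, 0.0), 1.0)
NEW_ITEM = ((1.0, 1.0), 0.0)

# Slowly learn the old item first.
w_old = train([0.0, 0.0], [OLD_ITEM], lr=0.1, steps=500)

# Option 1: force the new item in with one large update.
w_forced = train(w_old, [NEW_ITEM], lr=0.5, steps=1)

# Option 2: small updates, interleaving the new item with replays of the old.
w_interleaved = train(w_old, [OLD_ITEM, NEW_ITEM], lr=0.1, steps=2000)

for name, w in [("forced", w_forced), ("interleaved", w_interleaved)]:
    print(name,
          "old-item error:", round(squared_error(w, OLD_ITEM), 4),
          "new-item error:", round(squared_error(w, NEW_ITEM), 4))
```

In this toy setting the forced update fits the new item at once but leaves a squared error of about 0.25 on the old item, whereas interleaving drives both errors close to zero, at the cost of many more update steps.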

“Components of the neural network architecture that succeeded in achieving human-level performance in a variety of computer games like Space Invaders and Breakout were inspired by complementary learning systems theory,” says DeepMind cognitive neuroscientist Dharshan Kumaran, the first author of the Review. “As in the theory, these neural networks exploit a memory buffer akin to the hippocampus that stores recent episodes of game play and replays them in interleaved fashion. This greatly amplifies the use of actual game play experience and avoids the tendency for a particular local run of experience to dominate learning in the system.”
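A memory buffer of the kind Kumaran describes can be sketched in a few lines. This is a minimal illustration, not DeepMind's implementation: the class name `ReplayBuffer`, its methods, and the dictionary format of a stored episode are assumptions made for the example. The buffer keeps only the most recent episodes and hands back random batches, so that learning does not follow the order in which the game was played.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of recent episodes, sampled at random for replay."""

    def __init__(self, capacity, seed=None):
        # A deque with maxlen silently drops the oldest entry when full.
        self._episodes = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def store(self, episode):
        self._episodes.append(episode)

    def sample(self, batch_size):
        # Draw without replacement so one batch mixes different episodes.
        k = min(batch_size, len(self._episodes))
        return self._rng.sample(list(self._episodes), k)

    def __len__(self):
        return len(self._episodes)


# Usage: ten steps of (hypothetical) game play, buffer holds the last five.
buffer = ReplayBuffer(capacity=5, seed=0)
for step in range(10):
    buffer.store({"step": step, "reward": step % 2})

batch = buffer.sample(3)
print(len(buffer), sorted(e["step"] for e in batch))
```

Because each stored episode can be drawn many times, a single stretch of play contributes to many updates, and random sampling keeps any one run of consecutive steps from dominating a batch.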

Kumaran has collaborated both with McClelland and with DeepMind co-founder Demis Hassabis (also a co-author on the Review), in work that extended the role of the hippocampus as it was envisioned in the 1995 version of the complementary learning systems theory.

“In my view,” says Hassabis, “the extended version of the complementary learning systems theory is likely to continue to provide a framework for future research, not only in neuroscience but also in the quest to develop Artificial General Intelligence, our goal at Google DeepMind.”

Funding: The researchers were supported by the New York State Stem Cell Science and the Starr Foundation. The work was further supported in part by the National Institutes of Health and the National Cancer Institute.

Source: Joseph Caputo – Cell Press

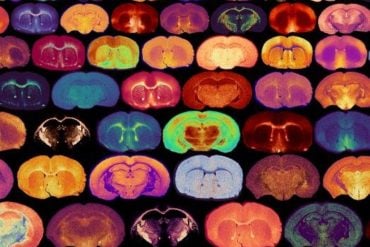

Image Source: This NeuroscienceNews.com image is credited to Kumaran et al./Trends in Cognitive Sciences.

Original Research: Full open access research for “What Learning Systems do Intelligent Agents Need? Complementary Learning Systems Theory Updated” by Dharshan Kumaran, Demis Hassabis, and James L. McClelland in Trends in Cognitive Sciences. Published online June 14, 2016. doi:10.1016/j.tics.2016.05.004

MLA: Cell Press. “How Insights into Human Learning Can Foster Smarter Artificial Intelligence.” NeuroscienceNews. NeuroscienceNews, 14 June 2016. <https://neurosciencenews.com/ai-human-learning-4468/>.

APA: Cell Press. (2016, June 14). How Insights into Human Learning Can Foster Smarter Artificial Intelligence. NeuroscienceNews. Retrieved June 14, 2016 from https://neurosciencenews.com/ai-human-learning-4468/

Chicago: Cell Press. “How Insights into Human Learning Can Foster Smarter Artificial Intelligence.” https://neurosciencenews.com/ai-human-learning-4468/ (accessed June 14, 2016).

Abstract

What Learning Systems do Intelligent Agents Need? Complementary Learning Systems Theory Updated

We update complementary learning systems (CLS) theory, which holds that intelligent agents must possess two learning systems, instantiated in mammalians in neocortex and hippocampus. The first gradually acquires structured knowledge representations while the second quickly learns the specifics of individual experiences. We broaden the role of replay of hippocampal memories in the theory, noting that replay allows goal-dependent weighting of experience statistics. We also address recent challenges to the theory and extend it by showing that recurrent activation of hippocampal traces can support some forms of generalization and that neocortical learning can be rapid for information that is consistent with known structure. Finally, we note the relevance of the theory to the design of artificial intelligent agents, highlighting connections between neuroscience and machine learning.

Trends

Discovery of structure in ensembles of experiences depends on an interleaved learning process both in biological neural networks in neocortex and in contemporary artificial neural networks.

Recent work shows that once structured knowledge has been acquired in such networks, new consistent information can be integrated rapidly.

Both natural and artificial learning systems benefit from a second system that stores specific experiences, centred on the hippocampus in mammalians.

Replay of experiences from this system supports interleaved learning and can be modulated by reward or novelty, which acts to rebalance the general statistics of the environment towards the goals of the agent.

Recurrent activation of multiple memories within an instance-based system can be used to discover links between experiences, supporting generalization and memory-based reasoning.
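The idea that replay can be modulated by reward, shifting the statistics of what is relearned towards the agent's goals, can be sketched as weighted sampling. The weighting rule, the `reward_bonus` parameter, and the memory format below are illustrative assumptions and are not taken from the Review.

```python
import random


def replay_weights(memories, reward_bonus=9.0):
    """Every memory gets a base weight of 1; rewarded ones get a bonus."""
    return [1.0 + reward_bonus * m["reward"] for m in memories]


def sample_replay(memories, n, rng, reward_bonus=9.0):
    """Draw n memories for replay, with replacement, in proportion to weight."""
    weights = replay_weights(memories, reward_bonus)
    return rng.choices(memories, weights=weights, k=n)


# Ten stored experiences; only the first one was rewarded.
memories = [{"id": i, "reward": 1.0 if i == 0 else 0.0} for i in range(10)]

rng = random.Random(0)
replayed = sample_replay(memories, 10_000, rng)
rewarded_share = sum(m["id"] == 0 for m in replayed) / len(replayed)
print("share of stored experience:", 1 / len(memories))
print("share of replay:", round(rewarded_share, 3))
```

Under these assumed weights the rewarded experience makes up one tenth of what was stored but roughly half of what is replayed (10/19 in expectation), which is the kind of rebalancing the Trends list refers to.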
