Summary: Can you tell the difference between a real human voice and an AI-generated one? According to a new study, your conscious mind might struggle, but your brain is already picking up the clues.

Researchers found that while human listeners are remarkably bad at distinguishing “deepfake” speech from real voices—even after training—their brain activity tells a different story. Neural recordings showed that brief training makes the brain’s responses to AI vs. human speech significantly more distinct, suggesting that our auditory systems are adapting to AI faster than our conscious decision-making can keep up.

Key Facts

- The “Conscious” Fail: Participants could not reliably tell human voices from AI voices, and a brief training session improved their accuracy only minimally.

- The “Neural” Win: At the neural level, training made the brain’s responses to AI and human speech measurably more distinct. The brain began “tagging” AI speech differently from human speech, even when the listener couldn’t verbalize why.

- Subtle Cues: The auditory system appears to pick up on subtle acoustic differences between AI and human speech that the conscious mind hasn’t yet learned to prioritize.

- Adaptive Potential: The findings suggest that humans are in an “adaptation phase” with AI content. The biological signals are present; we just haven’t learned how to use those “cues” for behavior yet.

- Fighting Fraud: This research provides a starting point for developing better training tools to help people identify deepfake scams and voice-cloning fraud.

Source: SfN

In a collaboration between Tianjin University and the Chinese University of Hong Kong, researchers led by Xiangbin Teng used behavioral and brain activity measures to explore whether people can distinguish between AI-generated and human speech. The researchers also assessed whether brief training improves this ability.

This work is published in eNeuro.

Thirty participants listened to sentences spoken by people or by AI-generated voices and judged whether each speaker was human or AI, both before and after a short training session. The researchers found that participants were poor at discriminating between the two types of speakers, and that training helped only minimally.

However, on a neural level, training made the brain’s responses more distinct for human versus AI speech. What might that mean?

Says Teng, “The auditory brain system seems to start picking up subtle acoustic differences, even if people can’t reliably turn that into a behavioral decision yet. That’s encouraging—it suggests training can help, and it’s a promising starting point for building better ways to distinguish deepfake speech from real human speech.

“Humans are still adapting to AI-generated content, so poor performance doesn’t mean the signals aren’t there—it may mean we’re not yet using the right cues.”

Key Questions Answered:

A: Because AI has become incredibly good at mimicking human prosody (the rhythm and melody of speech). Our conscious minds are easily fooled by the “emotional” sound of a voice. However, this study shows that underneath that layer, the AI still leaves a “digital footprint” in the sound waves that our auditory cortex can detect.

A: There is a gap between “perception” and “decision.” Your auditory system is registering the subtle acoustic differences, but your brain hasn’t yet connected those signals to the “This is a Fake” button in your mind. It’s like hearing a tiny crack in a glass; you hear the sound, but you might not realize it means the glass is about to break until you’ve been trained to recognize that specific signal.

A: We’re getting there! While the training in this study only helped a little bit behaviorally, the fact that the brain changed its response is a huge win. It means the “data” is there. Future training programs could focus on teaching people to listen for the specific cues their brains are already detecting.

Editorial Notes:

- This article was edited by a Neuroscience News editor.

- Journal paper reviewed in full.

- Additional context added by our staff.

About this AI and auditory neuroscience research news

Author: SfN Media

Source: SfN

Contact: SfN Media – SfN

Image: The image is credited to Neuroscience News

Original Research: Closed access.

“Short-Term Perceptual Training Modulates Neural Responses to Deepfake Speech but Does Not Improve Behavioral Discrimination” by Jinghan Yang, Haoran Jiang, Yanru Bai, Guangjian Ni and Xiangbin Teng. eNeuro

DOI:10.1523/ENEURO.0300-25.2025

Abstract

Short-Term Perceptual Training Modulates Neural Responses to Deepfake Speech but Does Not Improve Behavioral Discrimination

Rapid advancements in artificial intelligence (AI) have enabled text-to-speech (TTS) systems to produce voices increasingly indistinguishable from humans, posing significant societal risks, particularly through potential misuse in fraud and deception.

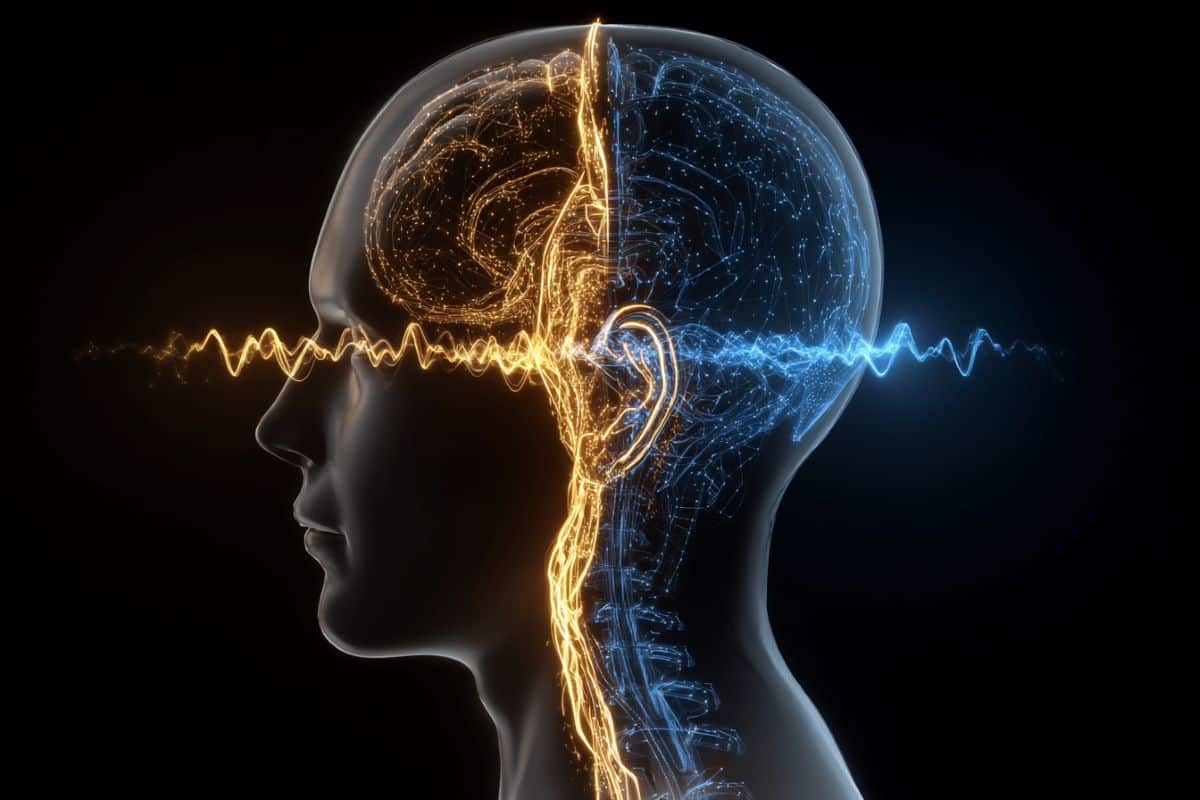

To address this concern, this study combined behavioral assessments and neural measures using electroencephalography (EEG) to examine whether short-term perceptual training enhances people’s ability to distinguish AI-generated from human speech.

Thirty participants (of either sex) listened to sentences produced by human speakers and corresponding AI-generated clones, judging each sentence as either human or AI-generated before and after a brief (∼12-minute) training session, during which voices were explicitly labeled as “human” or “AI”.

Behaviorally, participants showed consistently poor discrimination before and after training, with only minimal improvement. However, neural analyses revealed substantial training-induced changes.
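The abstract does not say how discrimination was scored. As an illustration only, one standard way to quantify a human-versus-AI judgment is the sensitivity index d′ from signal detection theory, where a value near zero means the listener is effectively guessing. The sketch below assumes that an AI sentence judged as AI counts as a "hit" and a human sentence judged as AI counts as a "false alarm"; the function name `d_prime` and the trial counts are hypothetical and are not taken from the study.

```python
from statistics import NormalDist


def d_prime(hits, misses, false_alarms, correct_rejections):
    """Sensitivity index d' = z(hit rate) - z(false-alarm rate).

    Assumed labeling (not from the study): "hit" = AI voice judged AI,
    "false alarm" = human voice judged AI.
    """
    # Log-linear correction: add 0.5 to each cell so that rates of
    # exactly 0 or 1 never reach the inverse normal CDF.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(fa_rate)


# Hypothetical counts: a listener at chance, and one who discriminates well.
chance_listener = d_prime(hits=25, misses=25, false_alarms=25, correct_rejections=25)
good_listener = d_prime(hits=40, misses=10, false_alarms=10, correct_rejections=40)
```

Comparing such a score before and after training is one way a "minimal improvement" could be expressed as a number, though the study may have used a different measure.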

Specifically, temporal response function (TRF) analysis identified significant neural differentiation between speech types at early (∼55 ms, ∼210 ms) and later (∼455 ms) auditory processing stages following training. Additional EEG analyses, including spectral power and decoding, were conducted to further investigate training effects, but these measures revealed limited differentiation.
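For readers unfamiliar with TRF analysis: a temporal response function is commonly estimated as a regularized linear filter that maps a stimulus feature, such as the speech envelope, onto the EEG at a range of time lags. The sketch below is a minimal illustration on synthetic data, not the authors' pipeline. The helper names `lagged_design` and `estimate_trf`, the 100 Hz sampling rate, the ridge value, and the simulated response are all assumptions made for the example.

```python
import numpy as np


def lagged_design(stimulus, n_lags):
    """Build a matrix whose column k is the stimulus delayed by k samples."""
    n = len(stimulus)
    X = np.zeros((n, n_lags))
    for lag in range(n_lags):
        X[lag:, lag] = stimulus[:n - lag]
    return X


def estimate_trf(stimulus, eeg, n_lags, ridge=1.0):
    """Ridge regression solving eeg ~ X @ trf for the filter weights."""
    X = lagged_design(stimulus, n_lags)
    XtX = X.T @ X + ridge * np.eye(n_lags)
    return np.linalg.solve(XtX, X.T @ eeg)


# Synthetic demo (all values are assumptions for illustration).
rng = np.random.default_rng(0)
fs = 100                      # sampling rate in Hz
n_lags = 50                   # lags covering 0 to 490 ms
stimulus = rng.standard_normal(6000)

# Simulated "true" response with components near 50 ms and 210 ms.
true_trf = np.zeros(n_lags)
true_trf[5] = 1.0
true_trf[21] = -0.5

# Simulated single-channel EEG: filtered stimulus plus noise.
eeg = lagged_design(stimulus, n_lags) @ true_trf + 0.5 * rng.standard_normal(6000)

trf = estimate_trf(stimulus, eeg, n_lags, ridge=1.0)
peak_latency_ms = 1000 * np.argmax(np.abs(trf)) / fs
```

In an analysis of this kind, one TRF would be estimated per speech type (human and AI), and the two would then be compared at each latency. A difference at a given lag is what "neural differentiation" at, for example, ∼55 ms refers to.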

The findings here highlight a dissociation between behavioral and neural sensitivity: while listeners struggle to behaviorally discriminate sophisticated AI-generated voices, their auditory systems rapidly adapt to subtle acoustic differences following short-term exposure.

Understanding this neural-behavioral dissociation is crucial for developing effective perceptual training protocols and informing policies to mitigate societal threats posed by increasingly realistic synthetic voices.